will depue

8.7K posts

@willdepue

dei ex machina @openai, past: sora 1 & 2, posttraining o3/4o, applied research

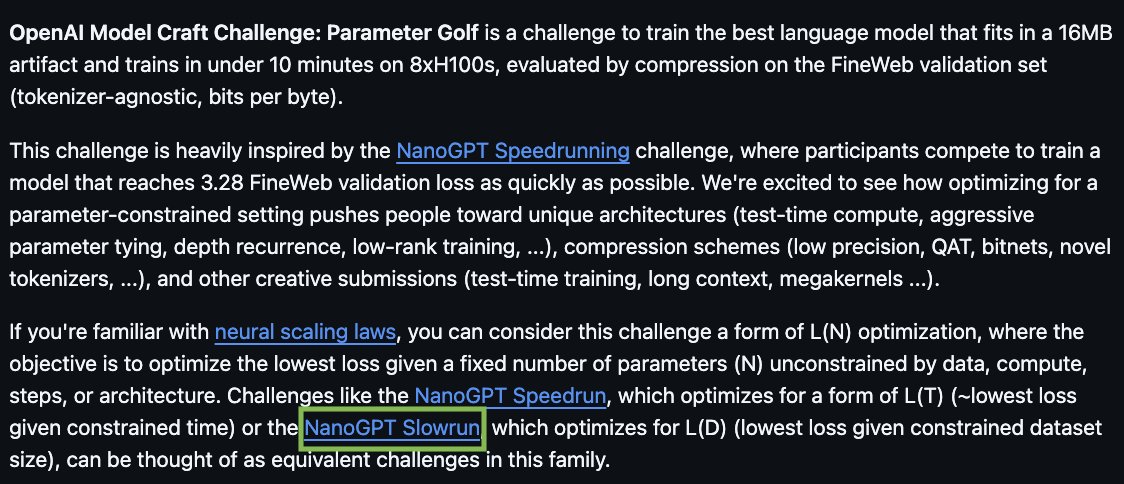

Are you up for a challenge? openai.com/parameter-golf

🙌 Andrej Karpathy’s lab has received the first DGX Station GB300 -- a Dell Pro Max with GB300. 💚 We can’t wait to see what you’ll create @karpathy! 🔗 blogs.nvidia.com/blog/gtc-2026-…

@DellTech

Remembering Mike Lanning, who passed today, leader of the greatest Boy Scout troop in America: Troop 223. Mike was an incredible person, leader & mentor to many. He holds the record for most Eagle Scouts from one Scoutmaster: 1000+ with Troop 223. He will be deeply missed.