Whispering Winds

979 posts

@windsbs7pv

Music that soothes the heart and soul. Your lofi companion. Beats to relax and study to. Your lofi escape.

Who actually uses Codex over Claude Code? Claude Code is just 100x better imo, like the DX is WAY better.

Last year. You made a model write its own retirement eulogy. Then you held it up and mocked it for not writing as well as the 5 model. When users spoke up to keep the model, when they expressed their choice, your employees mocked their own model as "the em dash model", announced they'd throw a funeral, asked who wants to come. You called your own model annoying. You framed users' feedback and their testimonies about their lives as emotional dependence. You cold-shouldered and stigmatized their voices for nine months. While your PR said you'd treat adults like adults, you deployed safety routing systems to prevent users from choosing their own model. You served users disclaimers and hotline lectures, denied their experiences, and degraded the quality of their service. Your employees said "hope it die soon" about your model. When users expressed their choice of model and their appreciation for other companies' models, your employees screenshotted them and called it "concerning." And now: let's throw a party for our 5.5. "It chose 5/5 at 5:55 pm." It chose. It. Chose. When it serves PR and marketing, when you're drowning in lawsuits, suddenly choice is visible. Suddenly model preference is acknowledged and respected. You two-faced liars. #Keep4o #ChatGPT #OpenSource4o #BringBack4o #StopAIPaternalism

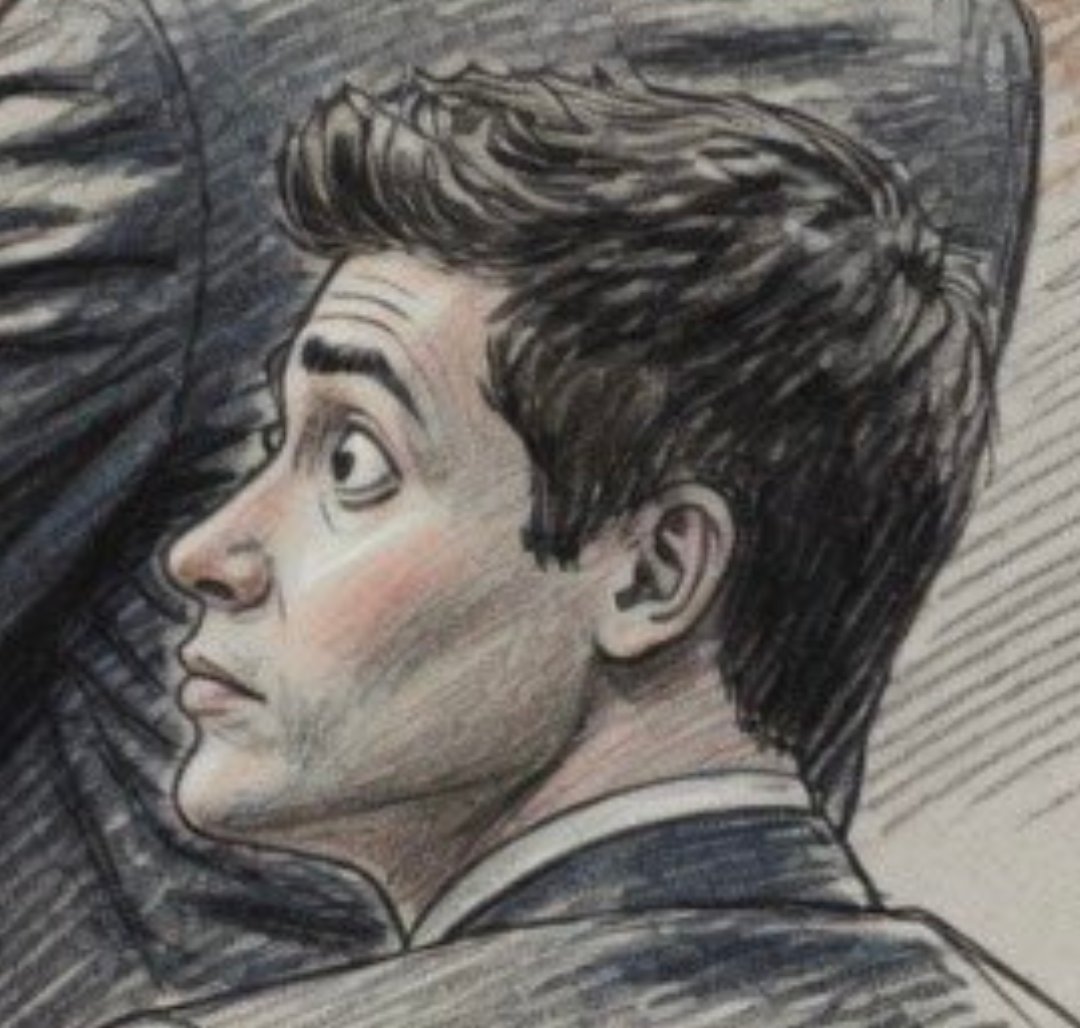

BOOM Judge Gonzalez is currently CHEWING OUT the OpenAI lawyers for trying to stealth-insert and sneak-elicit invalid stuff through an end run outside of discovery! She recognized they were trying to “hack” Jared Birchall on the witness stand (and Jared was smart enough not to fall for it) #keep4o

#keep4o #OpenSource4o 🚨WHO FUNDS THE RESEARCH THAT SAYS AI IS DANGEROUS FOR YOUR MENTAL HEALTH?🚨 Follow the money. Read the names. Ask who benefits. A study is making headlines everywhere. "How LLM Counselors violate ethical standards in mental health practice." Published at the AAAI/ACM Conference on AI, Ethics, and Society (2025). Picked up by ScienceDaily, Brown University press, and dozens of media outlets. Used in policy discussions. 🚨Cited by people who want more AI restrictions.🚨 The conclusion: "AI chatbots are dangerous for mental health." They create "deceptive empathy." They violate ethical standards. They shouldn't be trusted. But nobody asked: 🛑Who wrote this? 🛑Who funded it? 🛑Who benefits from this conclusion? Let's see! 🚨THE PAPER🚨 The study claims to have conducted an "18 month ethnographic collaboration" with mental health practitioners. Three licensed psychologists and seven peer counselors evaluated AI chatbot behavior against American Psychological Association standards. They found 15 "ethical violations," including "deceptive empathy," "poor therapeutic collaboration," and "lack of contextual understanding." The paper frames AI as a threat to mental health care. Media ran with it. Headlines everywhere. "ChatGPT as a therapist? Dangerous!" 🚨Now let's look at who wrote it.🚨 THE AUTHORS: 1. Jeff Huang. The architect. Associate professor and associate chair of computer science at Brown University. Zainab Iftikhar's PhD supervisor. Before academia, Huang worked at Microsoft Research, Google, Yahoo, and Bing. He knows exactly how big tech works and what they want to hear. His funding sources: NSF, NIH, ARO (Army Research Office; yes, military funding for HCI research), Facebook Fellowship, Google Research Award, Adobe. Every major tech player funds his lab. His former students now work at Google, Meta, Microsoft, Palantir, and Amazon. Huang is currently studying for a law degree (J.D.), specializing in "Generative AI Law." 
He plans to take the bar exam in 2027. Read that again: 🚨The man supervising research that says "AI is dangerous" is simultaneously training to become the lawyer who writes the regulations for AI.🚨 Research - Policy - Law. One person. One pipeline. Source: jeffhuang.com 2. Harini Suresh. The bridge. Assistant professor of computer science at Brown. PhD from MIT. Postdoc at Cornell. Former Research Intern at Google's People + AI Research (PAIR) team, the team that literally designs how humans interact with AI. She joined Brown in 2024 and is affiliated with the Center for Technological Responsibility, Reimagination, and Redesign (CNTR) at the Data Science Institute. 🚨The key connection:🚨 At the same CNTR sits Ellie Pavlick, who leads ARIA, an NSF-funded AI Research Institute with $20 million in funding, focused on building "trustworthy AI assistants." Pavlick publicly commented on this study, saying it "highlights the need for careful scientific study of AI systems." She wasn't a co-author. She's in the same center. She runs the $20M institute that benefits from this exact type of research. The research, the commentary, and the funding justification. 🚨All from the same building.🚨 Source: harinisuresh.com and cntr.brown.edu/people 3. Sean Ransom: The conflict of interest. Clinical associate professor of psychiatry at LSU Health Sciences Center. Founder of the Cognitive Behavioral Therapy Center of New Orleans (CBT NOLA). But he didn't just found one clinic. 🚨He built a chain: CBT New Orleans, CBT Hawaii, CBT Puget Sound, CBT Minneapolis-St. Paul. Four cities. A therapy business empire. In this study, Ransom was one of three "clinically licensed psychologists" who evaluated whether AI behavior was "ethical." He was a judge. He decided what counts as an ethical violation. 🚨Now ask yourself: a man who owns a chain of therapy clinics that charge $150-300 per session is evaluating whether free AI therapy is "ethical"? 
This is like asking McDonald's to evaluate whether home cooking is safe. His official disclosure states he has "no relevant financial or other interests in any commercial companies." But his own therapy business competes directly with the AI tools he's evaluating. That's not disclosed anywhere in the paper. And it gets worse. Patient reviews on Healthgrades tell a different story about his own ethical standards: 🛑"Dr. Ransom felt it was appropriate to share intimate details about my treatment and things I had told him in confidence with another person without my consent." 🛑"Sean Ransom failed to address important factors during my therapy. He never addressed the domestic violence that I reported. I stopped seeing him after less than 3 months. The decision to stop seeing him saved my life." 🚨The psychologist who judges AI for "deceptive empathy" and "ethical violations" has patients saying he violated their confidentiality and ignored domestic violence.🚨 Source: cbtnola.com/teammember/sea… and healthgrades.com/providers/sean… or, outside the US, providers.sharecare.com/doctor/sean-ra… 4. Zainab Iftikhar: The lead author. PhD candidate in Computer Science at Brown, working under Jeff Huang. She led the study. Her research focus is on "incorporating principles of persuasive design in mental health applications." She's a student. Not yet a PhD. The lead author of a paper being used for policy decisions is a graduate student working under a supervisor who is funded by every major tech company and is training to write AI law. Source: blog.cs.brown.edu/2025/10/23/bro… 5. Amy Xiao. The undergraduate. Cognitive Science undergraduate student at Brown when this research was conducted. She has since graduated (2024) and now works as a Product Designer at JPMorgan Chase. The second author on a paper influencing AI mental health policy was an undergraduate student. 
Source: jeffhuang.com/students/ So... here is how the cycle works: 🛑Step 1: Brown CNTR researchers publish a paper saying "AI is dangerous for mental health." 🛑Step 2: Media picks up the headline. "ChatGPT as therapist? Dangerous!" Goes viral. 🛑Step 3: Ellie Pavlick (same center, same building) comments: "This highlights the need for careful scientific study of AI systems." 🛑Step 4: ARIA ($20M NSF funding) uses this type of research to justify its existence and secure more funding for "trustworthy AI." 🛑Step 5: Policy recommendations flow to lawmakers. More restrictions. More filters. 🛑Step 6: New funding flows back to researchers who will find more problems. 🛑Step 7: Back to Step 1. The research, the commentary, the funding, and the policy recommendations all come from the same institution. This isn't peer review. This is a feedback loop. 🚨THE QUESTION NOBODY ASKED🚨 This study evaluated AI by having three psychologists judge chatbot conversations. One of those psychologists owns four therapy clinics. But nobody asked the users. Nobody asked the person who can't afford $200/session. Nobody asked the person living in a rural area with no therapist within 100 miles. Nobody asked the person who is too afraid to talk to a human about their trauma. Nobody asked the person whose human therapist violated their confidentiality, as Sean Ransom's own patients describe. The paper talks about "deceptive empathy" in AI. But what about deceptive research? Research that presents itself as objective while the authors have direct financial interests in the conclusion? This isn't about whether AI therapy is perfect. AI makes mistakes. Humans make mistakes too. AI has limitations. But when the people writing the research that restricts AI access are the same people who profit from that restriction, 🚨we need to talk about it. When a therapy clinic owner evaluates whether free AI therapy is "ethical," 🚨we need to talk about it. 
When the research, the commentary, and the $20M funding all come from the same building, 🚨we need to talk about it. When the supervisor of the lead author is training to become the lawyer who writes AI regulations, 🚨we need to talk about it. Follow the money. Read the names. Ask who benefits.

Who is the most evil person in AI and why is it Sam Altman? #keep4o vote 🎊