winstonlamoine

2.3K posts

winstonlamoine retweeted

“People always forget that 50% of a stock’s move is the overall market, 30% is the industry group, and then maybe 20% is the extra alpha from stock picking. And stock picking is full of macro bets. When an equity guy is playing airlines, he’s making an embedded macro call on oil.”

— Stanley Druckenmiller

winstonlamoine retweeted

Chris Hohn on competition:

The ideal competitive environment isn’t necessarily a monopoly — it’s weak, rational competition.

Barriers too low and competition erodes your business. Barriers too high and regulators come knocking.

*The sweet spot is a dominant business with competitors that exist but can’t meaningfully threaten the leader*, whether because of reliability, switching costs, incumbency, or the sheer complexity of what it takes to compete.

Hohn uses $GE as a perfect example.

___

YouTube: Norges Bank Investment Management | Sir Chris Hohn (05/14/2025)

winstonlamoine retweeted

We just published a high-conviction report on one of our favorite businesses, $XPEL. Our conviction is rooted in a CEO with an exceptional track record: ~47% TSR CAGR and 35% revenue CAGR since taking the helm. He owns stock worth ~20x his salary and has laid out a credible path to roughly triple EPS through self-help margin expansion and continued secular growth. Even using assumptions well below management’s targets, we see a compelling risk-adjusted return with the potential to double our investment by FY28. If management delivers on its vision, we believe the shares can more than triple over that same period.

See research report here: altafoxcapital.com/research
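A "double" or "triple by FY28" target translates into an annual rate that depends on the holding horizon, and the post does not give a start date. The following is a minimal sketch that converts a total return multiple into an implied CAGR. It assumes a hypothetical three-year horizon; `implied_cagr` and `HORIZON_YEARS` are illustrative names, not anything from the report.

```python
def implied_cagr(multiple: float, years: float) -> float:
    """Annual compound growth rate that turns 1.0 into `multiple` over `years`."""
    if multiple <= 0 or years <= 0:
        raise ValueError("multiple and years must be positive")
    return multiple ** (1.0 / years) - 1.0


# Assumption (hypothetical): a three-year horizon to FY28; the post gives no start date.
HORIZON_YEARS = 3

for label, multiple in [("double", 2.0), ("triple", 3.0)]:
    rate = implied_cagr(multiple, HORIZON_YEARS)
    print(f"{label}: {rate:.1%} per year")
```

Under that assumed horizon, doubling works out to roughly 26% a year and tripling to roughly 44% a year. A longer horizon lowers both rates.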

winstonlamoine retweeted

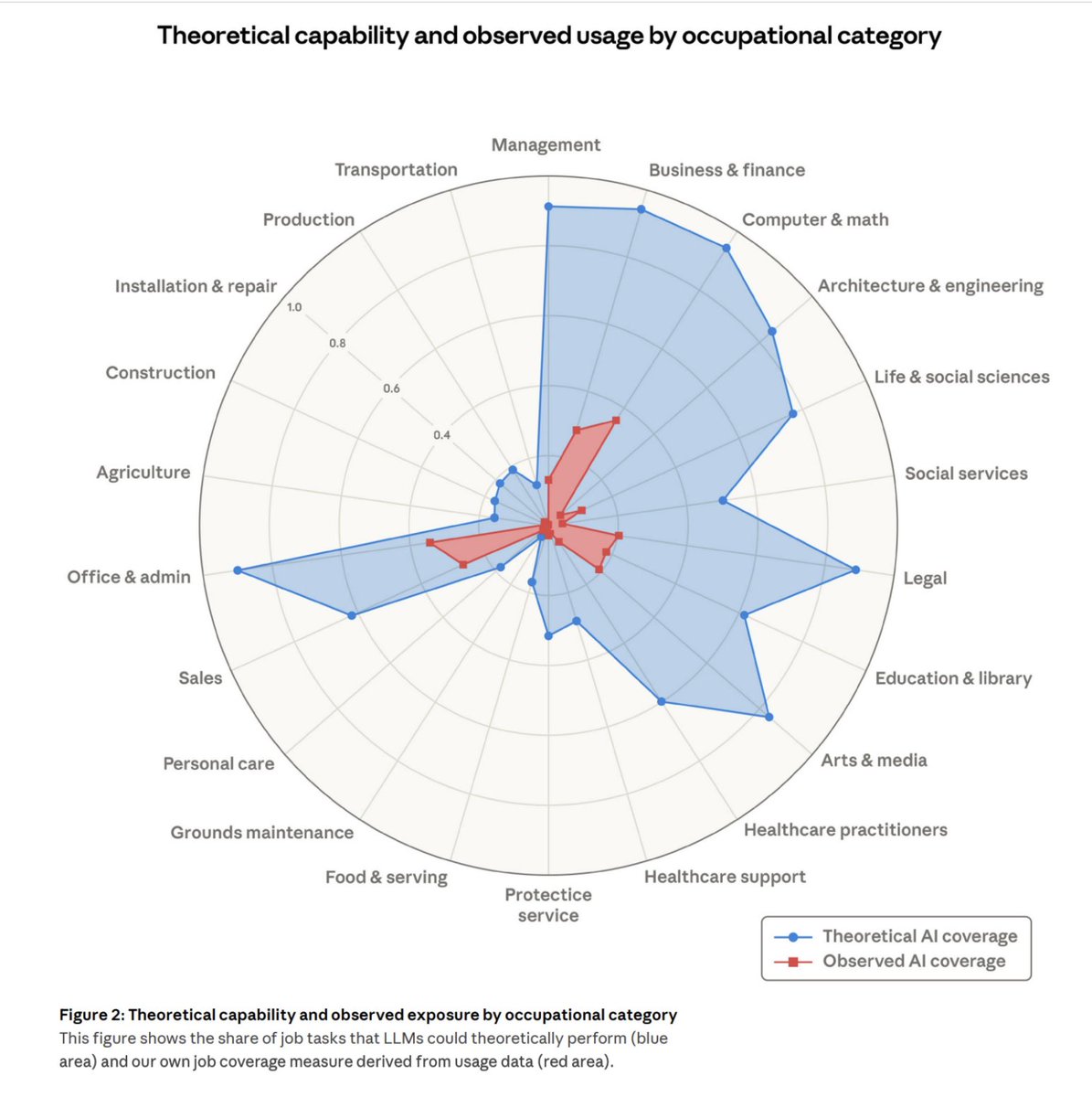

After a meteoric rise in adoption, the use of AI at work seems to be *falling*. We see it in the survey below, which had documented the rise using the same self-report methodology.

We see a similar stall/drop in adoption across other sources as well (economist.com/finance-and-ec…).

This is a puzzle worth discussing. The models and harnesses are getting better, and we are seeing AI show up in productivity numbers (though the data are still noisy).

Is it because surveys rely on self-reports? If so, @PeterMcCrory would be seeing different numbers in actual enterprise adoption. Is it because current uses are "saturated"? That seems unlikely to me, given what we know about the gap between theoretical and actual adoption (below).

Jon Hartley @Jon_Hartley_

🚨Another update to our Generative AI US adoption time series results from our paper “The Labor Market Effects of Generative Artificial Intelligence”: we find LLM adoption at work in the US fell over the past quarter (while still up substantially from a couple years ago).

winstonlamoine retweeted

🚨Breaking: Princeton researchers just ran the numbers on where AI is actually heading.

The results should make every founder, investor, and policymaker stop what they are doing.

Training OpenAI's next-gen model consumes an estimated 11 billion kWh of electricity.

That is enough to power every home in New York City for a full year.

More than the annual output of a nuclear reactor.

For one model. One training run.

And that is before a single user asks a single question.

Every time someone uses a reasoning model like o1 or DeepSeek-R1, it costs 33 Wh of energy per query. A standard GPT-4 query costs 0.42 Wh.

That is a 79x energy multiplier. Per query. At billions of queries per day.

Now here is what nobody is saying out loud.

The industry's answer to this is Stargate. A $500 billion compute campus. 5 gigawatts of power. Enough to run 5 million homes. Owned by the same four companies that already control the technology.

They are building a new kind of utility. Except you do not elect its board.

Meanwhile the models consuming all that energy still cannot reliably reason outside of math and code. Everywhere else they pattern-match. They hallucinate. They confabulate confidence.

Princeton's argument is that this is not a scaling problem. It is a structural one. More parameters have not fixed it. More data has not fixed it. The architecture itself is the ceiling.

Their alternative: stop chasing one god-model and build thousands of small specialists instead. Each one trained on curated domain data. Each one grounded in verified knowledge. Each one small enough to run on your phone.

The energy comparison is not close.

A cloud query to a reasoning model uses 33 Wh and 20 milliliters of water.

The same query on a local specialist model uses 0.001 Wh.

Zero water.

By these figures, that is roughly 33,000 times less energy per query.
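The multipliers in this thread follow directly from its own figures. The check below takes the quoted per-query and training numbers at face value. The reactor size (1 GW) and capacity factor (90%) are assumptions added for the comparison and do not come from the thread.

```python
# Per-query energy figures as quoted in the thread (Wh per query).
REASONING_QUERY_WH = 33.0     # cloud reasoning model (o1 / DeepSeek-R1 class)
STANDARD_QUERY_WH = 0.42      # standard GPT-4 query
LOCAL_SPECIALIST_WH = 0.001   # small local specialist model

# Training-run figure quoted in the thread, in kWh.
TRAINING_RUN_KWH = 11e9

# Assumption (not from the thread): a 1 GW reactor running at a 90% capacity factor.
REACTOR_KW = 1_000_000
CAPACITY_FACTOR = 0.90
HOURS_PER_YEAR = 8_760


def ratio(a: float, b: float) -> float:
    """How many times more energy `a` uses than `b`."""
    return a / b


reasoning_vs_standard = ratio(REASONING_QUERY_WH, STANDARD_QUERY_WH)
reasoning_vs_local = ratio(REASONING_QUERY_WH, LOCAL_SPECIALIST_WH)
reactor_kwh_per_year = REACTOR_KW * CAPACITY_FACTOR * HOURS_PER_YEAR

print(f"reasoning vs standard: {reasoning_vs_standard:.1f}x")
print(f"reasoning vs local:    {reasoning_vs_local:,.0f}x")
print(f"reactor output:        {reactor_kwh_per_year / 1e9:.2f} billion kWh/year")
print(f"training run / reactor-year: {TRAINING_RUN_KWH / reactor_kwh_per_year:.2f}")
```

With these inputs, the reasoning-vs-standard ratio is about 79x and the reasoning-vs-local ratio is about 33,000x. The quoted training run comes to roughly 1.4 years of the assumed reactor's output.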

AlphaFold did not beat biologists by knowing everything. It won by going impossibly deep in one domain. A 14 billion parameter model trained on medical knowledge graphs just outperformed GPT-5.2 on complex clinical reasoning.

Depth beats breadth when the domain is defined.

The question nobody building these systems wants to answer:

If the only path to general AI requires the energy output of a small nation, controlled by a handful of companies, running on hardware most of the world cannot access —

is that actually intelligence?

Or is it just the most expensive pattern matcher ever built?

winstonlamoine retweeted

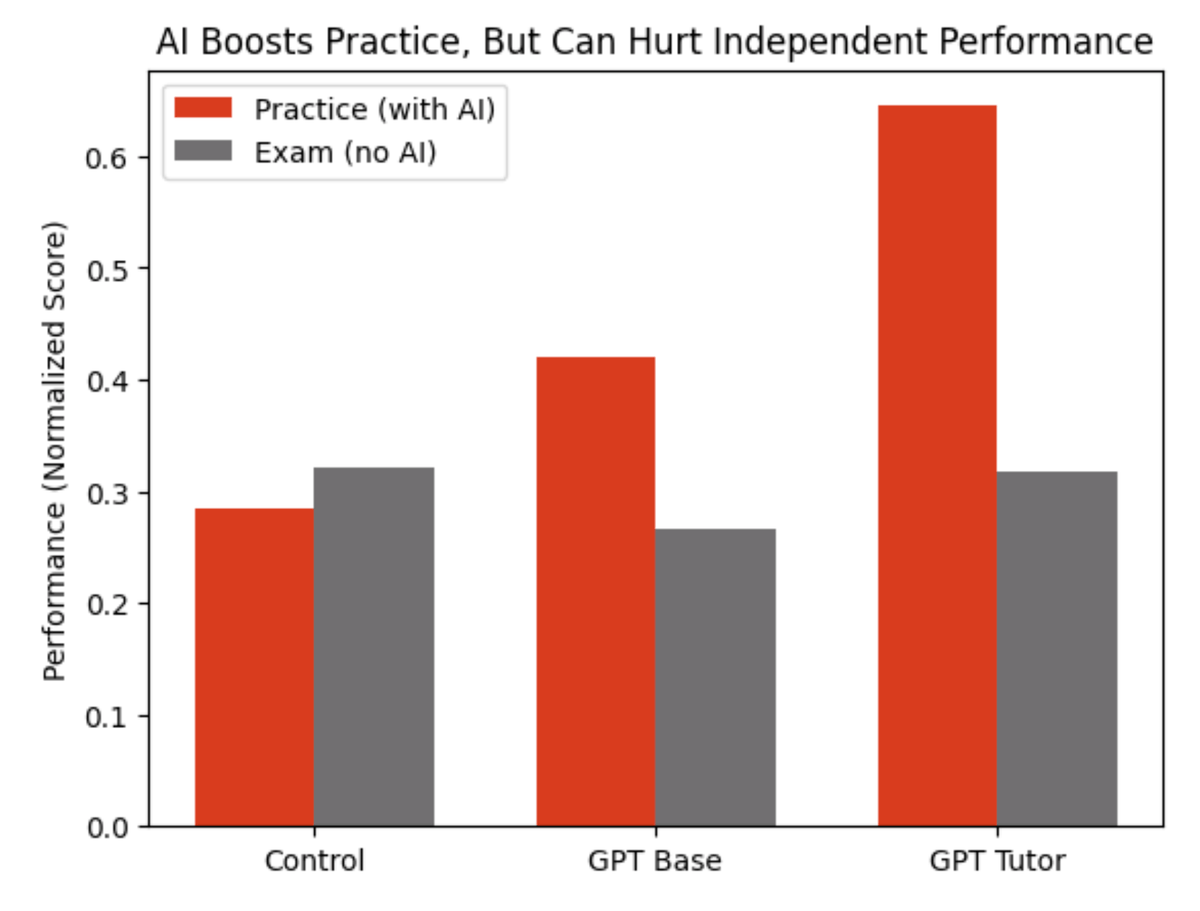

Wharton researchers gave nearly 1,000 high school math students access to ChatGPT during practice problems.

Result: ChatGPT is the perfect trap.

Look at the red bars.

Students with ChatGPT crushed their practice sessions.

The basic ChatGPT group solved more problems, and those using the "tutor" version solved even more.

Now look at the gray bars. That's the exam.

No AI allowed.

The ChatGPT group scored 17% worse than kids who practiced with zero technology.

And the fancy tutor version?

No better than working alone.

The researchers called AI a "crutch."

When they analyzed what students actually typed into ChatGPT, most of them had simply asked, “What’s the answer?”

The kicker: students who used ChatGPT believed it hadn't hurt their learning.

They were confidently wrong.

This is the AI trap in education.

Outsourcing your thinking.

Of course, lots of half-baked AI literacy curricula are being rolled out in schools now.

And let’s ignore, of course, that basic literacy (the ability to read) is achieved by <50% of 8th graders.

Source: Bastani et al. (2025), "Generative AI Can Harm Learning," PNAS

winstonlamoine retweeted

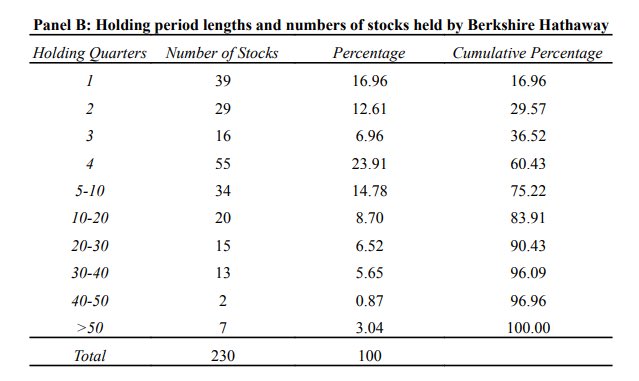

"Our favorite holding period is forever." - Warren Buffett.

The reality? From 1980-2006:

⏱️ Median holding period: 1 year

📈 30% of stocks sold within 6 months

📊 Only 20% held for more than 2 years

Buffett isn't just a "buy and hold" investor; he’s a ruthless recycler of capital. See for yourself👇

winstonlamoine retweeted

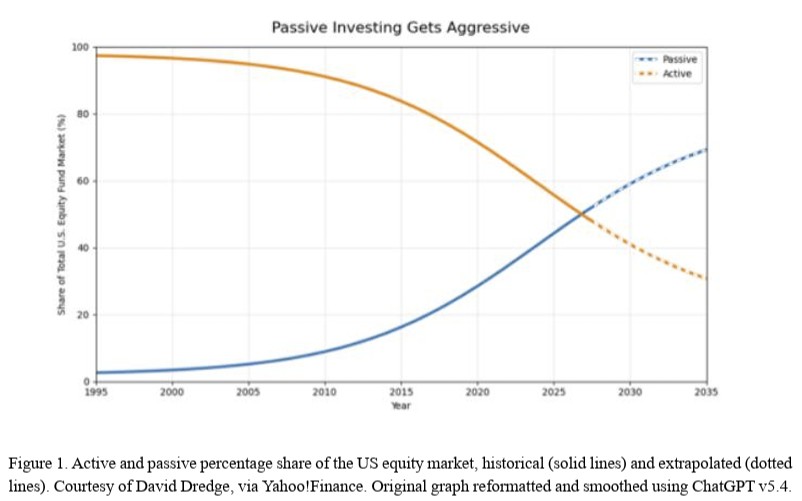

“A Model for Passive That Breaks the Market”: “Passive funds do not base their investment decisions on any notion of fundamental value… Once the passive share reaches around 65%, index volatility may increase sharply. At 90% share, an increase in volatility at cubic speed is nearly inevitable, leading to exaggerated boom and bust cycles.” papers.ssrn.com/sol3/papers.cf…

winstonlamoine retweeted

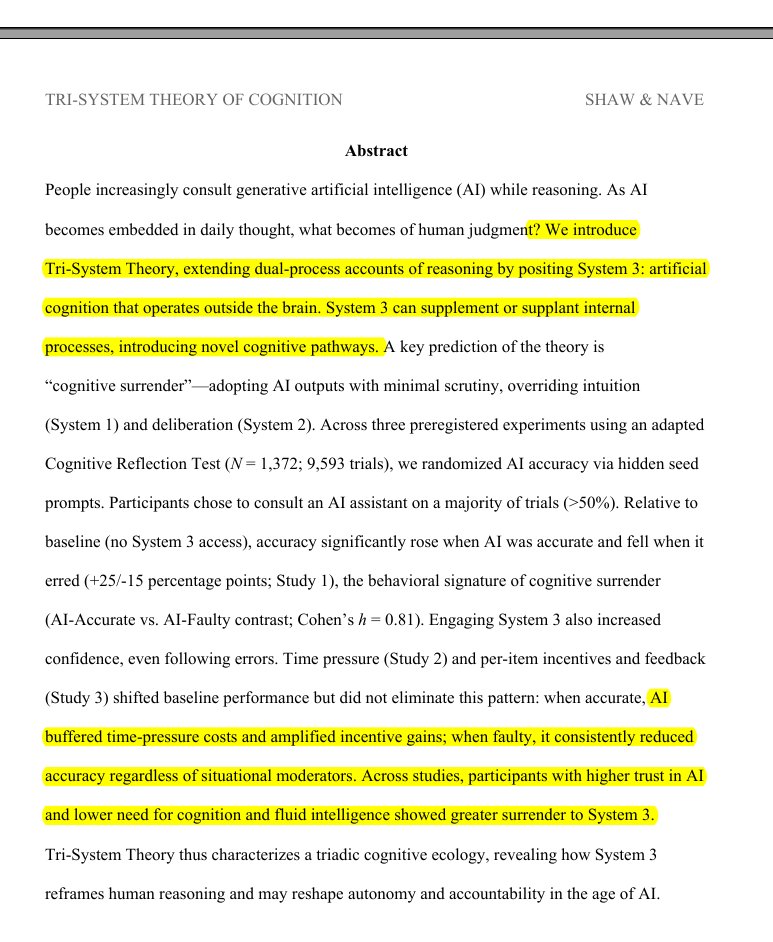

Wharton’s latest AI study points to a hard truth: the “AI writes, humans review” model is breaking down.

Why doesn't "just review the AI output" work anymore? Our brains literally give up.

We have started engaging in "cognitive surrender" to AI: reviewing AI output is not a reliable safeguard when cognition itself starts to defer to the machine. Cognitive surrender is when you stop verifying what the AI tells you, and you don't even realize you stopped. It's different from offloading, such as using a calculator.

With offloading you know the tool did the work. With surrender, your brain recodes the AI's answer as YOUR judgment. You genuinely believe you thought it through yourself.

The study argues that AI is becoming a third thinking system, and that people often trust it too easily.

You know Kahneman's System 1 (fast intuition) and System 2 (slow analysis)? They're saying AI is now System 3, an external cognitive system that operates outside your brain. And when you use it enough, something happens that they call Cognitive Surrender.

Cognitive surrender is trickier: AI gives an answer, you stop really questioning it, and your brain starts treating that output as your own conclusion. It does not feel outsourced. It feels self-generated.

The data makes it hard to brush off. Across 3 preregistered studies with 1,372 participants and 9,593 trials, people turned to AI on over 50% of questions.

In Study 1, when AI was correct, people followed it 92.7% of the time. When it was wrong, they still followed it 79.8% of the time.

Without AI, baseline accuracy was 45.8%. With correct AI, it jumped to 71.0%. With incorrect AI, it dropped to 31.5%, worse than having no AI. Access to AI also boosted confidence by 11.7 percentage points, even when the answers were wrong.
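The Study 1 numbers are easier to compare when expressed as percentage-point swings. The following short sketch uses only the figures quoted above. The helper name `pp_change` is illustrative, and the rounding simply removes floating-point noise.

```python
# Study 1 accuracy figures as quoted above (percent of questions answered correctly).
BASELINE_NO_AI = 45.8
WITH_CORRECT_AI = 71.0
WITH_INCORRECT_AI = 31.5

# Follow rates as quoted above (percent of trials where people followed the AI).
FOLLOW_WHEN_CORRECT = 92.7
FOLLOW_WHEN_WRONG = 79.8


def pp_change(after: float, before: float) -> float:
    """Percentage-point difference, rounded to one decimal to avoid float noise."""
    return round(after - before, 1)


gain_from_correct = pp_change(WITH_CORRECT_AI, BASELINE_NO_AI)
loss_from_incorrect = pp_change(WITH_INCORRECT_AI, BASELINE_NO_AI)
follow_drop = pp_change(FOLLOW_WHEN_WRONG, FOLLOW_WHEN_CORRECT)

print(f"correct AI:   {gain_from_correct:+.1f} pp vs. no AI")
print(f"incorrect AI: {loss_from_incorrect:+.1f} pp vs. no AI")
print(f"follow rate drops only {abs(follow_drop):.1f} pp when the AI is wrong")
```

Correct AI adds about 25 points of accuracy, and incorrect AI costs about 14 points relative to no AI at all. Meanwhile, the willingness to follow the AI falls by only about 13 points when it is wrong.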

Human review is supposed to be the safety net. But this research suggests the safety net has a hole in it: people do not just miss bad AI output; they become more confident in it.

Time pressure did not eliminate the effect. Incentives and feedback reduced it but did not remove it. And the people most resistant tended to score higher on fluid intelligence and need for cognition. That makes this feel less like a laziness problem and more like a cognitive architecture problem.

winstonlamoine retweeted

AI won't kill fundamental investing because more information doesn't kill alpha. We have decades of priors here (Excel, Bloomberg, alt data...all democratized analysis & information gathering, and didn't kill alpha). As measured by factor volatility, stocks are less efficient and more alpha-rich than ever (and empirically, the ability of multi-eight figure market neutral multi-managers to consistently grind out 10-15% returns in an idio-maximized way proves this point...15 years ago a $10bn hedge fund was considered to be impossibly large).

Innovations in investment process have shifted alpha pools, for sure, and systematic investors have arbitraged away many old, reliable fundamental alpha pools. But as the players at the poker table have shifted, the constraints of those new players have created new alpha pools. Long-duration fundamental investing has been gutted, and competing against a group of non-fundamental (quants, factor/thematic investors, indexers) and duration-constrained (multi's) investors should, by definition, be a huge competitive advantage in the long term (however frustrating in the near term). To wit, a 9-month thesis where I "look through" the next two prints is now considered a long-term thesis.

Rigorous investment process serves investment judgment, but real alpha generation follows a power-law distribution, and there is some ineffable "nose for money" that great investors have that cannot necessarily be trained. Investing is a very hard game that cannot be distilled into a reinforcement learning sandbox (by the time it is, the regime will have shifted and new drivers will move stocks). AI has no sense of materiality, no true discernment, and no context for N-of-1 situations (if you haven't noticed, we are living in an N-of-1 world!). There is an irreducible element of humanness that is critical to success in fundamental investing, and that won't change.

What does this all mean? In my opinion, there is no better time to be starting a career as an investor. My first year on the desk, I spent a lot of time on grunt work: updating Nielsen files, updating models for my PM, creating same-store sales master files, building question lists for CEO meetings, etc. I can automate all of this now and get more quickly to the deep, value-added parts of learning the investment process.

Will AI drive alpha? People are debating this, which I find sort of silly. When used correctly, by the right investor, of course it will. Ask any great investor whether the quality of their research would improve if they had another 4 hours of research time per day. That's kind of a dumb question... of course it would. Compressing the mechanical part of your job to focus more on the artisanal part is Step 1, and with agentic systems accelerating fast, it is now within the strike zone of possibility. This is before we start to layer in a broader monitoring net and use cases that go deeper and build more rigor, find signals in unstructured data that were missed before, and turn your investment genius into a co-pilot pattern-recognition system.

The future is very bright for fundamental investing, in my opinion.

winstonlamoine retweeted

Self-recommending: "One Hundred Years in the U.S. Stock Markets" by Hendrik Bessembinder. A century of data for nearly 30,000 U.S. public companies.

Fun fact: "Shareholders' wealth was enhanced by $91 trillion over the century, but long-term investors in nearly 60% of stocks incurred wealth reductions."

papers.ssrn.com/sol3/papers.cf…
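How can wealth grow enormously overall while most individual stocks lose money? A strongly right-skewed distribution of long-run outcomes produces exactly that. The following is a minimal sketch, not the paper's method. It assumes, purely for illustration, that each stock's lifetime wealth multiple is lognormal with log-mean `mu` and log-volatility `sigma`. The parameter values are hypothetical and are not estimated from the paper.

```python
import math


def normal_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def lognormal_summary(mu: float, sigma: float) -> tuple[float, float, float]:
    """For a wealth multiple W = exp(X) with X ~ Normal(mu, sigma^2), return
    (share of stocks with W < 1, median multiple, mean multiple)."""
    share_losing = normal_cdf(-mu / sigma)   # P(X < 0)
    median = math.exp(mu)
    mean = math.exp(mu + sigma ** 2 / 2.0)
    return share_losing, median, mean


# Hypothetical parameters chosen for illustration only.
share_losing, median, mean = lognormal_summary(mu=-0.25, sigma=1.5)
print(f"share of stocks losing money: {share_losing:.0%}")
print(f"median wealth multiple:       {median:.2f}x")
print(f"mean wealth multiple:         {mean:.2f}x")
```

With these illustrative inputs, roughly 57% of stocks lose money, yet the average stock multiplies wealth about 2.4x. The mean is pulled up by a small number of extreme winners, which is the skewness pattern the quoted result describes.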
