Post-scarcity society

it's settled.. 3x in go for 1 week

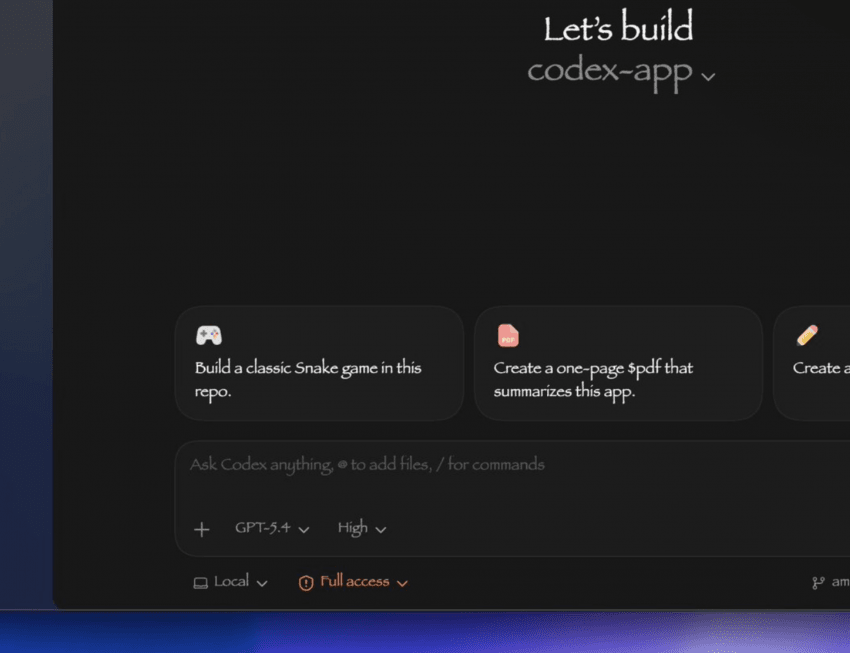

I built a new plugin! You can now trigger Codex from Claude Code! Use the Codex plugin for Claude Code to delegate tasks to Codex or have Codex review your changes using your ChatGPT subscription. Start by installing the plugin: github.com/openai/codex-p…

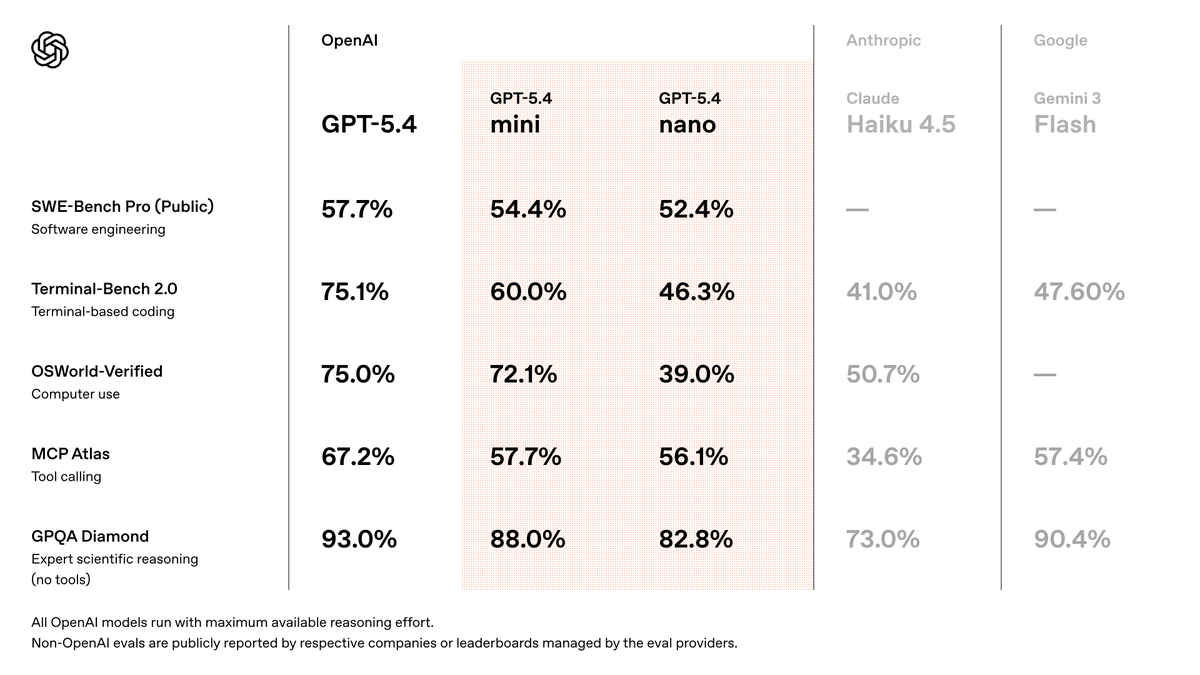

GPT-5.4 mini is available today in ChatGPT, Codex, and the API. Optimized for coding, computer use, multimodal understanding, and subagents. And it’s 2x faster than GPT-5 mini. openai.com/index/introduc…

OpenAI is going to put the open back into open source.

Yesterday we finished the formalization of Erdős Problem 392 as part of the PNT+ project. And it really drove home for me just how far we still are from math being “solved” by AI.

There was one task left. It was marked “small.” It had been claimed, then unclaimed a few weeks later after little progress (this happens all the time: someone thinks they’ll have more time, life intervenes, etc.). It sat in the middle of a 3000+ line proof. Supposedly just a stitching together of some already-proved elementary lemmas. So I said, sure, I’ll take the last task and get us over the finish line.

I handed it to Claude 4.6, thinking it would finish in 20 minutes. It spun. And spun. Going in circles, unable to make the argument work. So I handed it to GPT-5.3 Pro. It also spun, just in a different loop of confusion.

At that point, having claimed the task, my pride was on the line. So I rolled up my sleeves and actually had to figure out what the hell was going on. It turned out we needed to slightly tweak the initial parameters (1000 lines above), modify the statement of the main lemma I was supposed to prove, and then repair all the downstream consequences 1000 lines later. It took two days. In the end it was ~700 lines, split roughly a third each between human, Claude, and GPT.

It felt a bit like the transcontinental railroad: construction from both sides was supposed to meet in the middle, but the tracks were off by 200 miles.

Maybe I would’ve gotten better results from the beginning by asking the AI to fix the entire 3000-line file. But a big, complicated file with shifting dependencies may simply be too much for even the largest models today. It didn’t help that earlier task-solvers had run into off-by-one bugs and updated the formal lemma statements without updating the blueprint. So by the time I got there, it was a mess. But we got through it.

And if you want a concrete data point on where we are with autonomous mathematics, especially in Lean, this is one. I’m very bullish overall on Math+AI. But there’s still a LOT left to do.