Śrêyāśḥ Dësḥmùkḥ 🚀

7.4K posts

@xegression

Quantifying Uncertainty 🎯 Stats · Econometrics · Demography PhD Fellow · Decision Sciences

"If you're working non-stop all the time you will burn out." How do you keep a healthy work-life balance as a scientist? Hear 2009 chemistry laureate Venki Ramakrishnan share his best advice on how to achieve a good work-life balance. #NobelPrize

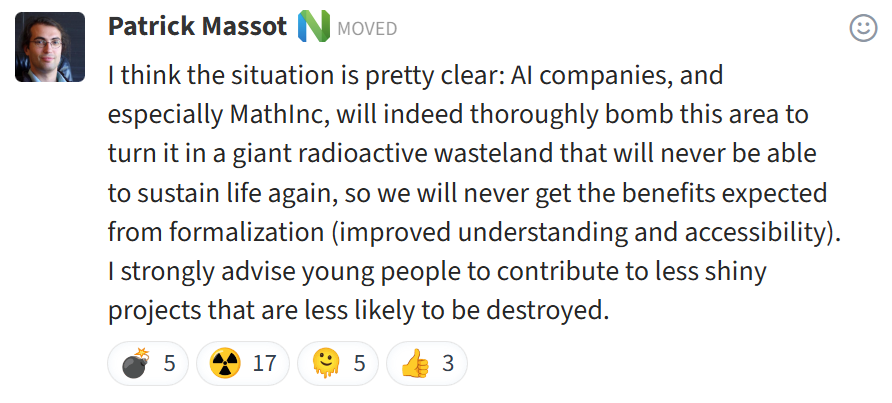

After the apparently amazing announcement by @mathematics_inc on the formalization of a major recent Fields Medal-winning theorem, I had no idea how pissed the math-formalization community is. Very worrying discussions by some of the leaders/founders of Lean's mathlib. cc @ChrSzegedy

Hit me with the craziest Mumbai history facts you know.

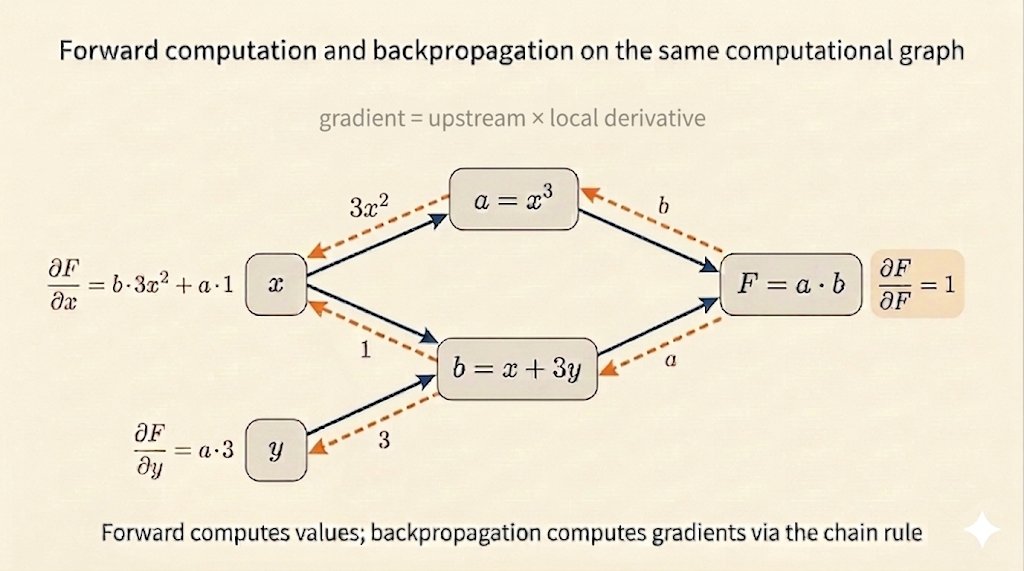

Modern machine learning is basically playing legos with these: