Florian Brand

34.2K posts

Florian Brand

@xeophon

evals @PrimeIntellect | open models @interconnectsai

Latest open artifacts (#21): Open model bonanza! Gemma 4, DeepSeek V4, Kimi K2.6, MiMo 2.5, GLM-5.1 & others. On CAISI's V4 assessment. An eventful month with one flagship release after another interconnects.ai/p/latest-open-…

I think it would be great if people were upfront about declaring their own understanding of a topic / their pull request. Now that everybody can talk confident with their clanker it becomes way too hard to understand if they knew what they were doing when they prompted it :(

People freaking out over my AI spend. What nobody sees: Part of what excites me so much about working on OpenClaw is that I'm trying to answer the question: How would we build software in the future if tokens don't matter? We constant run ~100 codex in the cloud, reviewing every PR, every issue. If a fix on main lands, @clawsweeper will eventually find that 6 month old issue and close it with an exact reference. We run codex on every commit to review for security issues (as it's far too easy to miss). We run codex to de-duplicate issues and find clusters and send reports for the most pressing issues. We have agents that can recreate complex setups, spin up ephemeral crabbox.sh machines, log into e.g. Telegram, make a video and post before/after fix on the PR. There's codex that watch new issues and - if it fits our documented vision well, automatically create a PR of it. (that then another codex reviews) We have codex running that scans comments for spam and blocks people. We have codex instances running that verify performance benchmarks and report regressions into Discord. We have agents that listen on our meetings and proactively start work, e.g. create PRs when we discuss new features while we discuss them. We build clawpatch.ai to split all our projects into functional units to review and find bugs and regresssions. We do the same split for security with Vercel's deepsec and Codex Security to find regressions and vulnerabilities. All that automation allows us to run this project extremely lean.

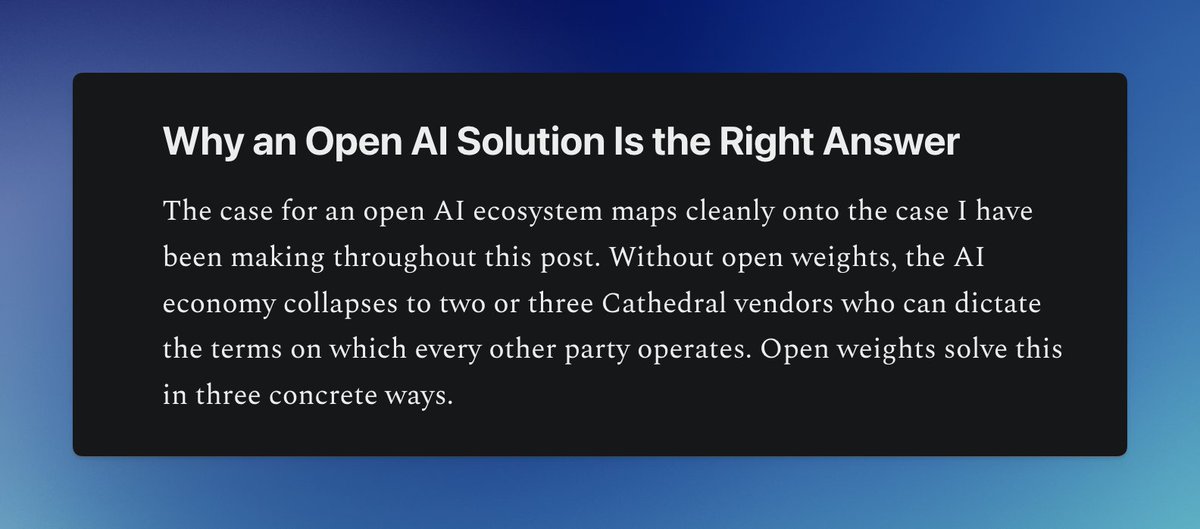

A new @bgurley blog post! I have been thinking about how sophisticated executives are using open source in super creative ways. Started writing this three years ago. Excited to finish it up and publish it! And with the new @p3institute brand. substack.com/home/post/p-19…