Xichen Pan

@xichen_pan

PhD Student @NYU_Courant, Researcher @metaai; Multimodal Generation | Prev: @MSFTResearch, @AlibabaGroup, @sjtu1896; More at https://t.co/yyS8q316AV

🚀 Excited to share V-Co, a diffusion model that jointly denoises pixels and pretrained semantic features (e.g., DINO).

We find a simple but effective recipe:
1️⃣ architecture matters a lot --> fully dual-stream JiT
2️⃣ CFG needs a better unconditional branch --> semantic-to-pixel masking for CFG
3️⃣ the best semantic supervision is hybrid --> perceptual-drifting hybrid loss
4️⃣ calibration is essential --> RMS-based feature rescaling

We conducted a systematic study on V-Co, which is highly competitive at a comparable scale, and outperforms JiT-G/16 (~2B, FID 1.82) with fewer training epochs. 🧵 👇
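For readers new to CFG (classifier-free guidance), here is a minimal sketch of the standard guidance rule only: the guided prediction extrapolates from the unconditional prediction toward the conditional one. The post does not spell out how V-Co's semantic-to-pixel masking produces the unconditional branch, so this sketch just takes that prediction as an input. The function name `cfg_combine` and the plain-list representation are illustrative assumptions, not V-Co code.

```python
from typing import List, Sequence


def cfg_combine(cond: Sequence[float], uncond: Sequence[float], scale: float) -> List[float]:
    """Standard classifier-free guidance: uncond + scale * (cond - uncond).

    `cond` and `uncond` stand in for a model's conditional and unconditional
    predictions (hypothetical flat lists here; real models use tensors).
    scale = 1.0 returns the conditional prediction, scale = 0.0 the unconditional one.
    """
    if len(cond) != len(uncond):
        raise ValueError("cond and uncond must have the same length")
    return [u + scale * (c - u) for c, u in zip(cond, uncond)]


# Toy values, chosen only to show the arithmetic.
guided = cfg_combine([1.0, 2.0], [0.0, 1.0], scale=2.0)
```

Because the output depends directly on the unconditional prediction, a better unconditional branch changes what guidance pushes away from, which may be why the recipe singles it out.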

Multimodal LLMs (MLLMs) excel at reasoning, layout understanding, and planning—yet in diffusion-based generation, they are often reduced to simple multimodal encoders. What if MLLMs could reason directly in latent space and guide diffusion generation with fine-grained, spatiotemporal control? 🤔 Introducing MetaCanvas 🎨 A lightweight framework that translates MLLM reasoning into structured spatiotemporal conditions for diffusion models. 🧵 👇

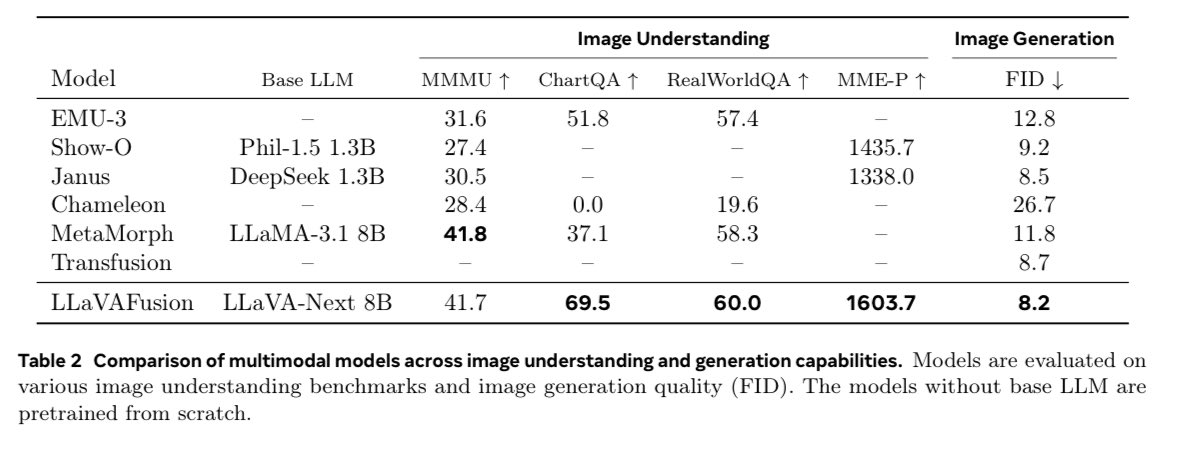

The code and instruction-tuning data for MetaQuery are now open-sourced!

Code: github.com/facebookresear…
Data: huggingface.co/collections/xc…

Two months ago, we released MetaQuery, a minimal training recipe for SOTA unified understanding and generation models. We showed that tuning a few learnable queries can transfer the world knowledge, strong reasoning, and in-context learning capabilities inherent in MLLMs to image generation.

With the training code now available, you can train MetaQuery yourself almost as easily as fine-tuning a diffusion model.

We have also open-sourced our 2.4M instruction-tuning dataset. Sourced from web corpora, it offers diverse supervision beyond copy-pasting and unlocks many exciting new capabilities.

Thanks @metaai for their support in making it open source!

We find that training unified multimodal understanding and generation models is so easy that you do not need to tune the MLLM at all. An MLLM's knowledge, reasoning, and in-context learning can be transferred from multimodal understanding (text output) to generation (pixel output) even when it is FROZEN!
