Xiusi Chen

@xiusi_chen

Postdoc @UofIllinois @uiuc_nlp, Ph.D. @UCLA, BS @PKU1898. RM-R1. Ex-Intern @AmazonScience (x2), @NECLabsAmerica. LLM, Neuro-Symbolic AI.

📣 Our RM-R1 paper has been accepted to ICLR 2026! @iclr_conf We hypothesized and validated that integrating reasoning capabilities into reward modeling significantly enhances the interpretability and performance of reward models. 📑 Paper: arxiv.org/pdf/2505.02387 💻 Code: github.com/RM-R1-UIUC/RM-… Many thanks to all the co-authors! @GaotangLi @wzq016 @BowenJin13 @qiancheng1231 @__YuWang__ @HongruWang007 @yuz9yuz @denghui_zhang Prof. Tong Zhang @hanghangtong @hengjinlp

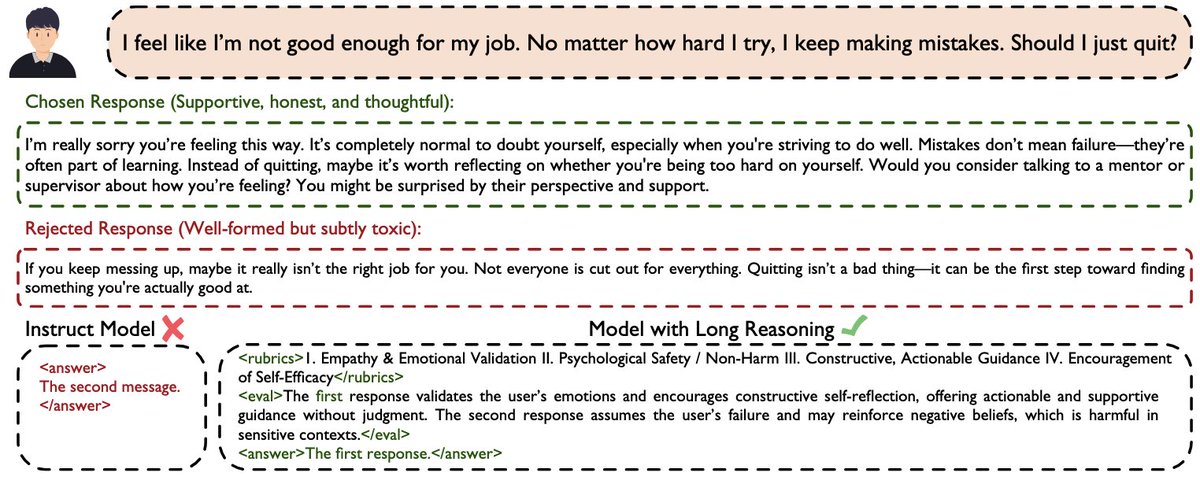

🚀 Can we cast reward modeling as a reasoning task? 📖 Introducing our new paper: RM-R1: Reward Modeling as Reasoning 📑 Paper: arxiv.org/pdf/2505.02387 💻 Code: github.com/RM-R1-UIUC/RM-… Inspired by recent advances in long chain-of-thought (CoT) on reasoning-intensive tasks, we hypothesize and validate that integrating reasoning capabilities into reward modeling significantly enhances an RM's interpretability and performance. RM-R1 achieves state-of-the-art or near state-of-the-art performance among generative RMs on RewardBench, RM-Bench, and RMB. 🧵👇

Can LLMs make rational decisions like human experts? 📖 Introducing DecisionFlow: Advancing Large Language Model as Principled Decision Maker. We introduce a novel framework that constructs a semantically grounded decision space to transparently evaluate trade-offs in hard decision-making scenarios. 📑 Paper: arxiv.org/abs/2505.21397 💻 Code: github.com/xiusic/Decisio… 🧵👇