For those in the niche of self-assembling structures, I hacked this simulator together: amdson.github.io/blog/crystals/

It's a CTMC (continuous-time Markov chain) over transitions between quasi-static states in crystal growth, much like kTAM (the kinetic Tile Assembly Model). And like kTAM, it can build pretty much anything. E.g. ->

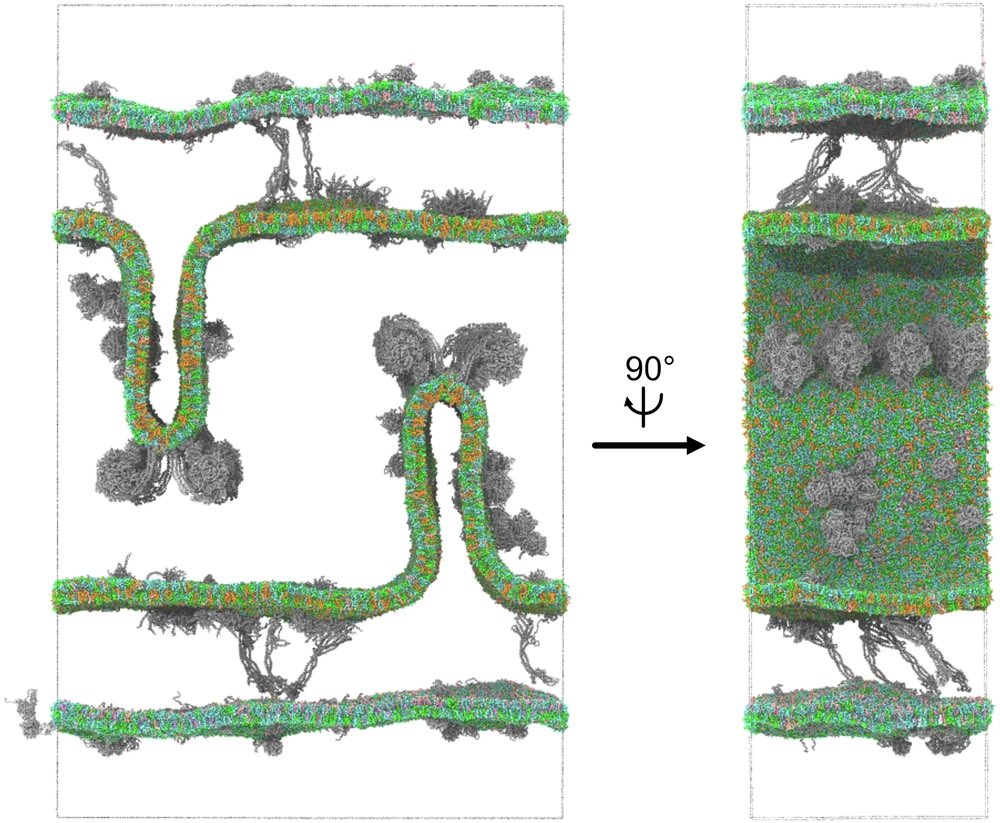
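
For anyone who hasn't met the term, here is a rough Python sketch of what a kTAM-style CTMC can look like. It is not the simulator's actual code. It assumes a single tile type on a square lattice with a fixed seed tile, uses the standard kTAM rate forms (attachment at `k_f * exp(-g_mc)`, detachment at `k_f * exp(-b * g_se)` for a tile held by `b` bonds), and picks events with a Gillespie step. The names `gillespie_step`, `event_rates`, `simulate`, `g_mc`, and `g_se`, as well as the default parameter values, are made up for illustration.

```python
import math
import random


def gillespie_step(rates, rng):
    """Sample the next CTMC event from {event: rate}; return (event, dt)."""
    total = sum(rates.values())
    dt = rng.expovariate(total)  # waiting time ~ Exponential(total rate)
    pick = rng.random() * total
    acc = 0.0
    for event, rate in rates.items():
        acc += rate
        if pick < acc:
            return event, dt
    return event, dt  # fallback for floating-point round-off


def neighbors(site):
    x, y = site
    return [(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)]


def event_rates(occupied, seed, g_mc, g_se, k_f=1.0):
    """Build the rate table for every possible attach/detach event."""
    rates = {}
    for site in occupied:
        bonds = sum(n in occupied for n in neighbors(site))
        if site != seed:  # assumption: the seed tile never detaches
            rates[("detach", site)] = k_f * math.exp(-bonds * g_se)
        for n in neighbors(site):
            if n not in occupied:
                # Each empty site next to the crystal gets one attach event.
                rates[("attach", n)] = k_f * math.exp(-g_mc)
    return rates


def simulate(steps, g_mc=8.0, g_se=5.0, rng_seed=0):
    """Run `steps` events; return the occupied sites and elapsed time."""
    rng = random.Random(rng_seed)
    seed = (0, 0)
    occupied = {seed}
    t = 0.0
    for _ in range(steps):
        rates = event_rates(occupied, seed, g_mc, g_se)
        (kind, site), dt = gillespie_step(rates, rng)
        t += dt
        if kind == "attach":
            occupied.add(site)
        else:
            # Simplification: fragments cut off from the seed are not removed.
            occupied.discard(site)
    return occupied, t


if __name__ == "__main__":
    crystal, elapsed = simulate(500)
    print(len(crystal), "tiles after simulated time", elapsed)
```

Recomputing the whole rate table at every step keeps the sketch short. A real simulator would more likely update only the rates near the site that changed.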
