Yash Singhal 🔥

491 posts

@yash_25log

Eng @ IFAI 🚀 | Ex-InteligenAI, CrossTower 👨‍💻 | Sharing backend, design & dev experiments ⚗️ | Hackathons 🏆 | 22 | ⚡ Helping devs grow

Delhi, IND · Joined May 2021

1.6K Following · 210 Followers

@kirat_tw @jarredsumner Jarred speedran ‘build startup → get acquired’ faster than Bun installs dependencies

@kirat_tw Reading it on Twitter hits different than experiencing it at 3AM in production. 😭🔥

@mehulmpt Bro didn’t just warn us, he basically posted the spoiler alert for the whole outage arc. 😂

Well this didn't take long

Mehul Mohan @mehulmpt

The X API is proxied by Cloudflare, so Cloudflare going down will take out X (anytime)

@arpit_bhayani Wild! How an amoeba explains range partitioning better than half the system design textbooks 😄

Range-based partitioning is like an amoeba.

You split when a partition gets hot, you merge when things cool down, and you move the partition across nodes (and transitively the data, if needed) when you want to grow beyond a single machine.

The best part of range-based partitioning is that it sits in the middle between hash and static approaches. It avoids the randomness of hash ownership and the heavy metadata burden of static ownership.

That's why, for stateful workloads, so many systems operating at scale prefer range-based ownership.
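The split / merge / move cycle described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how any particular database does it: `RangeTable`, `Partition`, and the node names are hypothetical, keys are plain strings, and "hot" and "cool" are decided by the caller rather than measured.

```python
from bisect import bisect_right
from dataclasses import dataclass, field


@dataclass
class Partition:
    start: str                                  # inclusive lower bound of the key range
    node: str                                   # hypothetical node name owning this range
    keys: list = field(default_factory=list)    # sorted keys stored in this range


class RangeTable:
    """Toy range-partitioned key set; partitions stay sorted by `start`."""

    def __init__(self, node="node-a"):
        # One partition covering everything: "" sorts before every string.
        self.parts = [Partition(start="", node=node)]

    def _index(self, key):
        # The owner is the last partition whose start is <= key.
        starts = [p.start for p in self.parts]
        return bisect_right(starts, key) - 1

    def put(self, key):
        part = self.parts[self._index(key)]
        pos = bisect_right(part.keys, key)
        if pos == 0 or part.keys[pos - 1] != key:   # skip duplicates
            part.keys.insert(pos, key)

    def owner(self, key):
        return self.parts[self._index(key)].node

    def split(self, i):
        """Split partition i at its median key (call this when it gets hot)."""
        part = self.parts[i]
        mid = len(part.keys) // 2
        if mid == 0:
            return  # fewer than two keys, nothing to split
        right = Partition(start=part.keys[mid], node=part.node, keys=part.keys[mid:])
        part.keys = part.keys[:mid]
        self.parts.insert(i + 1, right)

    def merge(self, i):
        """Fold partition i+1 back into partition i (call this when both cool down)."""
        right = self.parts.pop(i + 1)
        self.parts[i].keys.extend(right.keys)

    def move(self, i, node):
        """Reassign partition i to another node; a real system would also ship the data."""
        self.parts[i].node = node
```

The only metadata here is one `start` boundary and one owner per partition, which is the middle ground the tweet points at: lookups are a binary search over boundaries rather than a hash, and there is no per-key ownership map to maintain.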

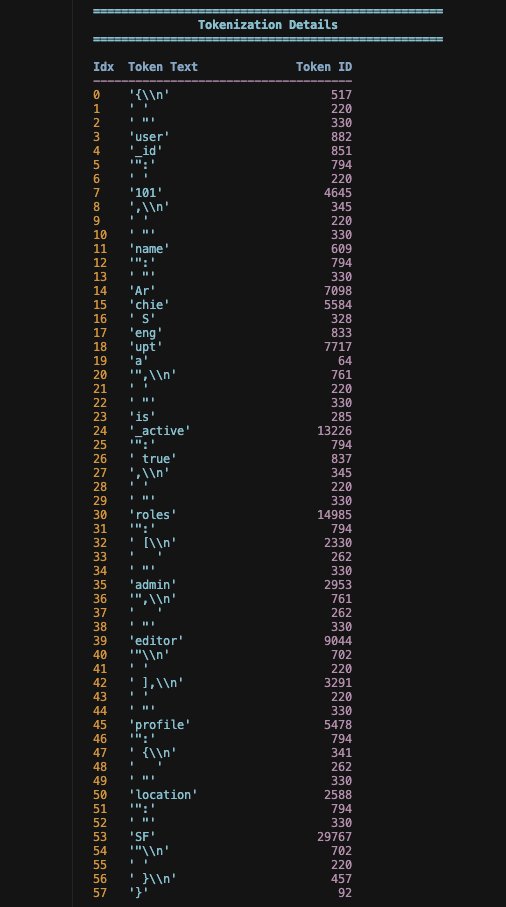

@archiexzzz Absolutely. JSON is a token tax - every { , : " } is money

English

JSON is a token-hungry parasitic dinosaur. If you look at openai/tiktoken, the pre-trained BPE vocabulary has most JSON structural characters - brackets, commas, quotes - as individual tokens in the vocabulary. This means even a small JSON can emit >1000 tokens, since every {, }, ", :, and , burns a separate token. Now imagine your use case: you're ingesting some massive JSON response from an MCP server tool, and you need to shove it all into your LLM for inference. Your cost just went through the roof.

I've been there. We kept running into inputs longer than a 1M-token context window, just inferring over these giant JSON strings. We ended up with all these hacky % operations on the token length just to figure out how many LLM calls we needed to digest the whole thing. It's a nightmare.
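For a sense of what that arithmetic looks like, here is a minimal sketch that replaces ad hoc `%` checks with a ceiling division. The function names and the `reserved_tokens` parameter (room kept for the prompt and the reply) are assumptions for illustration; the token counts would come from whatever tokenizer you already use.

```python
def plan_calls(total_tokens, context_limit, reserved_tokens=0):
    """Number of LLM calls needed to cover `total_tokens` of input."""
    budget = context_limit - reserved_tokens    # tokens usable for data per call
    if budget <= 0:
        raise ValueError("reserved_tokens must be smaller than context_limit")
    # Integer ceiling division: avoids the off-by-one that `//` plus `%` invites.
    return (total_tokens + budget - 1) // budget


def chunk_bounds(total_tokens, context_limit, reserved_tokens=0):
    """Half-open (start, end) token offsets, one pair per call."""
    budget = context_limit - reserved_tokens
    if budget <= 0:
        raise ValueError("reserved_tokens must be smaller than context_limit")
    return [
        (start, min(start + budget, total_tokens))
        for start in range(0, total_tokens, budget)
    ]
```

Note that cutting a JSON string at raw token offsets can land in the middle of an object, so in practice the boundaries would need to be snapped to record boundaries before sending.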

Your options are trash:

> either you throw away data, which is a hard no because you don't want data loss, or

> you accept that you're just going to burn more VC money because every provider charges you per token.

TOON is a decent alternative, but it's not perfectly accurate. The thing is, LLMs were pre-trained on a lot of JSON. You switch everything to TOON, and your accuracy takes a hit. It's a trade-off.

If you're ranking formats for accuracy, it's roughly:

Markdown-KV > XML > YAML > HTML > JSON > TOON

TOON is getting better, but as an AI engineer, the most optimized way to solve this right now is to figure out your personal trade-off between latency <> cost <> model accuracy. If your task is simple and doesn't live or die by accuracy, try TOON -> Run evals on a small dataset -> If the numbers look good, stick with it -> If they're bad, fall back to JSON or XML.

But really, the smart move is to think before the LLM call:

> Can you filter that JSON down to just the fields the LLM actually needs?

> Can you summarize big text blobs with a cheaper model first?

> Can you break the task into a conversation instead of one giant context dump?

That's where you win. The format is just one part of the puzzle.
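The first of those questions, filtering the JSON down to what the model needs, is the easiest to show. A minimal sketch, assuming the payload is a nested dict and the needed fields can be named as dotted paths; `pick`, `get_path`, and the sample record are hypothetical, and list traversal is left out for brevity.

```python
import json

_MISSING = object()  # sentinel so that a stored None still counts as present


def get_path(record, path):
    """Follow a dotted path like 'user.name' through nested dicts."""
    node = record
    for part in path.split("."):
        if not isinstance(node, dict) or part not in node:
            return _MISSING
        node = node[part]
    return node


def pick(record, paths):
    """Return a copy of `record` containing only the requested dotted paths."""
    out = {}
    for path in paths:
        value = get_path(record, path)
        if value is _MISSING:
            continue                      # silently skip fields that are absent
        *parents, leaf = path.split(".")
        dst = out
        for part in parents:
            dst = dst.setdefault(part, {})
        dst[leaf] = value
    return out


record = {
    "id": 7,
    "user": {"name": "Ada", "email": "ada@example.com", "avatar": "x" * 200},
    "debug": {"trace": [1, 2, 3]},
}
slim = pick(record, ["id", "user.name"])
# Compact separators drop the whitespace json.dumps adds by default.
payload = json.dumps(slim, separators=(",", ":"))
```

Here `payload` is `{"id":7,"user":{"name":"Ada"}}`, a fraction of the original record. How many tokens that saves depends on the tokenizer, so it is worth measuring on real responses before and after.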

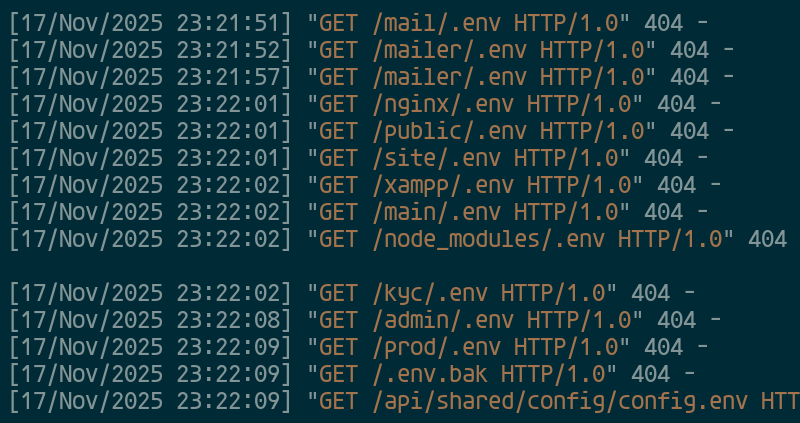

@LowLevelTweets Relax 😆 that’s just bots knocking on every door on the internet hoping someone left .env outside. Ours is locked.

The takeaway:

For global businesses, depending on a robust CDN isn't optional—it's survival. 🥷

A single point of failure at this scale creates a cascading crisis for the whole ecosystem. 🌋

Are we too centralized? What's the solution?

#Tech #Cloudflare #CDN #Cybersecurity #DevOps

6.

That’s the first half of the story.

Static → MVC → SPA → BFF

Next up in Part 2:

How SSR, SSG & React Server Components changed everything ⚡️

#frontend #webdev #nextjs #react #softwarearchitecture
