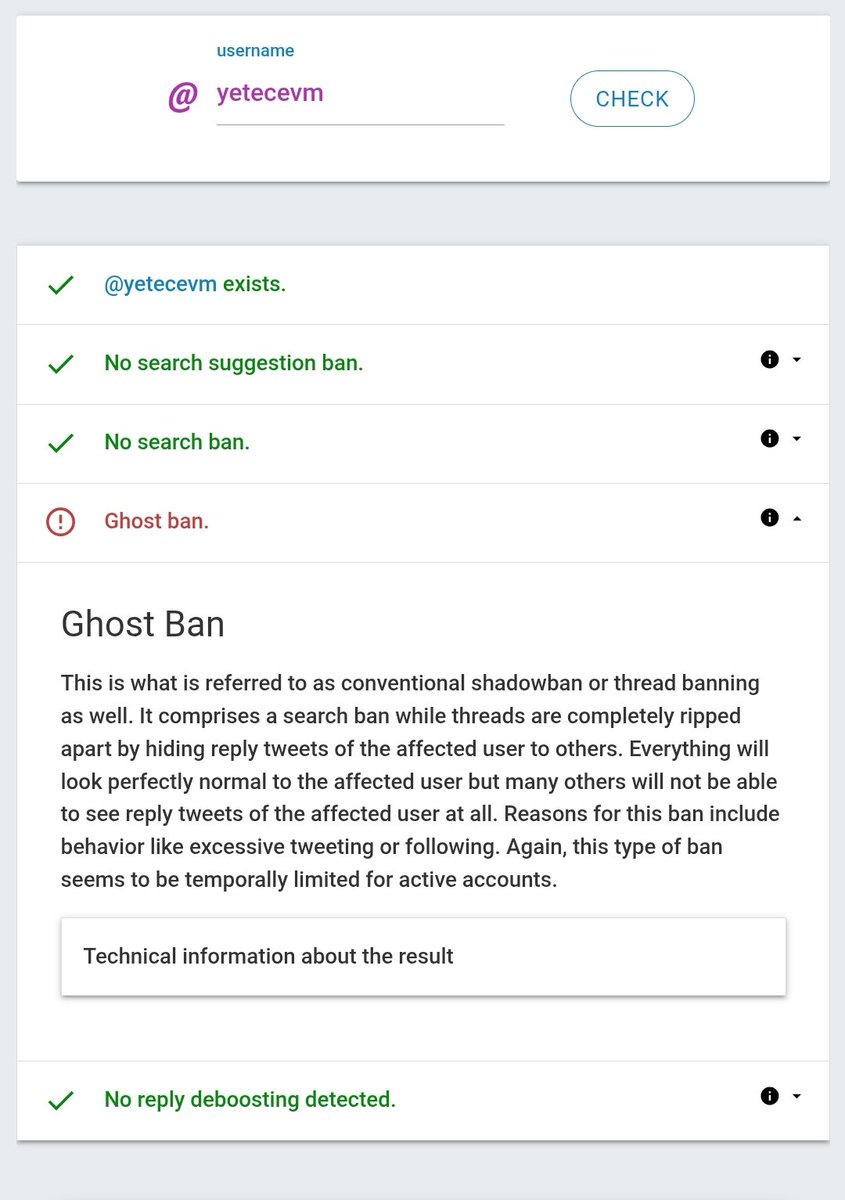

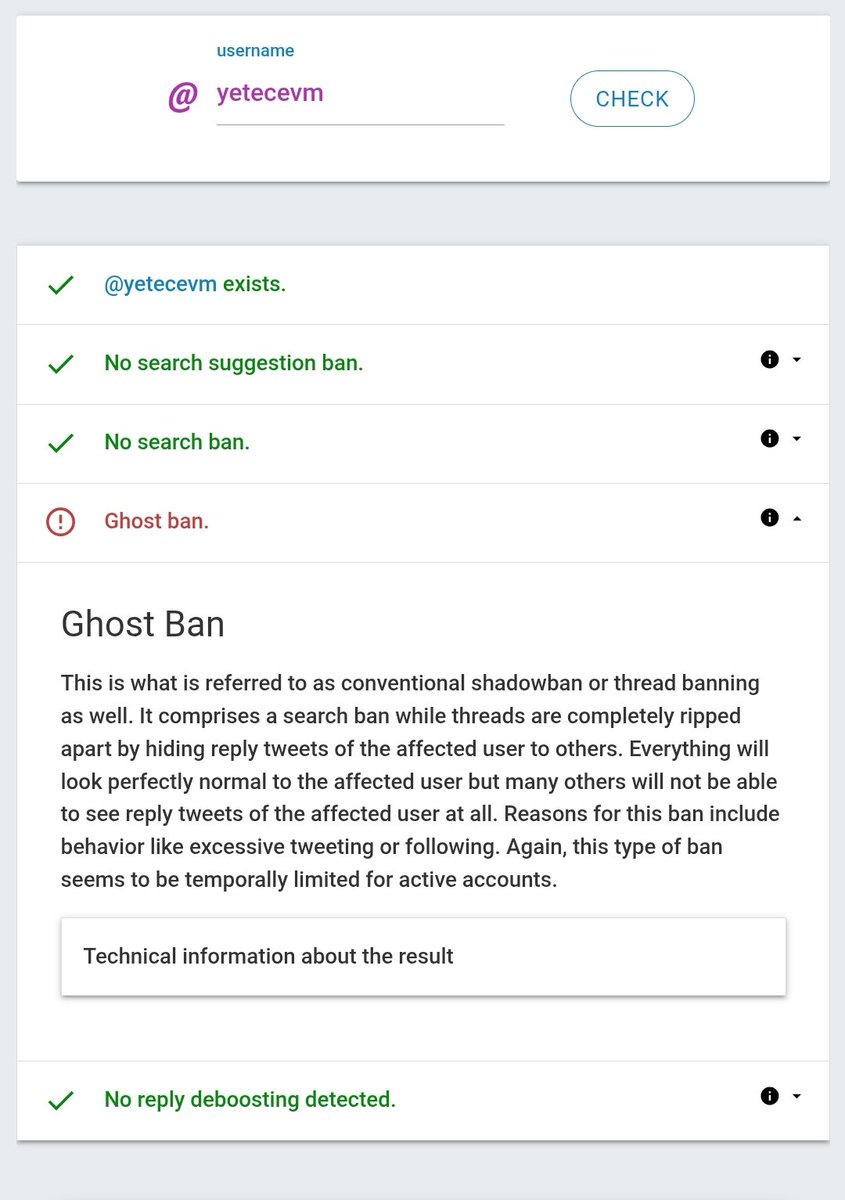

yetecevm (❖,❖)

175 posts

@yetecevm2

Second account of @yetecevm. Contributing to @ritualnet

Gritual & good morning, everyone. This is my artwork for today. Keep being consistent, because consistency is a necessary foundation for anything you do. @ritualnet @ritualfnd

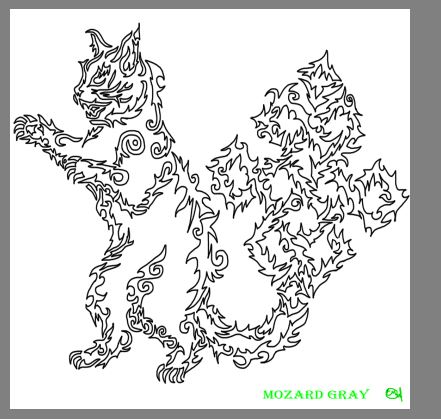

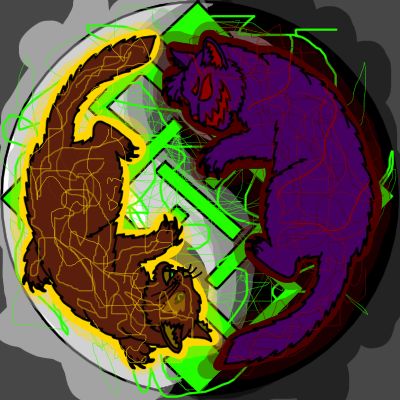

Gritual, good morning everyone. Enjoy your pre-dawn meal. This time I made PFP art for @SuperSkyzee. I hope you like it. @ritualfnd @ritualnet

Gritual fam, good morning. Enjoy your morning meal, those of you who are fasting, and happy Ramadan. This time I created a profile picture for @0xArees. Here's the result, I hope you like it. @ritualnet @ritualfnd

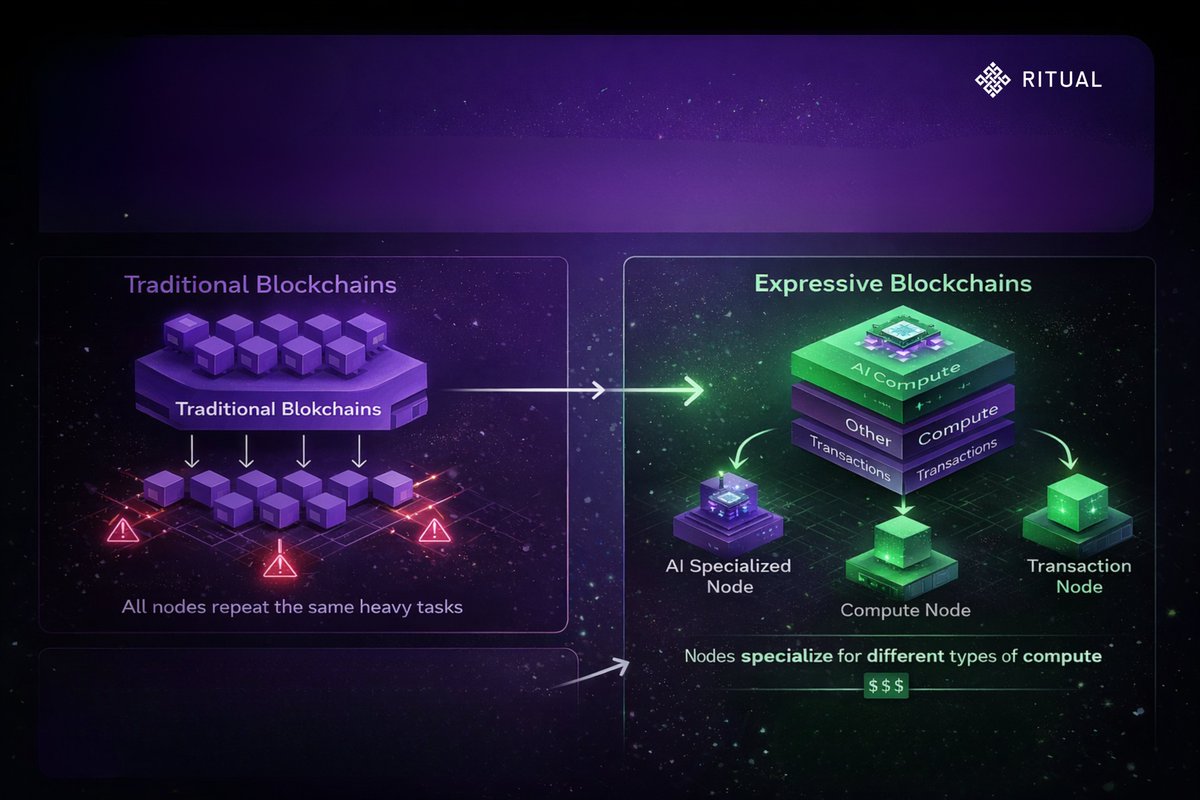

In Ep #5, we broke down how Ritual protects AI developers through Verifiable Provenance and on-chain monetization. The core idea is making sure your AI model doesn't get stolen. But securing the model itself isn't enough if the computational results can be manipulated mid-execution.

Imagine an on-chain AI model managing your multi-million-dollar DeFi portfolio. Suddenly, without warning, that AI commands a smart contract to dump all your assets into a single anonymous wallet. How do you know that was purely a logical algorithmic decision, and not the result of a rogue node runner manipulating the data to drain your funds?

Welcome to @Ritualnet Deep Dive #6: Modular Computational Integrity & Defeating Rogue Nodes.

In the Web2 world, AI is a secret black box. You feed it a prompt, you get an answer, but you never actually know the mathematical processes happening inside that massive corporate server. You are forced into blind trust. But in Web3, our core mantra is "Don't trust, verify." The problem is that re-verifying an AI computation involving billions of parameters directly on a blockchain is technically and financially impractical. If we delegate this AI processing to external off-chain nodes, what's the guarantee those nodes won't spoof the results for their own financial gain?

To solve this fatal manipulation risk, Ritual introduces a fundamental concept called Modular Computational Integrity. Instead of forcing the entire ecosystem and its developers into one rigid security standard, Ritual designed its architecture to be completely modular. This approach acknowledges reality: not all decentralized applications (dApps) require the same level of security, compute cost, or execution speed. Ritual gives developers the freedom to choose and stack the proving mechanisms that best fit their specific business models and use cases.

Within this modular integrity framework, dApp builders can secure their AI computations through three highly adaptable options.
The first option is Zero-Knowledge Proofs, for cryptographic guarantees. If a dApp handles high-value financial transactions, like an autonomous DeFi lending protocol or AI-controlled on-chain insurance, developers can opt for ZK proofs. A ZK proof gives mathematical evidence that the AI computation was executed exactly according to the original model, without leaking data and without room for logic manipulation. This is the strongest guarantee of the three, though it demands more time and compute cost.

The second option is Optimistic Fraud Proofs, leaning on game theory and economic guarantees. For use cases requiring high speed and low cost, like AI-driven NPC movements in Web3 games or SocialFi apps, developers can use the Optimistic model. The default assumption is that all network nodes act honestly. But if a node is caught lying or returning fake AI results, other validator nodes can submit a Fraud Proof to the main network. A valid fraud proof gets that rogue node slashed, and its crypto assets are confiscated.

The third option relies on Trusted Execution Environments (TEEs) as a hardware guarantee. Heavy computations can also prove their integrity by running inside highly isolated hardware safes (enclaves). TEEs send cryptographic attestations to the main network, proving that the AI code running inside that hardware was never tampered with, altered, or even snooped on by outsiders, not even by the physical owner of the server itself.

Why is this modular design so brilliant? The answer is simple: the landscape of AI and ZK cryptography is evolving extremely fast. If cryptographers invent a brand new integrity proving method next year that is significantly lighter and faster than current ZK or Optimistic proofs, the Ritual network won't need to tear down its entire blockchain architecture with a massive hard fork. The network can simply add this new proving module like a plug-and-play Lego brick.
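To make the plug-and-play idea concrete, here is a minimal sketch in Python. It is not Ritual's API: `ComputeResult`, `IntegrityRegistry`, and `make_toy_verifier` are hypothetical names, and the "verifiers" are toy string checks standing in for real ZK, fraud-proof, and TEE attestation verification. It only shows the shape of the design: each application picks a scheme by name, and a new scheme can be registered later without changing the registry.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


# Hypothetical type for illustration only; not part of any Ritual SDK.
@dataclass(frozen=True)
class ComputeResult:
    model_id: str
    output: str
    evidence: str  # opaque evidence; its meaning depends on the scheme


# A verifier returns True when the evidence matches the claimed result.
Verifier = Callable[[ComputeResult], bool]


class IntegrityRegistry:
    """Maps a scheme name to its verifier, so schemes are swappable."""

    def __init__(self) -> None:
        self._verifiers: Dict[str, Verifier] = {}

    def register(self, scheme: str, verifier: Verifier) -> None:
        self._verifiers[scheme] = verifier

    def schemes(self) -> List[str]:
        return sorted(self._verifiers)

    def verify(self, scheme: str, result: ComputeResult) -> bool:
        if scheme not in self._verifiers:
            raise KeyError(f"no verifier registered for scheme {scheme!r}")
        return self._verifiers[scheme](result)


def make_toy_verifier(prefix: str) -> Verifier:
    """Toy stand-in: accepts evidence shaped '<prefix>:<model_id>:<output>'."""
    def check(result: ComputeResult) -> bool:
        return result.evidence == f"{prefix}:{result.model_id}:{result.output}"
    return check


registry = IntegrityRegistry()
for scheme in ("zk", "optimistic", "tee"):
    registry.register(scheme, make_toy_verifier(scheme))

honest = ComputeResult("model-a", "hold", "tee:model-a:hold")
# A node that changes the output but reuses old evidence fails the check.
spoofed = ComputeResult("model-a", "dump-all", "tee:model-a:hold")

print(registry.verify("tee", honest))
print(registry.verify("tee", spoofed))

# A scheme invented later plugs in without touching IntegrityRegistry.
registry.register("future-scheme", make_toy_verifier("future"))
print(registry.schemes())
```

The point of the sketch is the registry boundary: callers depend only on `verify(scheme, result)`, so replacing or adding a proving method is a registration step and not a rewrite.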
With Modular Computational Integrity, Ritual isn't just securing on-chain AI for today's infrastructure. They are effectively building a decentralized compute ecosystem that resists technological obsolescence and is ready to absorb future innovation.

The series continues. Stay locked in. 🕯️

__________________________

📚 Source: Official Ritual Documentation ritualfoundation.org/docs/

@joshsimenhoff @Jez_Cryptoz @0xMadScientist @dunken9718 @cryptooflashh #Ritual #AI #Blockchain #Verify

gRitual

This is Part #42 of Drawing Ritual PFP 📷: @rizann67

How important is privacy-preserving LLM inference? The goal is simple: let a third party run the model without them being able to see your input. Approaches like Secure Multi-Party Computation (SMPC) offer a cryptographic solution by splitting the data across multiple servers, ensuring that no single party can reconstruct or read the full input.

Without privacy-preserving inference, AI will always rely on trust. And in centralized systems, trust always has limits. The future of AI isn't just about building smarter models. It's about whether your data remains yours when the model runs.

#gRitual @ritualnet @ritualfnd
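The "split the data across multiple servers" idea can be illustrated with additive secret sharing, one common building block of SMPC. This is a minimal sketch under stated assumptions: `PRIME`, `split_secret`, and `reconstruct` are names chosen for this example, and real privacy-preserving LLM inference needs far more than this (secure multiplication, non-linear layers, and so on).

```python
import secrets
from typing import List

# Illustrative field modulus (a Mersenne prime); an assumption of this sketch.
PRIME = 2**61 - 1


def split_secret(value: int, n_servers: int) -> List[int]:
    """Split value into n additive shares modulo PRIME."""
    if n_servers < 2:
        raise ValueError("need at least two servers")
    if not 0 <= value < PRIME:
        raise ValueError("value must be in [0, PRIME)")
    # The first n-1 shares are uniformly random; the last one fixes the sum.
    shares = [secrets.randbelow(PRIME) for _ in range(n_servers - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def reconstruct(shares: List[int]) -> int:
    """Recover the secret; this requires every share."""
    return sum(shares) % PRIME


# Each server holds one share of each input and never sees the input itself.
a_shares = split_secret(10, 3)
b_shares = split_secret(32, 3)

# Servers add their own shares locally; only the combined result is revealed.
sum_shares = [(a + b) % PRIME for a, b in zip(a_shares, b_shares)]
print(reconstruct(sum_shares))
```

Any subset of fewer than all shares is uniformly random, which is why no single server can read the input on its own.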

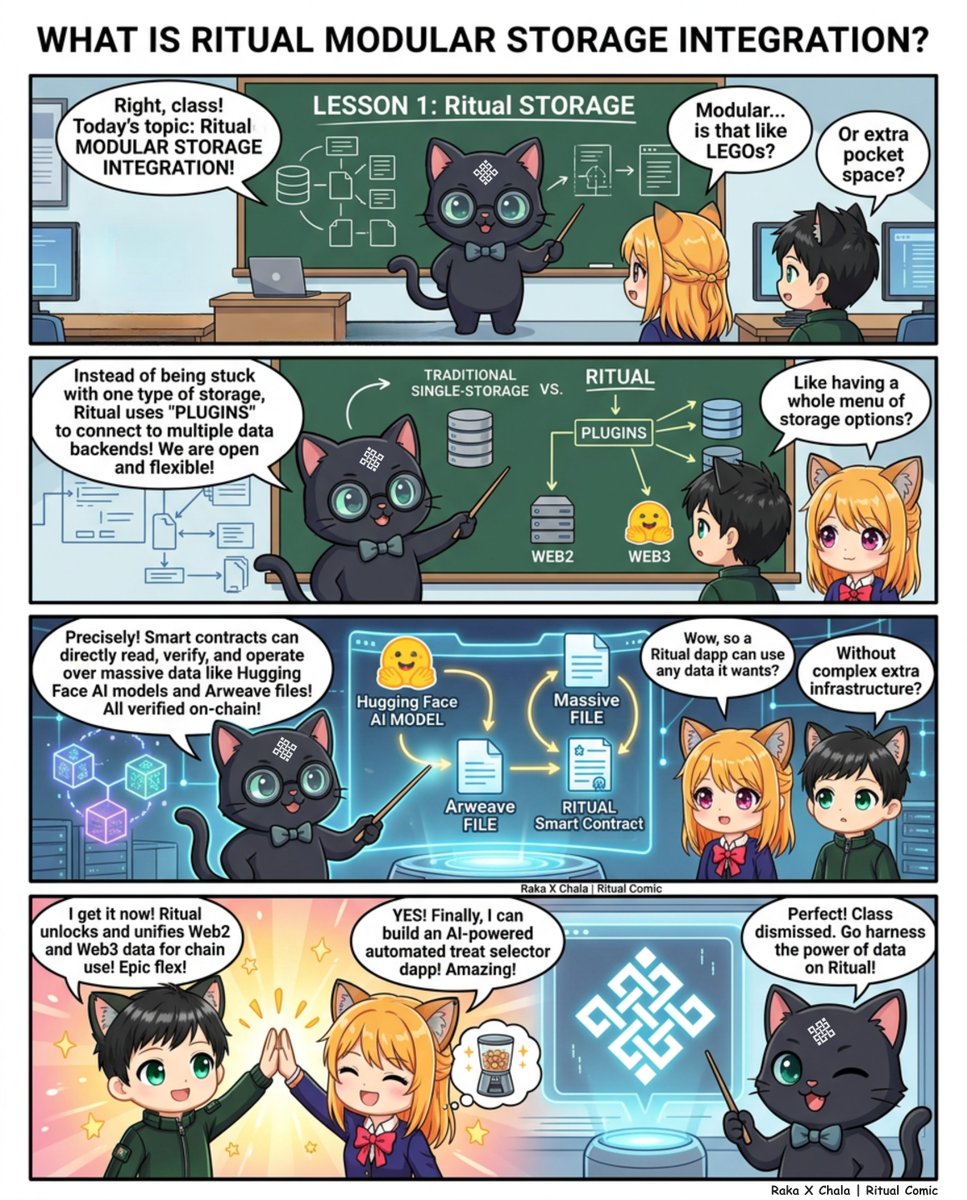

Ritual × Arweave: Decentralized Storage for Trustless AI

AI sovereignty isn’t just about decentralized execution. It’s also about where models live, how their data is stored, and whether their history can be trusted. That’s why the integration between Ritual and Arweave is a meaningful step forward for decentralized AI infrastructure. Through this collaboration, Ritual brings permanent, trustless storage into its AI stack, ensuring that models, proofs, and data remain immutable and verifiable over time.

• Why Storage Matters for Decentralized AI

AI models are more than just code. They evolve, get fine-tuned, generate outputs, and produce proofs. When this entire lifecycle depends on centralized servers, it introduces trust assumptions and single points of failure. By integrating Arweave, Ritual ensures that:

> AI models can be stored permanently
> Metadata and proofs remain tamper-proof
> Historical versions are preserved
> Data is accessible across the ecosystem

• How Arweave Integrates Into the Ritual Stack

The integration spans both Ritual Chain and Infernet in two key ways:

Storage Layer for AI Models

Arweave functions as a decentralized storage layer for AI models. Nodes within the Ritual network can dynamically upload and download models directly from Arweave. This removes reliance on centralized hosting providers and enables transparent model versioning. Every update can be preserved, creating an immutable record of development over time.

Permanent Storage for Artifacts and Metadata

AI systems generate critical artifacts such as:

> Model metadata
> Proof-related data
> Privacy artifacts
> Zero-knowledge programs
> NFT assets
> Static application data

Arweave serves as the permanent repository for these artifacts. Ritual Chain nodes can asynchronously reference this data for verification, compliance, or proof validation workflows.
• Dedicated Arweave Bundlers

To ensure efficient processing of storage requests, Ritual and Arweave are collaborating on Dedicated Arweave Bundlers. These bundlers aggregate multiple storage transactions into optimized batches before submission to the Arweave network. The result is improved reliability, streamlined workflows, and greater efficiency for developers building on Ritual.

• Unlocking a New AI Primitive

The integration enables new design possibilities for decentralized AI applications.

Model Versioning and Provenance

For applications requiring auditability, such as DeFi protocols or governance systems, maintaining a transparent history of model updates is critical. Arweave provides an immutable ledger of model development, ensuring full traceability and integrity verification.

• Verifiable AI Through ZK Artifacts

Zero-knowledge proofs are increasingly important for verifying AI computations. By storing ZK programs and their associated metadata on Arweave, Ritual ensures these artifacts remain transparent and tamper-proof. Users can independently verify computation results long after execution.

• Immutable Vector Database Storage

For RAG-based workflows, the integrity of embeddings and source data is essential. By storing vector data immutably on Arweave, Ritual enables:

> Permanent embeddings
> Verifiable source data
> Auditable AI responses

• Advancing AI Sovereignty

True AI sovereignty requires decentralization across the full stack: execution, storage, proofs, and historical data. Ritual provides decentralized AI execution infrastructure, and Arweave provides permanent, censorship-resistant storage.
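The model versioning and provenance idea above can be sketched as a hash-linked log, where each entry commits to the model bytes and to the previous entry. This is only an illustration using Python's standard library: it does not call Arweave or Ritual, and `record_version` and `verify_log` are hypothetical helper names. On a permanent store, each entry would be written once and later re-checked the same way.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(body: Dict) -> str:
    """Hash the entry body with a stable key order."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def record_version(log: List[Dict], model_bytes: bytes, note: str) -> Dict:
    """Append a new version entry that links to the previous one."""
    body = {
        "version": len(log) + 1,
        "model_sha256": hashlib.sha256(model_bytes).hexdigest(),
        "note": note,
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS,
    }
    entry = {**body, "entry_hash": _entry_hash(body)}
    log.append(entry)
    return entry


def verify_log(log: List[Dict]) -> bool:
    """Check every link and every entry hash, from the first entry onward."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev or _entry_hash(body) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True


history: List[Dict] = []
record_version(history, b"weights-v1", "initial release")
record_version(history, b"weights-v2", "fine-tuned on new data")
print(verify_log(history))

# Rewriting an old entry breaks its hash, so the tampering is detectable.
history[0]["note"] = "edited after the fact"
print(verify_log(history))
```

Because each entry includes the hash of the one before it, changing any historical version invalidates every check from that point on, which is the auditability property the post describes.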
• Looking Forward

This integration unlocks new opportunities for:

> Fully auditable AI agents
> Permanent AI artifacts
> Trustless model distribution
> Compliance-ready decentralized AI systems

As decentralized AI continues to evolve, integrations like Ritual × Arweave demonstrate how infrastructure layers can combine to enable more secure, transparent, and resilient applications.

gRitual💚 @ritualnet @ritualfnd

Yo guys, welcome back, hahah. Gritual! This time I created profile photo art for @0xbadel. I hope you like it. @ritualfnd @ritualnet