kai

@yourkaisensei

print(writing code..)

CODEX SKILL TO BRUTALLY TEST ANY STARTUP IDEA!

Most startup ideas sound good. This Codex skill tells you why they probably won’t work.

Just give Codex your idea and it pressure-tests it for you:
-> finds the core assumption
-> exposes fatal flaws
-> checks if the problem is real
-> maps real competitors
-> plans your first 10 customers
-> defines a 2-week MVP

Install: npx --yes codex-startup-pressure-test-skill@latest

100% open source. Repo in bio.

@sudoingX How do you organize your projects and keep them separate? Would you use the same instance for managing both work and personal things?

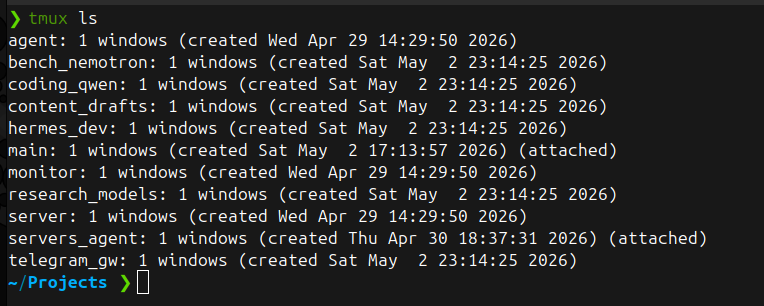

nemotron 3 omni q8 on dgx spark (128gb unified memory) cranking via hermes agent at 56 tok/s. first night of real local agentic work on this box, and local hits harder than i thought it would.

q8 (near-lossless quant, perplexity loss <1% vs fp16) running 256k context on 33 gb of unified memory, 90+ gb still free. multimodal omni weights included. hermes agent driving from telegram, talking to it from bed.

speed: 56 tok/s generation, 1,300 tok/s prefill. for context, qwen 3.6 27b at q4 (heavy quant) on a 3090 = 40 tok/s. nemotron at a higher-precision quant on spark beats qwen at a lower-precision quant on a 3090. the moe architecture (3.5b active params) earns its keep.

what i tested tonight: agentic tool calling works clean. ask it to check disks, it autonomously runs df -h through hermes agent. ask it to set up the telegram gateway, it invokes the hermes-agent skill, walks through the prompts, completes the flow. overthinks a bit before tool calls (reasoning model trait) but lands the right move every time. researches api docs, internalizes, tests, documents. completes tasks.

current models on dgx spark: 9 gguf files, 305 gb total. mix of qwen 3.6 27b dense (5 quants), nemotron omni (4 quants), deepseek v4-flash 158b q4 (the 112gb flagship test). more data coming this week as i benchmark each.
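to put the speed numbers in perspective, here is a rough back-of-the-envelope sketch in python. it assumes prefill and generation throughput stay constant at the quoted 1,300 tok/s and 56 tok/s regardless of context length, which real runs usually don't (throughput tends to drop as context grows). the function names and the example token counts are hypothetical, picked only for illustration.

```python
def estimate_latency_s(prompt_tokens: int, output_tokens: int,
                       prefill_tps: float = 1300.0,
                       gen_tps: float = 56.0) -> float:
    """Rough wall-clock estimate for one request, in seconds.

    Assumption: throughput is constant, so
    latency = prompt_tokens / prefill_tps + output_tokens / gen_tps.
    Defaults are the figures quoted in the post.
    """
    if prefill_tps <= 0 or gen_tps <= 0:
        raise ValueError("throughput must be positive")
    if prompt_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return prompt_tokens / prefill_tps + output_tokens / gen_tps


def generation_speedup(tps_a: float, tps_b: float) -> float:
    """How many times faster setup A generates tokens than setup B."""
    if tps_b <= 0:
        raise ValueError("baseline throughput must be positive")
    return tps_a / tps_b


if __name__ == "__main__":
    # hypothetical request: 13,000 prompt tokens in, 560 tokens out
    print(f"{estimate_latency_s(13_000, 560):.1f} s")
    # nemotron q8 on spark (56 tok/s) vs qwen q4 on a 3090 (40 tok/s)
    print(f"{generation_speedup(56, 40):.2f}x")
```

under those assumptions the prefill and generation phases each take about 10 seconds for that hypothetical request, and the spark setup generates 1.4x as fast as the 3090 one.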