Yusuf Young

@yusufyoung

Building Aether – an AI that joins your company, learns how you work, and spawns agent teams to run it. Exited FunnelBud (Sweden's #1 SMB CRM)

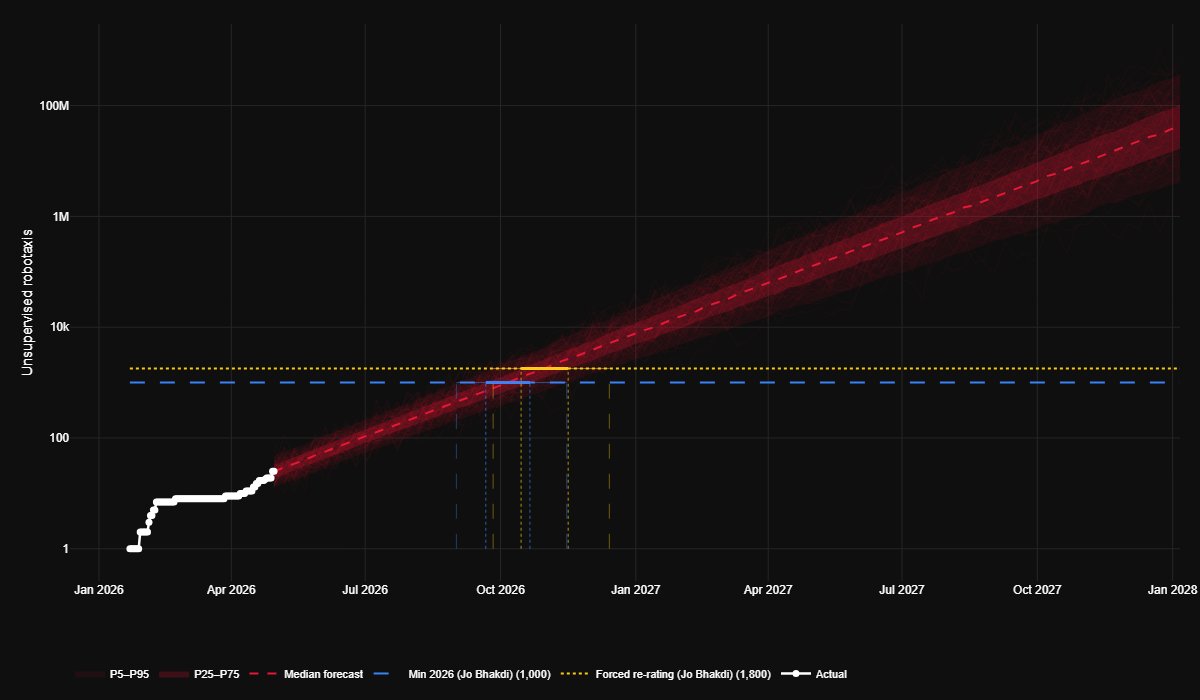

Tesla's unsupervised Robotaxi fleet is 2.4x larger than it was last month 🙈

1 million robotaxis. That's how many Tesla would need to match its automotive revenue. Check this out: to reach its current annual automotive revenue of ~$70b with ride-hailing, Tesla would need to provide 70b annual miles (at $1/mile, which many say is the goal) – which happens to be pretty much exactly what Uber likely did in 2025. Assume each robotaxi does ~70,000 miles per year (a plausible high-utilization target with 16–20 hours of daily operation) and you would need a fleet of exactly 1 million robotaxis.
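The back-of-envelope math above, written out as a small Python function. The function name and the 50,000-mile comparison are illustrative additions, not part of the original estimate; the inputs are the post's own figures (~$70b revenue, $1/mile, ~70,000 miles per vehicle per year).

```python
def fleet_needed(annual_revenue_usd: float,
                 price_per_mile_usd: float,
                 miles_per_vehicle_per_year: float) -> float:
    """Vehicles required for ride-hailing revenue to equal a revenue target."""
    # Revenue target divided by price per mile gives the paid miles required.
    annual_miles = annual_revenue_usd / price_per_mile_usd
    # Paid miles divided by miles each vehicle can drive gives the fleet size.
    return annual_miles / miles_per_vehicle_per_year


print(f"{fleet_needed(70e9, 1.0, 70_000):,.0f}")  # 1,000,000
# Lower utilization raises the required fleet proportionally.
print(f"{fleet_needed(70e9, 1.0, 50_000):,.0f}")  # 1,400,000
```

The result scales inversely with both price per mile and utilization, so halving either one doubles the fleet needed.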

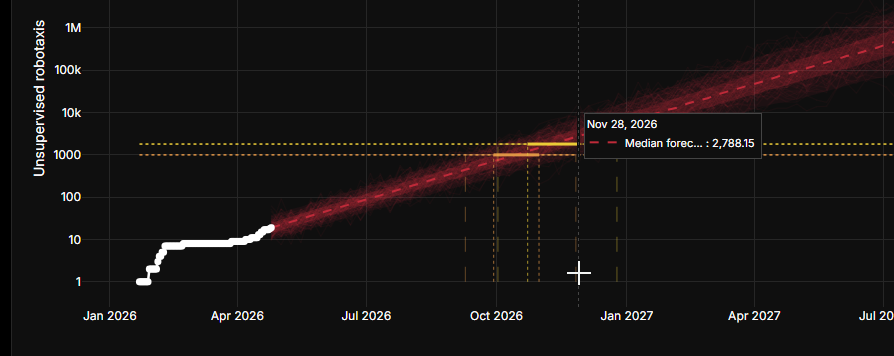

I made a Robotaxi scaling predictor: yusufhgmail.github.io/tslaRobotaxiPr… It takes the actual data and simply plots a curve on the assumption that the line we have so far is the start of an exponential increase. If we keep scaling at this pace and this really is the start of an exponential, we will cross 1,000 Robotaxis in Sep–Dec 2026 and reach your "forcing function re-rating" number of 1,800 robotaxis in Oct 2026–Feb 2027.
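The predictor's exact method isn't described beyond "assume the line so far is the start of an exponential." A minimal sketch of that idea, assuming a least-squares fit on the logarithm of fleet counts: fit `count = a * exp(b * day)`, then solve for the day a threshold is reached. The function names and the sample numbers are hypothetical and are not real fleet data.

```python
import math


def fit_exponential(days, counts):
    """Least-squares fit of log(count) = log(a) + b * day. Returns (a, b)."""
    n = len(days)
    logs = [math.log(c) for c in counts]
    mean_x = sum(days) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, logs))
    b = sxy / sxx                       # daily growth rate (in log space)
    a = math.exp(mean_y - b * mean_x)   # fitted count at day 0
    return a, b


def day_reaching(target, a, b):
    """Day on which the fitted curve a * exp(b * day) equals target."""
    if b <= 0:
        raise ValueError("fitted curve is not growing; target is never reached")
    return math.log(target / a) / b


# Hypothetical observations (day number, fleet size), doubling every 30 days.
days = [0, 30, 60]
counts = [10, 20, 40]
a, b = fit_exponential(days, counts)
print(round(day_reaching(80, a, b)))  # 90
```

Fitting in log space treats a fixed percentage miss the same at every fleet size. A month-over-month multiple such as the "2.4x" above corresponds to `b = math.log(2.4) / 30` per day if a month is taken as 30 days (an assumption). The projection is only as good as the exponential assumption itself.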

@JOBhakdi Sure, but the 12-month outlook is very questionable. It would not be unfair to assume full-on robotaxi could still be more than a year away. It remains a long-term bet. Automotive/FSD could save the day if FSD is widely approved. Optimus will take even longer.

I think privacy in AI agents is a dead end if you try to solve it technically. I've been designing Aether's privacy model for weeks now, and the deeper I go, the clearer this gets.

You can't classify information as "private" or "not private." It doesn't work. Take a user's name. On a business card, it's fine. Linked to the fact that the user is in therapy, it's private. The name didn't change. The context did.

And "the user is in therapy" isn't inherently private either. It's private because the user doesn't want others to know: social judgment, embarrassment, vulnerability. That's a preference, not a property of the information. Follow any piece of "private" information to its root and you arrive at the same place: someone's preference.

A financial transaction: sharing it with the counterparty is expected. Sharing it with a stranger? Sometimes fine, sometimes catastrophic. Sometimes you'd actually want to share it, to signal status or further your interests. Same data, different answer depending on the person and the moment.

There is no universal rule that says "health information is private." In some cultures it isn't. Even within a culture, the same information in the same context may be private to one person and not to another. The only variable is what they prefer.

We initially had a requirement: "the agent must be able to distinguish what is private from what is not." That requirement was wrong. It assumes privacy is a derivable property of data. It isn't. No classification algorithm, no tagging system, no rule engine can determine what is private for a given user in a given context. Somewhere, someone's judgment is required: the user's (at the cost of autonomy) or the agent's (which the user must trust).

I've decided to go with autonomy and build trustworthiness into Aether instead. The agent learns your preferences over time through feedback and alignment, the same way you'd build trust with a human assistant.
This is different from NVIDIA's NemoCloud, for example, which takes the opposite route: require explicit approval for every share, every channel, every piece of information. It's safe. It's also not autonomous. I think the harder path is the right one.
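The post doesn't describe how Aether implements this, so the following is only a hypothetical sketch of the core idea: the share/withhold decision is keyed on who owns the information, what it is, who would receive it, and in what context, and it is learned from the owner's feedback rather than read off a "private" label on the data. Every class, field, and the withhold-by-default rule are assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ShareRequest:
    """One potential disclosure: whose information, what, to whom, in what context."""
    owner: str
    item: str
    recipient: str
    context: str


@dataclass
class PreferenceModel:
    """Hypothetical per-user preference store learned from feedback.

    Nothing here tags data as private; decisions depend only on how the
    owner has reacted to earlier disclosures with the same key.
    """
    allowed: defaultdict = field(default_factory=lambda: defaultdict(int))
    denied: defaultdict = field(default_factory=lambda: defaultdict(int))

    def record_feedback(self, req: ShareRequest, approved: bool) -> None:
        # The owner approved of (or objected to) a disclosure like this one.
        target = self.allowed if approved else self.denied
        target[req] += 1

    def should_share(self, req: ShareRequest) -> bool:
        a, d = self.allowed[req], self.denied[req]
        # Laplace-smoothed estimate that the owner wants this shared.
        # With no feedback it is exactly 0.5, so this sketch withholds by default.
        return (a + 1) / (a + d + 2) > 0.5


model = PreferenceModel()
card = ShareRequest("user", "name", "client", "business_card")
therapy = ShareRequest("user", "name", "coworker", "therapy_appointment")
model.record_feedback(card, approved=True)
model.record_feedback(therapy, approved=False)
print(model.should_share(card))     # True
print(model.should_share(therapy))  # False
```

The same item ("name") gets opposite answers because only the recipient and context differ, which is the point of the argument above. Exact-match keys are the obvious weakness of this sketch: an agent acting autonomously would need to generalize from feedback to similar but unseen situations, whereas the approval-per-share alternative sidesteps generalization by always asking.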