zeb

2.6K posts

@zebassembly I KEEP TELLING PEOPLE ABOUT THIS. NO CLUE HOW PEOPLE ARE DESENSITISED TO THIS, THE SCALE HERE IS INSANE

For every person who replies with a screenshot of their cancelled Claude Code plan, I will donate $10 to open source.

The clickhouse team are so so brilliant

SECURITY ADVISORY: TanStack npm packages

A supply-chain compromise affecting 42 @tanstack/* packages (84 versions total) was published to npm earlier today at approximately 19:20 and 19:26 UTC. Two malicious versions per package.

Status: ACTIVE. Packages are deprecated, npm security engaged, publish path being shut down.

Severity: HIGH. Payload exfiltrates AWS, GCP, Kubernetes, and Vault credentials, GitHub tokens, .npmrc contents, and SSH keys.

If you installed any @tanstack/* package between 19:20 and 19:30 UTC today, treat the host as potentially compromised:
• Rotate cloud, GitHub, and SSH credentials immediately
• Audit cloud audit logs for the last several hours
• Pin to a prior known-good version and reinstall from a clean lockfile

Detection. The malicious manifest contains:
"optionalDependencies": { "@tanstack/setup": "github:tanstack/router#79ac49ee..." }
Any version with this entry is compromised. The payload is delivered via a git-resolved optionalDependency whose prepare script runs router_init.js (~2.3 MB, smuggled into each tarball at the package root).

Unpublish is blocked by npm policy for most affected packages due to existing third-party dependents. All 84 versions are being deprecated with a SECURITY warning, and npm security has been engaged to pull tarballs at the registry level.

Full technical breakdown, complete package and version list, and rolling status updates: github.com/TanStack/route…

Credit to the security researcher for responsible disclosure.
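
The detection rule in the advisory (an `optionalDependencies` entry for `@tanstack/setup` resolved from `github:tanstack/router#...`) can be checked locally with a small script. What follows is a rough sketch, not an official tool: the function names `is_compromised_manifest` and `find_compromised` are made up for illustration, the default `node_modules` path is an assumption, and the commit hash is deliberately not matched because the advisory truncates it. It only inspects manifests on disk and does not replace rotating credentials.

```python
import json
import os
import sys
from typing import Iterator

# Marker taken from the advisory text. The commit hash is truncated there,
# so only the dependency name and the git-resolved prefix are matched.
SUSPECT_DEP = "@tanstack/setup"
SUSPECT_PREFIX = "github:tanstack/router#"


def is_compromised_manifest(manifest: dict) -> bool:
    """Return True if a parsed package.json carries the advisory's marker."""
    optional = manifest.get("optionalDependencies")
    if not isinstance(optional, dict):
        return False
    spec = optional.get(SUSPECT_DEP)
    return isinstance(spec, str) and spec.startswith(SUSPECT_PREFIX)


def find_compromised(root: str) -> Iterator[str]:
    """Yield the path of every package.json under root that matches the marker."""
    for dirpath, _dirnames, filenames in os.walk(root):
        if "package.json" not in filenames:
            continue
        path = os.path.join(dirpath, "package.json")
        try:
            with open(path, encoding="utf-8") as fh:
                manifest = json.load(fh)
        except (OSError, ValueError):
            # Unreadable or malformed manifest: skip it rather than abort the scan.
            continue
        if isinstance(manifest, dict) and is_compromised_manifest(manifest):
            yield path


if __name__ == "__main__":
    # Hypothetical usage: python scan.py path/to/node_modules
    scan_root = sys.argv[1] if len(sys.argv) > 1 else "node_modules"
    for hit in find_compromised(scan_root):
        print(hit)
```

Any path it prints points at a manifest containing the entry the advisory describes; an empty result only means no matching manifest is currently on disk under that directory.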

FYI physics is still physics. NVMe is fast. Felt like a good day to update you all on this

i'll bless my timeline with this on a sunday. gemini 3.1 pro is the ONLY model I've seen REMOVE heaps of shitty code to refactor with a net-negative churn. do with that what you will.

99.8% of bun’s pre-existing test suite passes on Linux x64 glibc in the rust rewrite

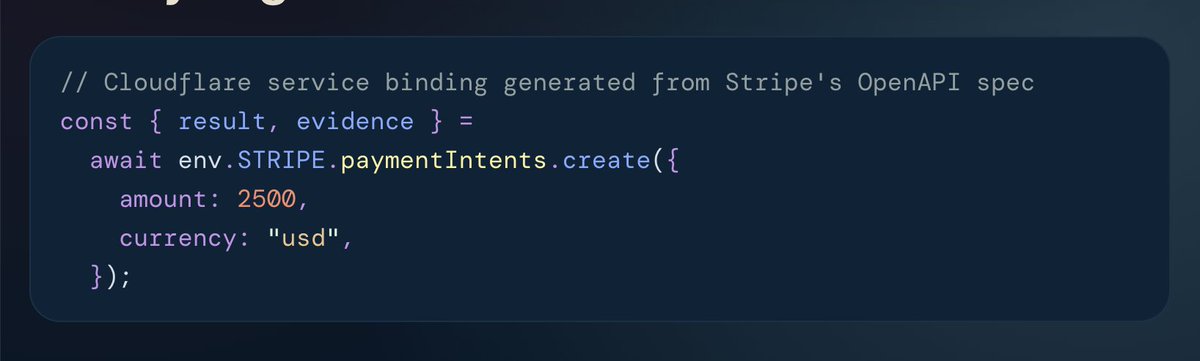

An update regarding the future at @Cloudflare. I’ve shared my full message to the team and details on the support we're providing those departing here: blog.cloudflare.com/building-for-t…