Changling Li

117 posts

@ChanglingXavier

AI safety, multi-agent systems, and governance. Currently at @ETH_en and @MPI_IS working with @maksym_andr and @sahar_abdelnabi.

Excited to share our new paper on AI Agent Traps. An increasing volume of web content is being created by, and consumed by, advanced AI agents. This puts environmental AI safety in focus, as it exposes a vast attack surface via the content that AI agents interact with. Our paper explores the landscape of environmental attacks and defenses, aiming to inform the mitigations needed to ensure the safety of the agentic web.

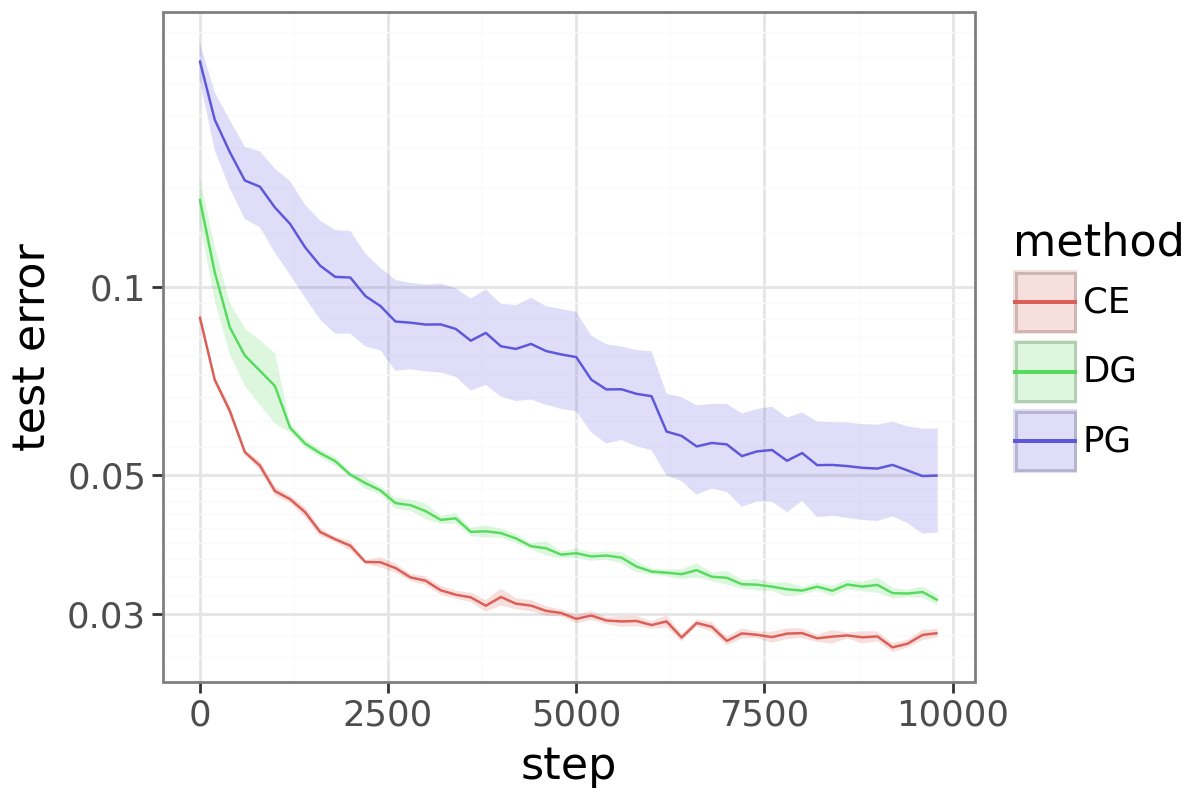

The paper I’ve been most obsessed with lately is finally out: nbcnews.com/tech/tech-news…! Check out this beautiful plot: it shows how much LLMs distort human writing when making edits, compared to how humans would revise the same content. We take a dataset of human-written essays from 2021, before the release of ChatGPT. We compare how people revise draft v1 -> v2 given expert feedback, with how an LLM revises the same v1 given the same feedback. This enables a counterfactual comparison: how much does the LLM alter the essay compared to what the human was originally intending to write? We find LLMs consistently induce massive distortions, even changing the actual meaning and conclusions argued for.

✨New AI Safety work on Steganography and LLM monitoring✨ We propose ‘steganographic gap’: the first principled metric for detecting and quantifying encoded reasoning in LLMs, which can reveal hard-to-detect forms of steganography, e.g., paraphrasing-resistant steganography.

💥 Today we release PostTrainBench v1.0 and the accompanying paper! We expect this benchmark to be key for monitoring progress in AI R&D automation and later recursive self-improvement. So, can LLM agents automate LLM post-training? 🧵

The memory feature can be very useful at times, but with academic work where I'm trying to understand ideas as objectively as I can and work out what is true, I'm afraid it slants the answers to relate to my existing beliefs in a way that is ultimately unhelpful. It feels like intellectual sycophancy and makes me doubt the answer. Obviously models sometimes push back but there's no clear demarcation of when they do so and why. In the screenshot below I'm trying to understand Morgenstern and Von Neumann's mathematical theory of microeconomics, and relate it to how people model AIs in the abstract. The answer is alright but why relate this to my prior work on distributional AGI? It's not necessarily wrong, but detracts from what I'm trying to understand and feels like it's shoehorning ideas that are related and I'm predisposed to like, but not really needed to answer my query accurately.

1/ AI systems are quickly becoming embedded throughout the economy. But we have almost none of the regulatory tools needed to manage them, regulatory markets included. Here's what I think we should do about it:

It’s been a pleasure to lead our #IASEAI’26 workshop on ‘Establishing Foundational Principles and Thresholds for Multi-Agent AI Governance’, in collaboration with @BrookingsInst and hosted by @IASEAI. Thank you to all the technical experts and leaders from governance, policy, ethics, and law who joined and made this workshop and pre-workshop dinner a success.

Are neural nets across modalities really converging to the same representation as they scale, as the Platonic Representation Hypothesis suggests? We show that common representational similarity metrics are confounded by network width & depth. We propose a permutation-based null calibration that fixes this. Result❓ • Global convergence largely disappears. • Local neighborhoods persist. We propose the alternative Aristotelian Representation Hypothesis: Neural networks, trained with different objectives on different data and modalities, are converging to shared local neighborhood relationships. Very proud of @FabianGroger and @ShuoWen18 for this work! Paper: arxiv.org/abs/2602.14486 Webpage: brbiclab.epfl.ch/aristotelian Code: github.com/mlbio-epfl/ari…