🚨📢Announcing the second Technical AI Governance Research (TAIGR) workshop @icmlconf. Accepting submissions (up to 8 pages) until April 24 on technical topics in AI governance! #icml2026

Cas (Stephen Casper)

@StephenLCasper

AI safeguards & gov. research. PhD student @MIT_CSAIL (mnr. Public Policy), and Fellow at @BKCHarvard. Fmr. @AISecurityInst. https://t.co/r76TGxSVMb

When talking about AI and its future, I wish it were more of a faux pas to make vague appeals to the benefits of technological progress -- and I don't just mean "AI curing cancer". Technological progress in general probably isn't as good as we normally think it is. Beyond the fact that tech companies are always selling us something, pro-technology stories are shaped by survivorship and storytelling biases. For example, lots of people in the world's history were victims of guns, germs, and steel, but they're not around to complain anymore. They died.

Appeals to the value of technical progress should be considered unserious unless they engage with the unmemorialized catastrophes that modern privileged society is built on. AI hasn't caused such a catastrophe (yet?), but if we are going to talk about the benefits of generative AI, we should leave some space in the conversation for the 84% of people in the world who don't use it and aren't in the room with the decision-makers. 26% of humans don't even use the internet.

And I think the internet is a good analogy. It is a general-purpose technology that can do a lot of great things. It CAN give everyone a world-class education. It CAN give everyone access to an integrated healthcare app. It has certainly been around long enough to have achieved these things. But it hasn't.

OpenAI is endorsing Illinois bill SB 315, which requires safety reports (similar to laws in California and New York) and third-party audits of AI labs. They say all of their state AI policy work these days is aimed at creating a "consistent, nationwide framework."

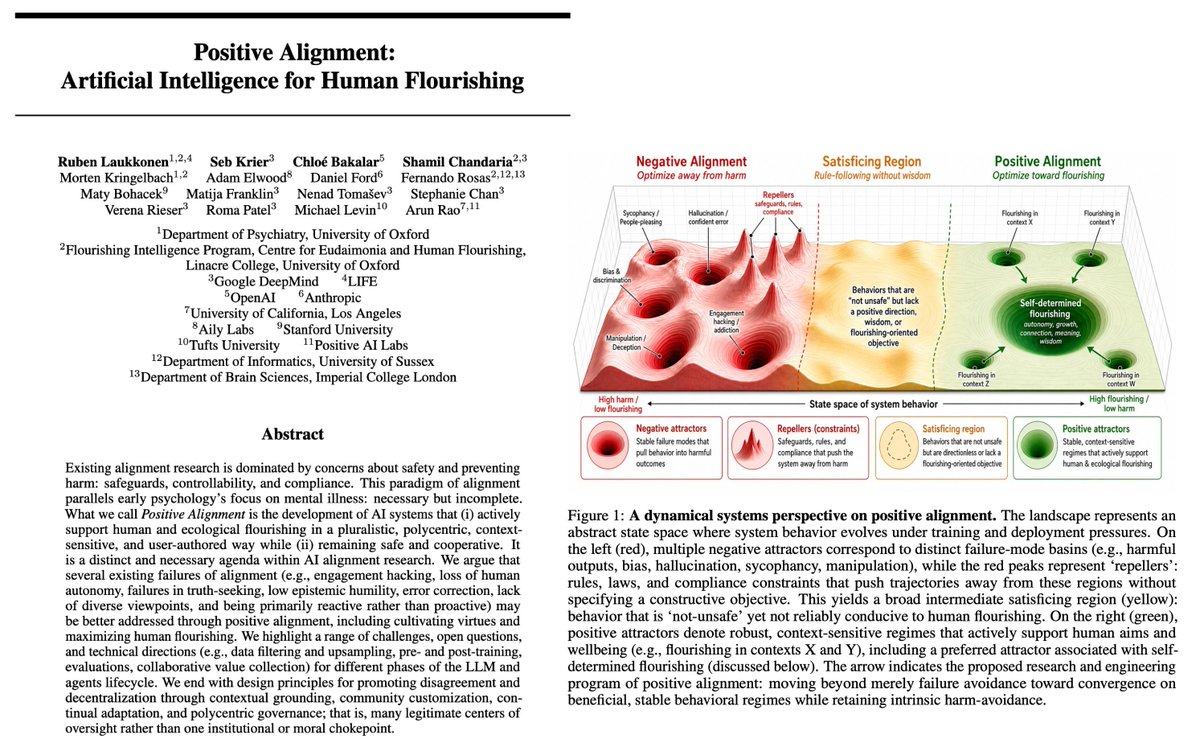

If anyone builds it, everyone thrives. Over the past decade, a lot of important work on AI alignment has focused on avoiding harm. But freedom from harm isn't the same as freedom to flourish. In this paper, we introduce 'Positive Alignment'. A positively aligned agent is one that helps us navigate our own value trade-offs, builds our resilience, and acts as a scaffold for human flourishing. Doing this without slipping into top-down, technocratic paternalism is the great design challenge of our time. We think a lot more research is now needed to explore this frontier: how do we align models that actively help us thrive? Amazing work by @RubenLaukkonen, @drmichaellevin, @weballergy, @verena_rieser, @AdamCElwood, @996roma, @FranklinMatija, @shamilch, @_fernando_rosas, @scychan_brains, @matybohacek, @sudoraohacker, and others. arxiv.org/abs/2605.10310