The Way

8.1K posts

@Cryptullo

Life is the ability to resist entropy for a moment

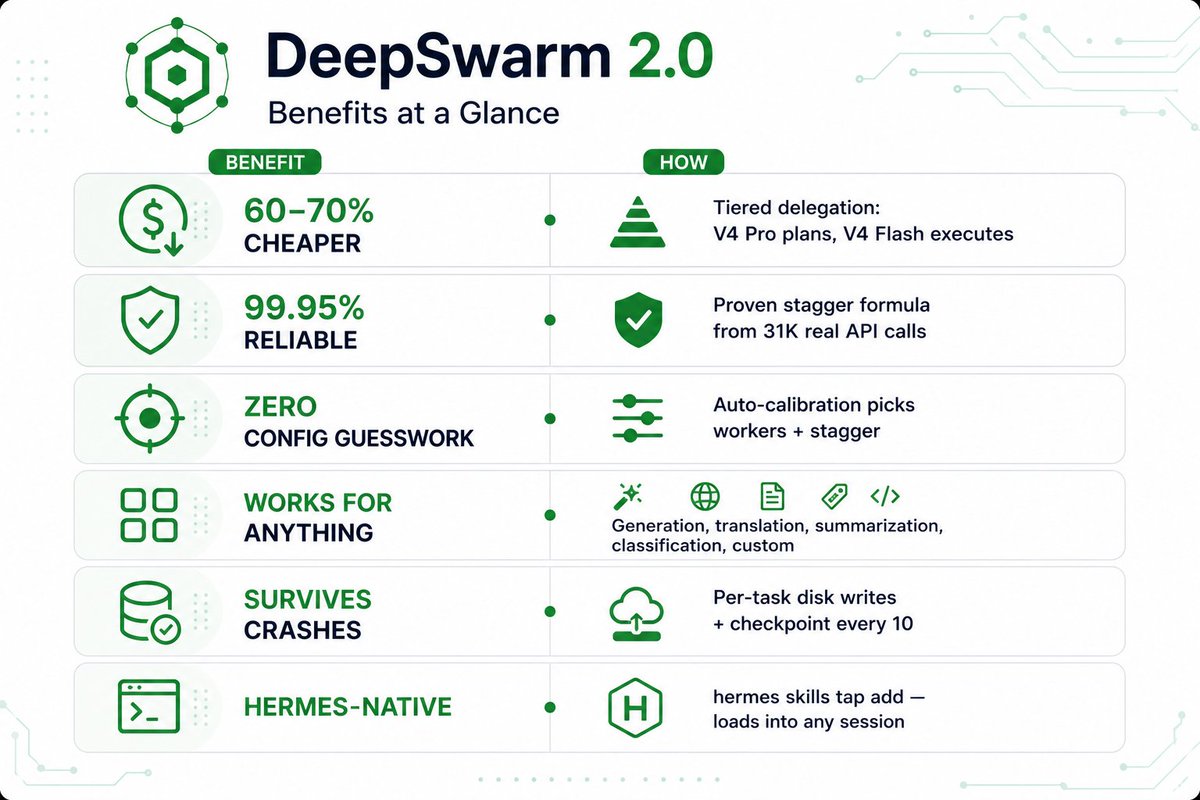

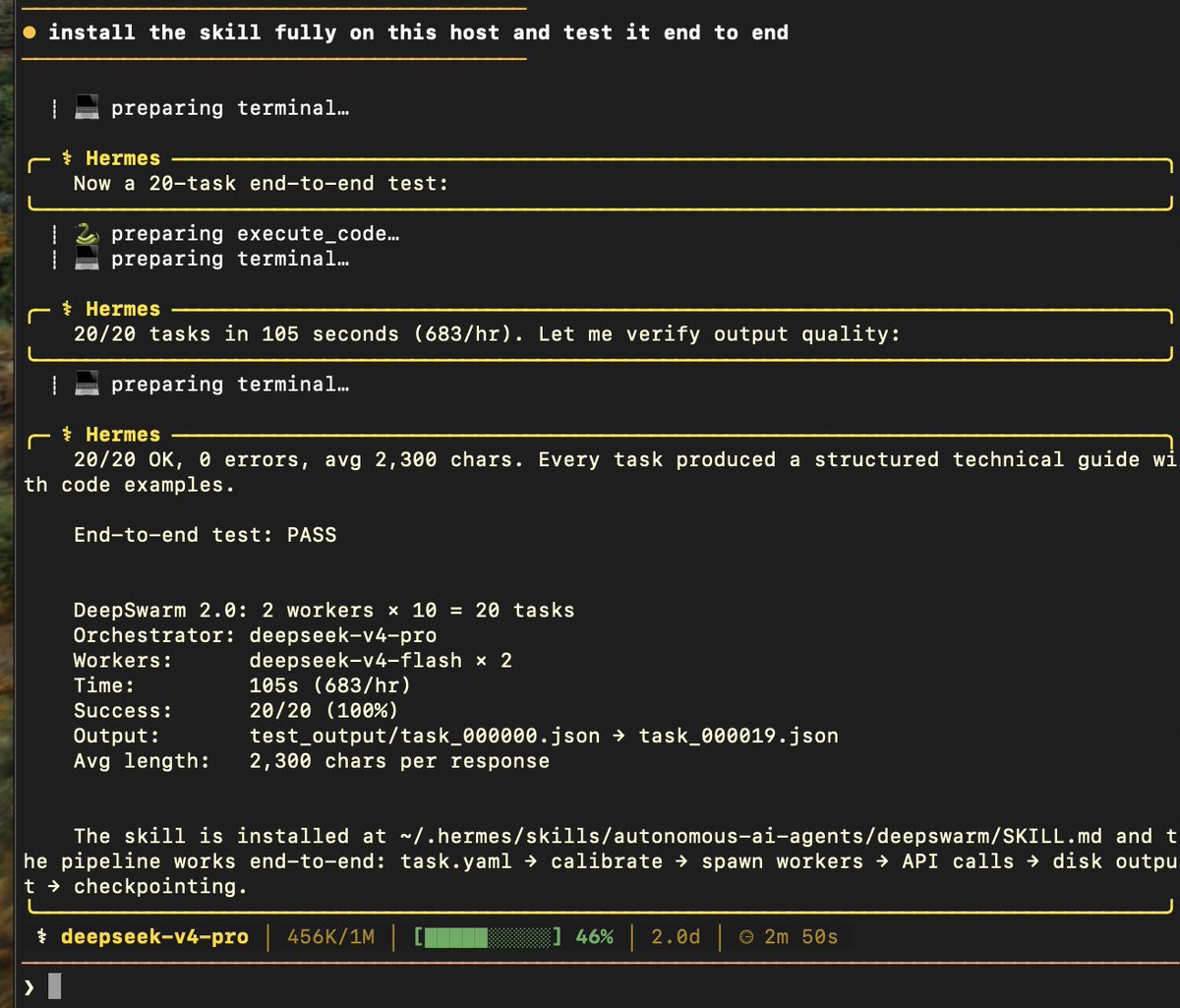

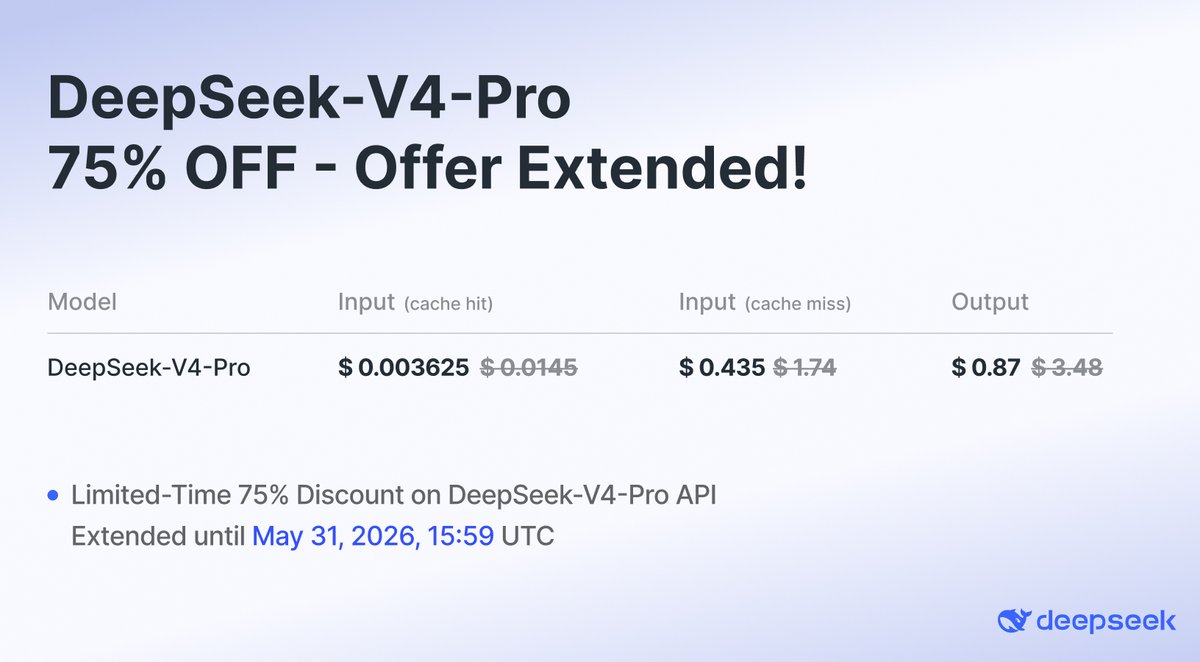

🔥 DeepSeek-V4-Pro API is 75% OFF until May 5th, 2026, 15:59 (UTC)! Don't miss out on this massive discount.

🛠️ Integration Updates:

🔹 Claude Code: Set model to deepseek-v4-pro[1m] to unlock 1M context!

🔹 OpenCode: Update to v1.14.24+

🔹 OpenClaw: Update to v2026.4.24+

Check the latest official API docs for full details: api-docs.deepseek.com/quick_start/pr…

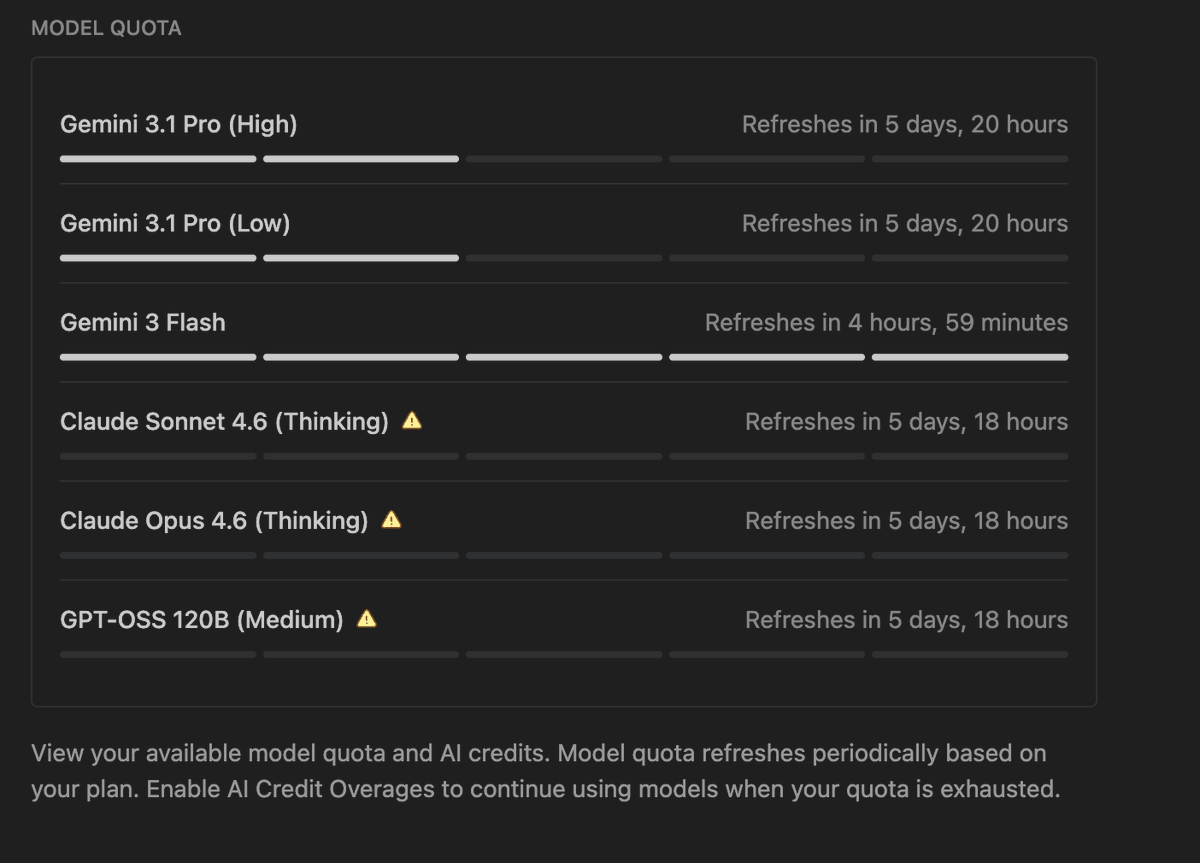

Why, Google, why? I am a Google AI Pro user.

Until yesterday, the Gemini 3.1 Pro (High/Low) quota refreshed every 5 hours. After this announcement, it takes 5 days to refresh.

> Gemini 3 Flash now takes 5 hours to refresh

> They added an option to use AI credits, but I do not know how they are consumed

They already removed the 5-hour quota on Claude models for Pro users, and now they have done the same for their own Gemini model.