Gaussian Process

@GaussianProcess

algorithmic trading

This is the most probability question of all the probability questions I’ve ever come across

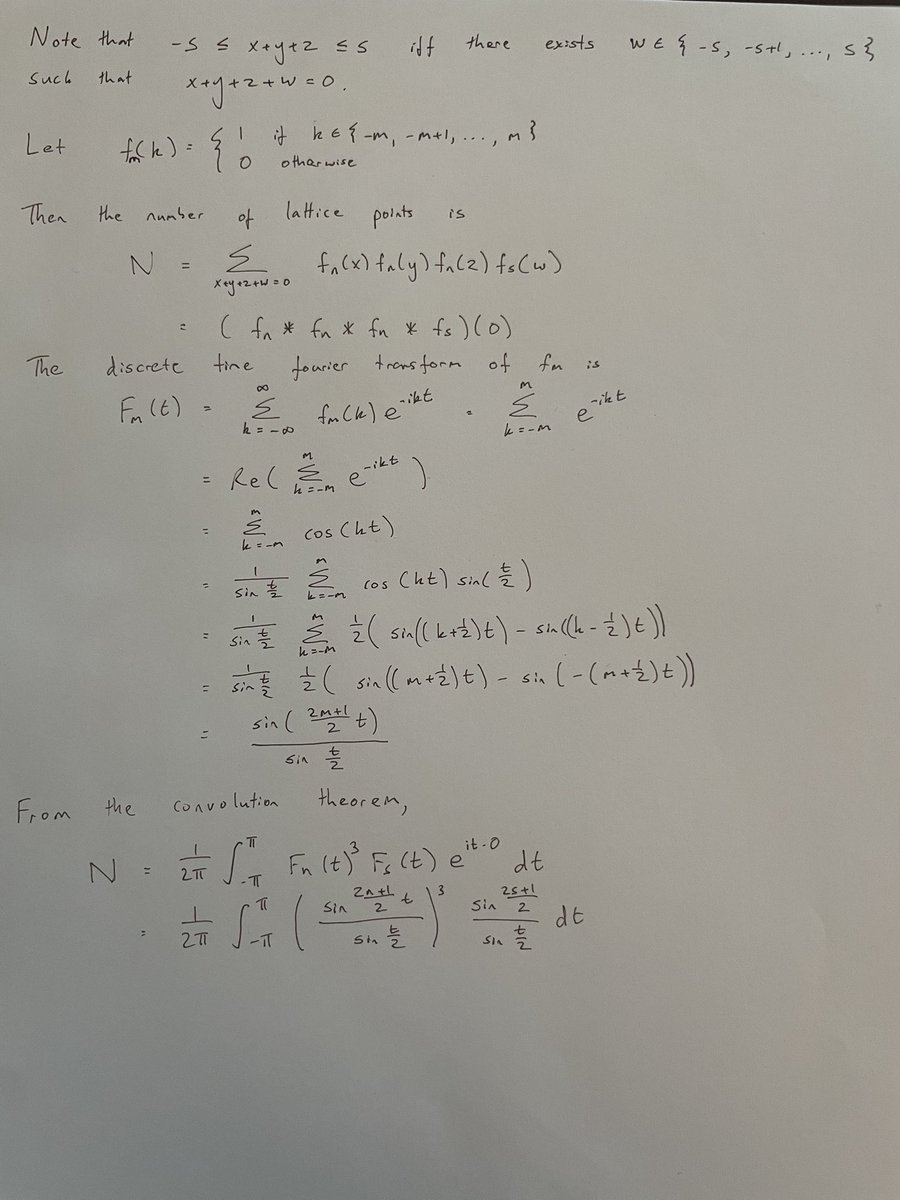

The answer depends on your interpretation.

Interpretation A: We sample the fraction after each child is born. Then we have a series where each element is independently a boy or a girl, so by symmetry the expected fraction of girls is 0.5 at each step.

Interpretation B: We sample the fraction after each family is finished. Suppose N families have finished. Then there are N girls and a random number B of boys, with E[B] = N. Since N/(N+B) is strictly convex in B, Jensen's inequality gives E[N/(N+B)] > N/(N+E[B]) = 0.5. So at any finite step, the expected fraction is more than 0.5! By the law of large numbers, N/(N+B) converges almost surely to 0.5 as N tends to infinity.

Interpretation C: We start with a fixed population and we want to know what happens in the long run. In fact, the population will die out, so the fraction isn't even defined. Let q be the probability that a population started from one man dies out, and let b_i be the probability that a man has i boys. Conditioning on the number of boys gives q = b_0 + b_1*q + b_2*q^2 + ... The RHS is convex in q, so there can be at most two solutions in [0, 1], and q = 1 is always one of them. The expected number of boys per man is 1, so the RHS has slope 1 at q = 1 and is tangent to the diagonal there, which leaves q = 1 as the only solution. So the probability that a population with one man survives is p = 1 - q = 0.
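Interpretation B is easy to check numerically. The sketch below is only an illustration, and it assumes the setup implied above: each birth is a boy or a girl with probability 1/2, and every family stops at its first girl. The function names are made up for this example. For a single family the expectation has a closed form, E[1/(1+B)] = sum over k >= 0 of 2^-(k+1)/(k+1) = ln 2, which is about 0.693.

```python
import math
import random


def boys_before_first_girl(rng):
    """Boys born to one family before its first girl (assumed fair coin per birth)."""
    boys = 0
    while rng.random() < 0.5:  # each birth is a boy with probability 1/2
        boys += 1
    return boys


def mean_girl_fraction(n_families, trials, seed=0):
    """Monte Carlo estimate of E[N / (N + B)] once n_families have finished."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        boys = sum(boys_before_first_girl(rng) for _ in range(n_families))
        total += n_families / (n_families + boys)
    return total / trials


def exact_single_family(terms=200):
    """Partial sum of E[1/(1+B)] for N = 1; the full series equals ln 2."""
    return sum(0.5 ** (k + 1) / (k + 1) for k in range(terms))


if __name__ == "__main__":
    print("N=1 exact:", exact_single_family(), "ln 2:", math.log(2))
    for n in (1, 10, 100):
        print("N =", n, "estimate:", mean_girl_fraction(n, 20000))
```

The estimates should sit above 0.5 for every N and drift toward 0.5 as N grows, in line with the Jensen and law-of-large-numbers arguments.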

Y’all complain about LeetCode interviews but omg have you seen Quant interview questions?? Wth is this bro 😭

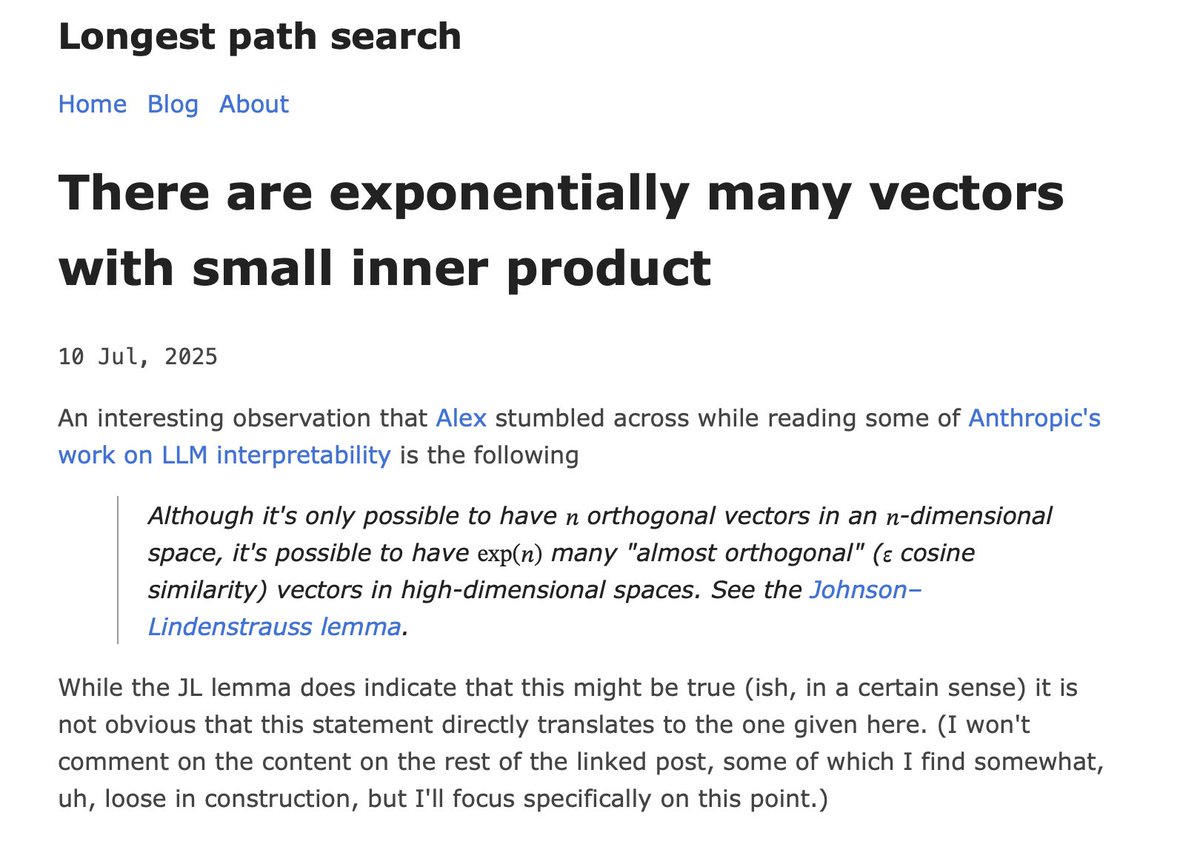

@MartinTassy @jason_of_cs @ciamac @Tim_Roughgarden @alz_zyd_ @_Dave__White_ Turns out that the constant we were looking for includes the Riemann zeta function evaluated at 1/2. I feel like we are getting close to connecting AMM research to the Riemann hypothesis, which is pretty impressive given that it all started with xy=k! arxiv.org/pdf/2505.05113

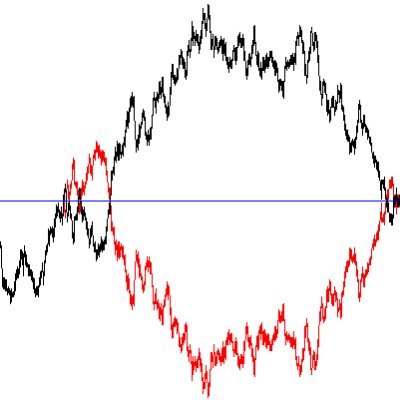

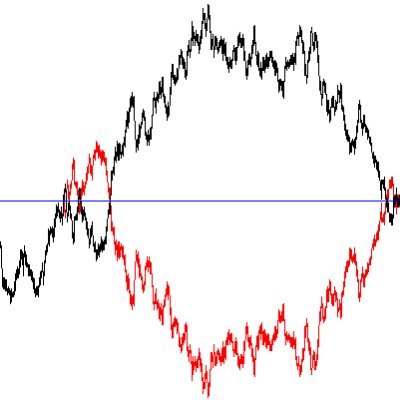

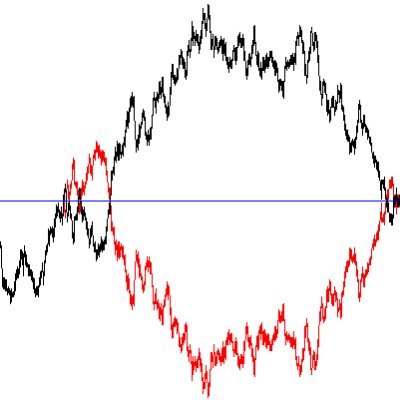

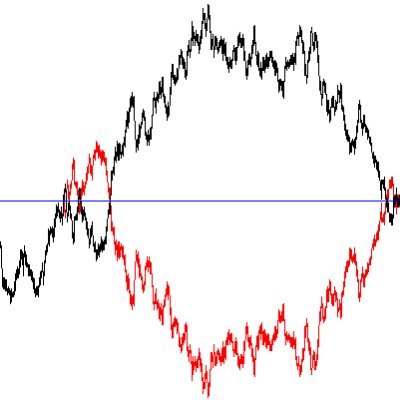

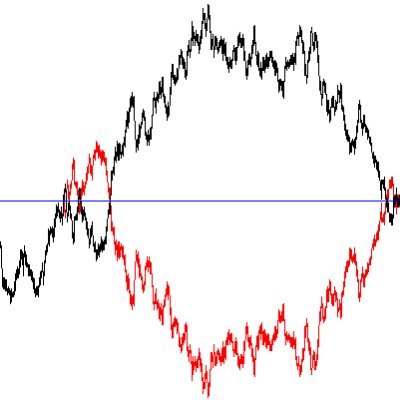

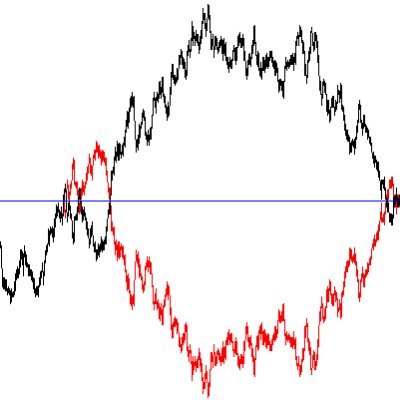

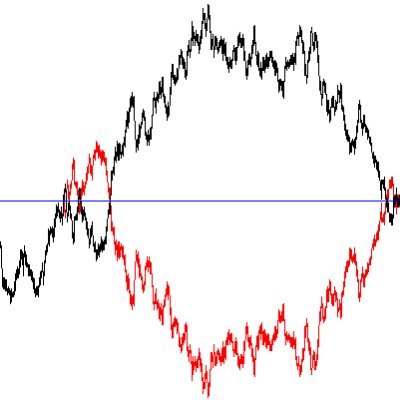

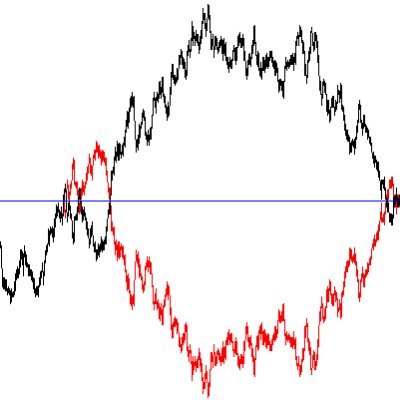

1/ Toy model showing that MEV extraction from ordinary users will be slightly worse in post-Merge PoS compared to PoW...

On a recent trip to the UK, @standupmaths shared this delightful little probability fact with me and asked if I might animate an explanation for a video of his. It's a fun one! See his full video with shenanigans relating this to dice and more. youtu.be/ga9Qk38FaHM

Suppose the probability of some event happening is 75%, and everyone knows that. What is the price of a prediction market share that resolves to $1 if that event happens and $0 if it doesn't? Assume 0% interest rates, trustworthy oracle, and perfectly efficient markets.