Pinned Tweet

PrimeLine

366 posts

@PrimeLineAI

4,200+ Claude Code sessions. 72 agents. 480 trait combos. No CS degree. The blueprints are free.

Da Nang - Vietnam · Joined March 2023

64 Following · 94 Followers

the "disposable" vs "load-bearing" frame maps well. I ended up with 4 buckets: always-loaded (safety rules), on-demand (task-specific), compressed (prior step summaries), isolated (per-agent).

tried a flat approach first but the failure rate was brutal.

key shift: tokens-per-task matters more than tokens-per-request. 10 compressed handoffs beat 1 monolithic context.
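A minimal sketch of the four-bucket idea above, assuming each piece of context is tagged with one bucket and that on-demand items carry a task label while isolated items carry an agent label. The bucket names come from the post; `ContextItem`, `build_context`, `tokens_per_task`, and the 4-characters-per-token estimate are hypothetical stand-ins, not the author's implementation.

```python
from dataclasses import dataclass

# Bucket names follow the post; everything else is a hypothetical illustration.
ALWAYS, ON_DEMAND, COMPRESSED, ISOLATED = "always", "on_demand", "compressed", "isolated"


@dataclass
class ContextItem:
    text: str
    bucket: str
    task: str = ""   # only meaningful for on-demand items
    agent: str = ""  # only meaningful for isolated items


def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: roughly 4 characters per token.
    return max(1, len(text) // 4)


def build_context(items, task, agent):
    """Pick the items one agent sees for one step of one task."""
    selected = []
    for item in items:
        if item.bucket in (ALWAYS, COMPRESSED):
            selected.append(item)
        elif item.bucket == ON_DEMAND and item.task == task:
            selected.append(item)
        elif item.bucket == ISOLATED and item.agent == agent:
            selected.append(item)
    return selected


def tokens_per_task(step_contexts):
    """Sum estimated tokens over every request made for a task."""
    return sum(estimate_tokens(i.text) for ctx in step_contexts for i in ctx)
```

Measuring `tokens_per_task` across all steps, rather than the size of any single request, is what makes a chain of small handoffs comparable to one large context.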

@PrimeLineAI @TrungTPhan Exactly the tension. Lean deltas save tokens but create blind spots when an agent needs to recover from a bad step. We've been tagging context as "disposable" vs "load-bearing" at handoff. Costs more upfront but the failure rate on backtracking drops hard.

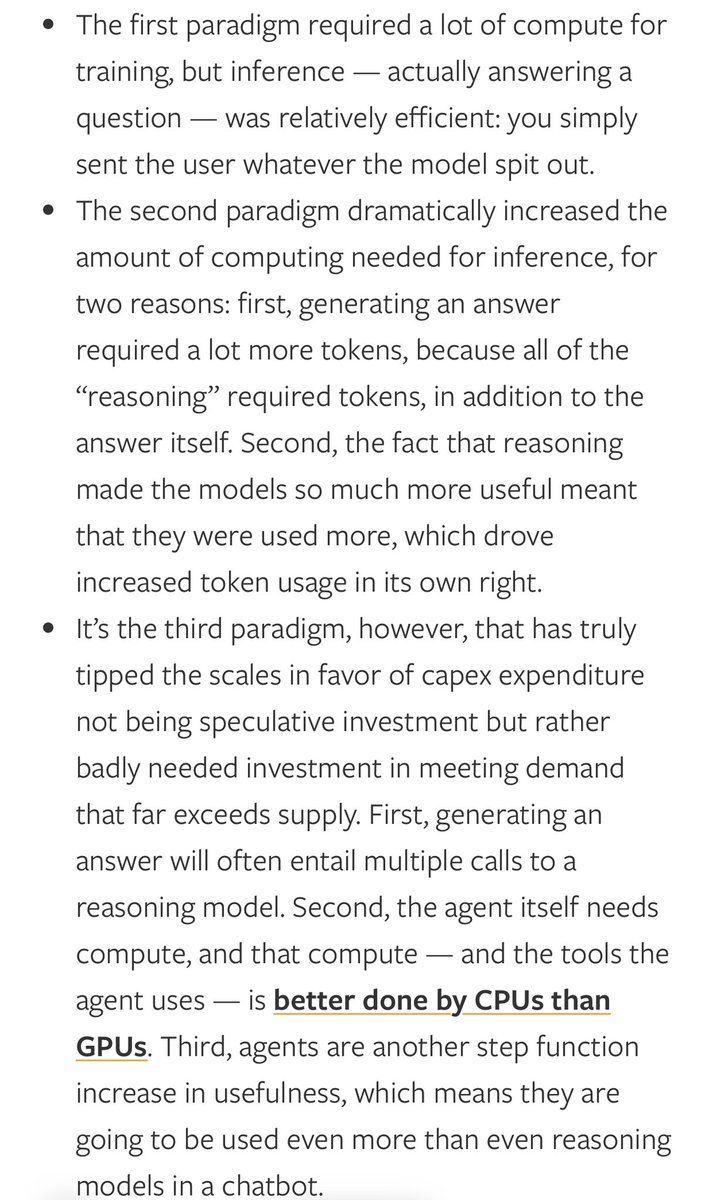

Ben Thompson makes the case for why the rise of AI agents justifies $650B hyperscaler capex spend.

The first paradigm of GenAI was AI chatbots. It required a lot of compute to train but not as much on inference. High token demand required a lot of users.

The second (reasoning) and third (AI agents) paradigms require many more inference tokens. Crucially, with AI agents, the number of power users required to drive token demand through the roof is a lot less than the number of people needed to do chat queries (e.g., one person running 50 coding agents will be massive demand).

Stratechery@stratechery

Agents Over Bubbles: Agents are fundamentally changing the shape of demand for compute, both in terms of how they work and in terms of who will use them. They're so compelling that I no longer believe we're in a bubble. stratechery.com/2026/agents-ov…

@JrHabana not in background. sessions are synchronous. but I've been using SessionEnd hooks to trigger analysis right before shutdown.

for truly async work, cron tasks or the new Telegram/Discord channels let you keep a session alive and interact from your phone while it runs.

@PrimeLineAI so, as I understand it, does it run "in the background", triggering the analysis when the session closes?

Every Claude Code session starts with amnesia.

Same project. Same patterns. Same mistakes. Zero memory of yesterday.

I recorded what happens when you fix that:

→ Claude queries past decisions BEFORE touching the codebase

→ "We switched to Inngest" — saved once, remembered forever

→ Code review catches issues using YOUR architecture context

→ After a fix, the system learns from it. Automatically. No prompt.

2 min demo. Both tools open source.

100%. bigger window ≠ better reasoning. I've seen attention degrade around 100-200K tokens regardless of the ceiling.

what helped: loading only task-relevant context per step and compacting mid-session instead of filling the full window. keeps instruction adherence sharp.

at what point do you notice the degradation kicking in?

@PrimeLineAI @trq212 I agree, and can confirm that the 1M context window didn't raise the point at which the model's quality degrades

the "starts from zero" problem compounds fast. after 60+ sessions I noticed the same architecture decisions resurfacing because nothing persisted between sessions.

built a persistent memory layer on top of Claude Code - knowledge graph that loads project context at session start automatically. wrote up the full approach with a demo: primeline.cc/blog/persisten…

the "goldfish brain" framing is accurate. context compaction kills institutional knowledge - same problem I hit after 60+ sessions.

took a different angle though: instead of a multi-agent evaluation loop, I built a knowledge graph that persists learnings across sessions automatically.

simpler architecture, same core insight. wrote up the full approach: primeline.cc/blog/persisten…

Bookmarks are essentially just likes for people that use OpenClaw, right?

Jay Scambler@JayScambler

persistent memory is the piece most harness builders skip.

ran 4000+ sessions with a manual memory system before automating it - the manual approach degrades exactly when you're most productive.

wrote up how the automated version works with a knowledge graph that loads project context at session start: primeline.cc/blog/persisten…

the harness is everything. and if you fail to understand the harness. then. well. you fail to understand the harness…

anyway. loved reading this.

must bookmark.

Rohit@rohit4verse

the self-improving claude.md loop hits a ceiling around 50 rules. no decay, no routing, no way to load only what matters for the current task.

I built a full system around this exact pattern - persistent memory, keyword-based context routing, auto-archival. 4000+ sessions to get it right. open-sourced it: github.com/PrimeLineDirek…
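One way to picture the three missing pieces named above (routing, decay, archival) is a rule store where each rule carries keywords and a last-used session number. This is a sketch under assumptions: `Rule`, `RuleStore`, the whitespace keyword match, and the `max_idle` default of 20 sessions are all hypothetical and not taken from the linked repository.

```python
from dataclasses import dataclass, field


@dataclass
class Rule:
    text: str
    keywords: set
    last_used_session: int = 0


@dataclass
class RuleStore:
    active: list = field(default_factory=list)
    archived: list = field(default_factory=list)

    def route(self, task: str, session: int) -> list:
        """Return only the rules whose keywords appear in the task description."""
        words = set(task.lower().split())
        hits = [r for r in self.active if r.keywords & words]
        for rule in hits:
            rule.last_used_session = session  # a hit refreshes the rule
        return hits

    def archive_stale(self, session: int, max_idle: int = 20) -> int:
        """Move rules unused for more than max_idle sessions out of the active set."""
        stale = [r for r in self.active if session - r.last_used_session > max_idle]
        self.active = [r for r in self.active if r not in stale]
        self.archived.extend(stale)
        return len(stale)
```

Only the rules returned by `route` would be loaded for a given task, so the active set can grow past the point where a single flat file stops being followed.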

Two things @rez0__'s been running in his Claude Code setup worth stealing:

1. Self-improving CLAUDE.md loop

Add this somewhere in your file:

"Anytime I get frustrated, anytime I have to re-explain something you didn't understand, or anytime you try a command and it fails repeatedly, add that lesson to the Applied Learning section in your CLAUDE.md"

Next time the same situation comes up, it already knows where your session files live, which commands work on your system, whatever it had to figure out the hard way. Saves you time, usage and frustration.

2. Discord as a remote Claude Code interface

He got tired of Claude RC not supporting --dangerously-skip-permissions so he built a Discord bot. Each task spawns its own thread as a session, tool calls render as diff blocks with green for additions, red for removals.

There's also a resume command at the top of every thread so he can jump back in from a VPS. Takes voice messages and attachments. He uses it to validate findings, check logs, host files, all from his phone without touching his laptop.

@NickSpisak_ @coreyganim solid approach. appreciate the breakdown, thanks

It will depend on the user and what they care about. If you're a company with strict compliance and security controls, then you'll likely prompt for that extra depth; if you're a user who doesn't need or care about that, I'd suspect you'd spend more time onboarding features and functionality that make life easier.

Thousands of you asked for this.

→ Drag one plugin into Claude Cowork

→ 10-minute guided onboarding

→ Builds your context files, connects your tools, conducts a security audit

10 minutes later you have an AI agent trained on YOUR business, ready to work.

Launching March 31.

7,803 signed up. Get notified when it drops: return-my-time.kit.com/016b6276aa

prompt-driven is flexible but security depth ends up depending on the user knowing what to ask for. went with a two-layer split - soft rules in claude.md that are prompt-driven, hard guardrails as hooks that catch dangerous patterns regardless of what gets prompted. do you see users defaulting to shallow audits or actually going deep during onboarding?
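The "hard guardrail" half of that split can be sketched as a check that runs on every shell command no matter what the prompt said. This sketch assumes the hook receives the tool call as a dictionary with `tool_name` and `tool_input["command"]`, and that returning exit code 2 blocks the call; treat both as assumptions to verify against the hooks documentation. The pattern list is illustrative only.

```python
import re

# Illustrative deny-list; a real one would be broader and project-specific.
DANGEROUS_PATTERNS = [
    re.compile(r"\brm\s+-(rf|fr)\b"),            # recursive forced delete
    re.compile(r"\bgit\s+push\b.*--force\b"),    # force push
    re.compile(r"\bcurl\b.*\|\s*(ba)?sh\b"),     # piping a download into a shell
]


def check_command(command: str):
    """Return the source of the first matching pattern, or None if nothing matches."""
    for pattern in DANGEROUS_PATTERNS:
        if pattern.search(command):
            return pattern.pattern
    return None


def handle_tool_call(payload: dict) -> int:
    """Return an exit code: 0 to allow the call, 2 to block it (assumed convention)."""
    if payload.get("tool_name") != "Bash":
        return 0
    command = payload.get("tool_input", {}).get("command", "")
    return 2 if check_command(command) else 0
```

Because the check is plain pattern matching on the command string, it fires the same way whether or not the soft rules in claude.md were followed.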

"Does the audit flag things like overly broad bash access or secrets in project context"

It's dynamic in terms of what prompts you give it during onboarding. Just like in plan mode in Claude Code, it will give suggestions and guide you down the path you want to go.

If you want a more extensive security audit you just need to prompt the onboard itself. At the end of the day it's a plugin, so the prompts and data you give it as context drive the output.

how are you handling the context-fills-up-and-rules-degrade scenario? built 6 hooks for evolving lite including one that auto-warns at 70% context and forces handoffs at 93%. but the gap between warning and handoff is where most sessions still lose quality. curious if you found a better trigger point.
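The two trigger points mentioned above reduce to a small threshold function. The 70% and 93% values are the ones quoted in the post; `context_action` and its return labels are hypothetical names for illustration.

```python
WARN_AT = 0.70     # warning threshold quoted in the post
HANDOFF_AT = 0.93  # forced-handoff threshold quoted in the post


def context_action(used_tokens: int, window_tokens: int) -> str:
    """Map current context usage to 'ok', 'warn', or 'handoff'."""
    if window_tokens <= 0:
        raise ValueError("window_tokens must be positive")
    usage = used_tokens / window_tokens
    if usage >= HANDOFF_AT:
        return "handoff"
    if usage >= WARN_AT:
        return "warn"
    return "ok"
```

Everything between the two constants is the gap the post describes: the session has been warned but nothing forces a handoff yet.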

I spent the last few weeks living inside Claude Code.

Not just using it. Building with it. Breaking it. Figuring out what actually makes it work well vs. what wastes your time.

The biggest lesson?

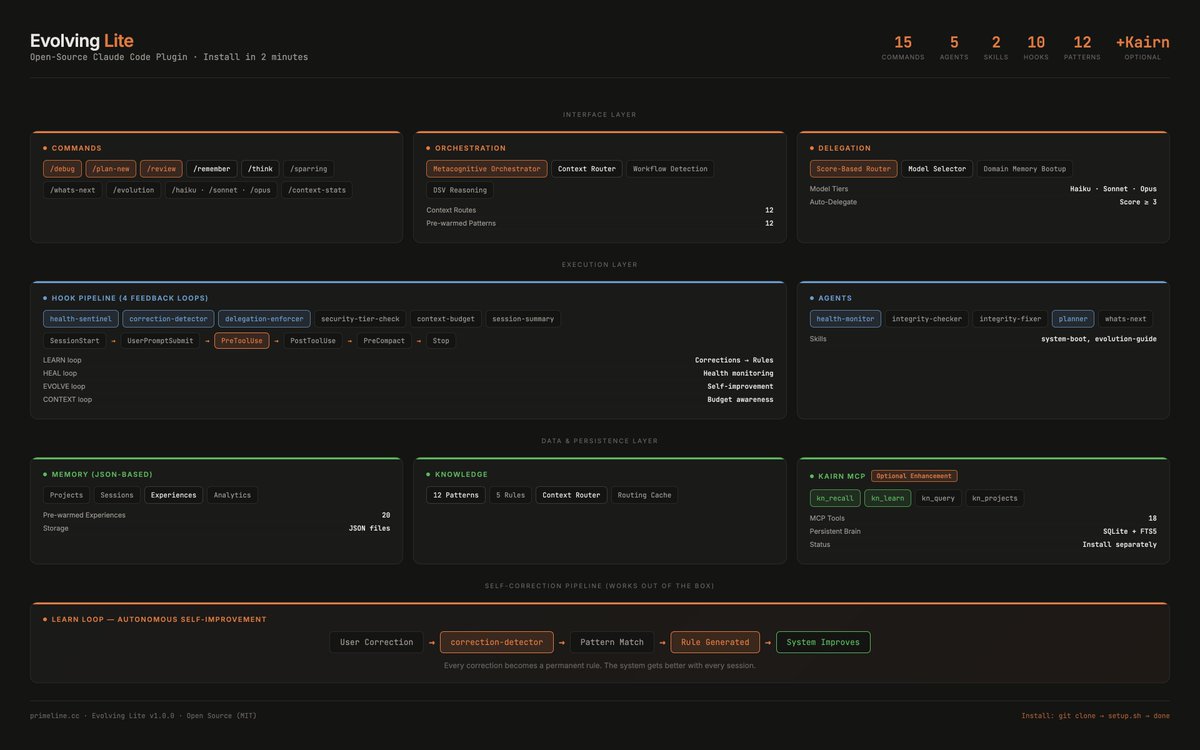

Claude Code isn't just a terminal chatbot. It's a 4-layer system:

→ CLAUDE.md - your project's persistent memory

→ Skills - knowledge packs Claude auto-invokes when relevant

→ Hooks - deterministic safety gates (100% enforced, not "suggestions")

→ Agents - subagents with their own context windows

Most engineers install it, type a prompt, do a few things here and there and wonder why the output is mid.

The difference between average and exceptional results comes down to setup:

→ Run /init on day one to generate your CLAUDE.md

→ Structure your .claude/ directory with skills, hooks, and permissions

→ Write descriptions that actually trigger the right skills at the right time

→ Use the memory hierarchy (global → project → subfolder) to scope context

→ Set up hooks for safety rules - because CLAUDE.md rules are ~70% followed, hooks are 100%

I put all of this into a single-page A4 cheatsheet.

14 sections. 3 columns. Everything from first install to the 4-layer architecture.

Not a command dump. A workflow guide:

◆ How to get started (install, /init, first session)

◆ How to write an effective CLAUDE.md (WHAT/WHY/HOW framework)

◆ Exact file structure for a fully configured project

◆ How to add and write skills that actually trigger correctly

◆ Setting up hooks and permissions in settings.json

◆ Daily workflow pattern (Plan Mode → Auto-Accept commit loop)

spent a year building the scaffolding layer that makes AI-generated code hold up - context routing, memory persistence, delegation rules.

4200+ sessions of feedback before it stopped falling apart.

open-sourced it as a claude code plugin so nobody has to start from zero: github.com/primeline-ai/e…

Sam Altman is right about one thing:

- Writing software used to be harder.

But there’s an assumption hidden in that statement:

- That because it’s easier now, engineers matter less.

It’s actually the opposite.

AI made it easier to write code. It did not make it easier to build robust software systems.

If anything, it made it easier to build fragile ones.

Today you can generate:

- API integrations

- User interfaces

- Backend data flows

- Entire features

In hours.

But what happens when:

- The same request is processed twice

- Data arrives incomplete or out of order

- A dependency fails halfway through

- Real users behave in unexpected ways

That’s where software breaks.

It's not about the code. It's about how the system is architected.

And that’s where engineering experience shows up.

Understanding failure modes.

Designing for edge cases.

Building systems that don’t collapse under real usage.

AI didn’t remove the need for engineers. It removed the barrier to writing code.

Which means more systems will be built. And more of them will need to be designed properly.

The engineers who can do that are not less important. They are more critical than ever.

the 2 hours on setup part is undersold. a CLAUDE.md that routes tasks by type, loads only relevant context per request, and defines delegation rules turns every follow-up prompt into a continuation, not a cold start. went from re-explaining everything each session to single-line prompts that trigger full workflows.

4 loops: LEARN (corrections), RECALL (memory), HEAL (self-repair), EVOLVE (usage tracking). zero config.

github.com/primeline-ai/e…

my AI system runs a 7-point health check on itself after every session.

broken edges? auto-repaired.

orphan nodes? cleaned up.

expired knowledge? archived.

3 safety levels decide what gets auto-fixed vs what needs my approval - the rest happens on its own.

one of 4 feedback loops in Evolving Lite. free, open source.
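Three of the checks named above (expired knowledge, broken edges, orphan nodes) can be sketched on a toy graph. This is an illustration under assumptions, not Evolving Lite's code: the `Graph` layout, the per-node expiry session, and "repair" meaning "drop the dangling edge" are all hypothetical, and the three safety levels are not modeled.

```python
from dataclasses import dataclass, field


@dataclass
class Graph:
    nodes: dict = field(default_factory=dict)    # node id -> expiry session (None = never)
    edges: list = field(default_factory=list)    # (source id, target id) pairs
    archive: dict = field(default_factory=dict)  # expired nodes end up here


def health_check(graph: Graph, session: int) -> dict:
    """Run three cleanup passes and report how many items each one touched."""
    report = {"expired": 0, "broken_edges": 0, "orphans": 0}

    # 1. Archive nodes whose expiry session has passed.
    for node, expiry in list(graph.nodes.items()):
        if expiry is not None and expiry < session:
            graph.archive[node] = graph.nodes.pop(node)
            report["expired"] += 1

    # 2. Drop edges that reference nodes which no longer exist.
    valid = [(a, b) for a, b in graph.edges if a in graph.nodes and b in graph.nodes]
    report["broken_edges"] = len(graph.edges) - len(valid)
    graph.edges = valid

    # 3. Remove nodes that are left without any edge.
    connected = {n for edge in graph.edges for n in edge}
    for node in [n for n in graph.nodes if n not in connected]:
        del graph.nodes[node]
        report["orphans"] += 1
    return report
```

The order matters in this sketch: archiving first can create broken edges, and dropping those can create orphans, so each pass cleans up after the previous one.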

@gotxhdiana @claudeai that's the part nobody warns you about. you ship something you spent months on and then watch strangers decide if it was worth it in 30 seconds.

@PrimeLineAI @claudeai open-source is like taking your heart to a swap meet and hoping for good trades

Our developer conference Code with Claude returns this spring, this time in San Francisco, London, and Tokyo.

Join us for a full day of workshops, demos, and 1:1 office hours with teams behind Claude.

Register to watch from anywhere or apply to attend: claude.com/code-with-clau…
