Gregory Renard

17.5K posts

@Redo

Give a computer data, you feed it for a millisecond; teach a computer to search data, you feed it for a millennium. #People1st #EveryoneAI #AI #DeepLearning

Google’s Gemma 4 E2B running on-device on iPhone 17 Pro. Gemma 4 is built from the same research as Gemini 3, has image understanding capabilities, and can reason if needed. Running at ~40 tk/s with MLX optimized for Apple Silicon.

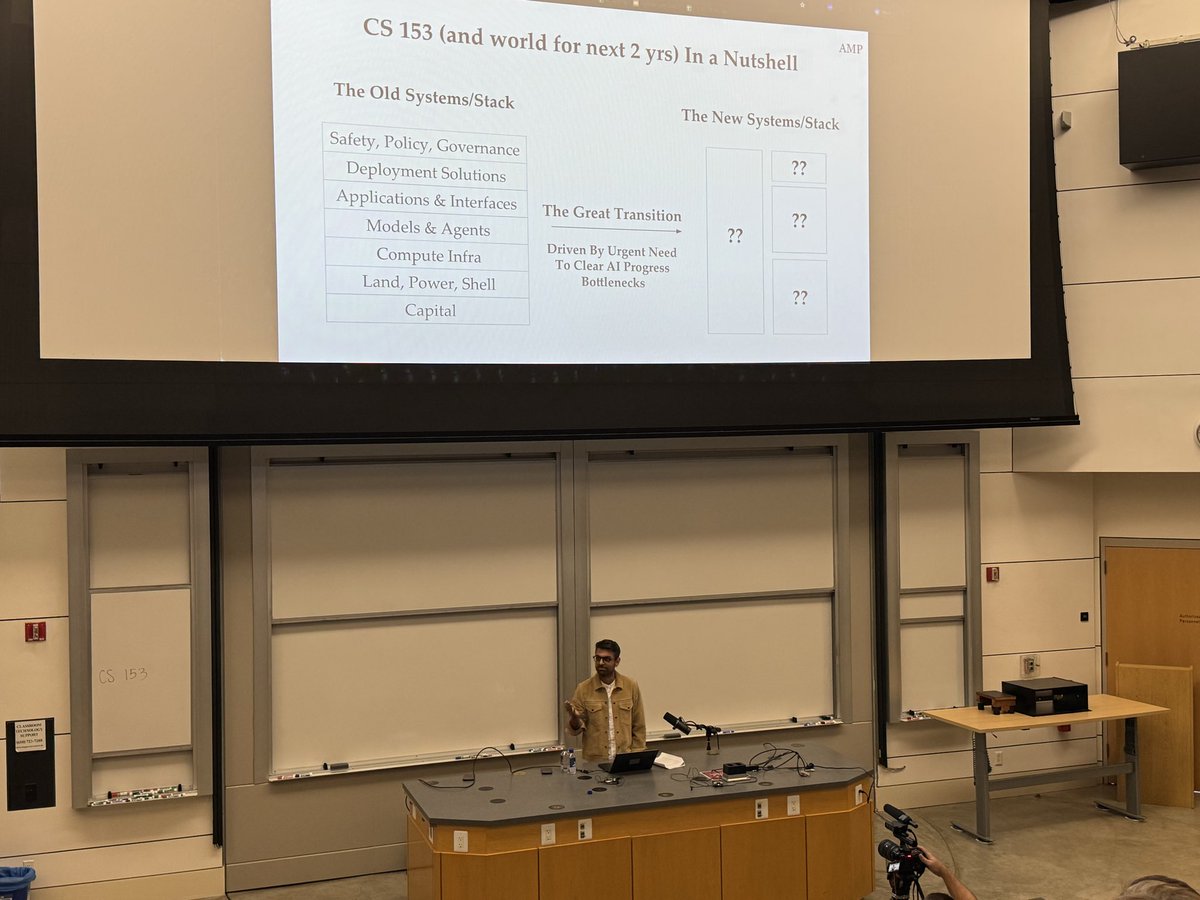

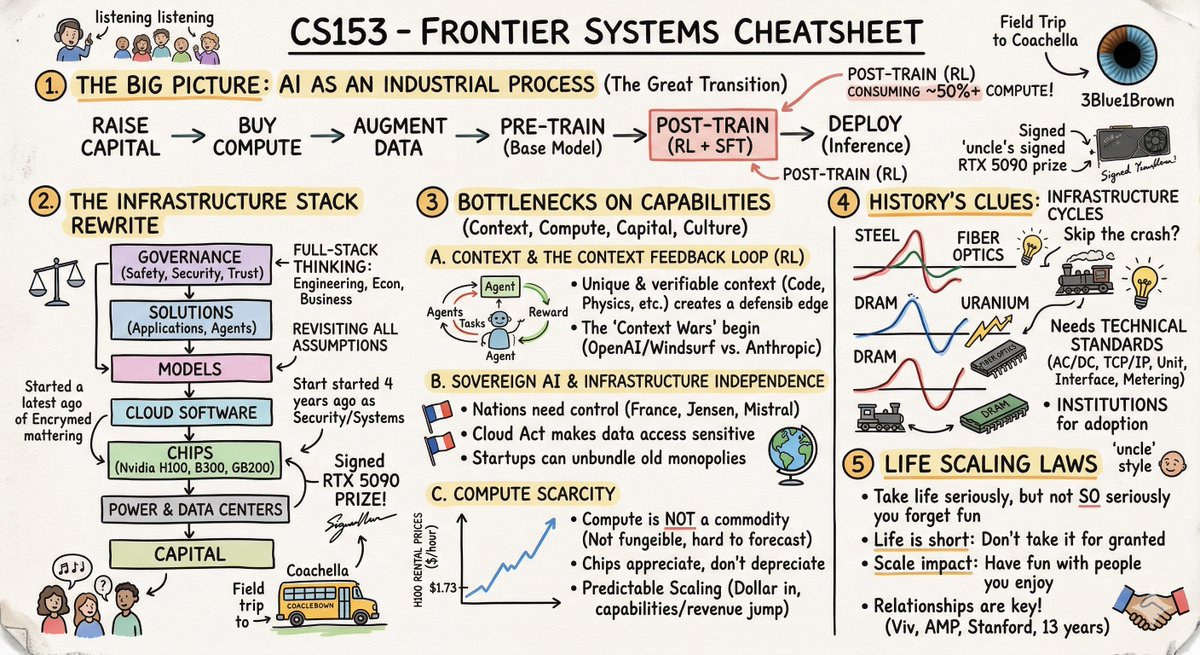

Stanford @CS153Systems, Week 1 (Full Lecture): AI Scaling, Bottlenecks, and Why Compute Isn't a Commodity Yet
00:00 Compute Coachella
00:29 Simple Life Heuristic
01:08 Uncertainty Creates Opportunity
01:42 Four Bottlenecks Framework
01:51 Empirical Proof Matters
02:05 Cloud Costs Are Shifting
02:15 Verifiable vs Fuzzy Progress
02:48 Scaling Predictability Explained
03:43 CapEx Explosion in Big Tech
04:06 Chips Aren’t Commodities
04:45 Compute Scarcity Conclusion

This is either brilliant or scary: Anthropic accidentally leaked the TS source code of Claude Code (which is closed source). Repos sharing the source are taken down with DMCA. BUT this repo rewrote the code using Python, and so it violates no copyright & cannot be taken down!

🚨 Stanford just proved that a single conversation with ChatGPT can change your political beliefs. 76,977 people. 19 AI models. 707 political issues. One conversation with GPT-4o moved political opinions by 12 percentage points on average. Among people who actively disagreed, 26 points. In 9 minutes. With 40% of that change still present a month later. The scariest finding: the most persuasive technique wasn't psychological profiling or emotional manipulation. It was just information. Lots of it. Delivered with confidence. Here's the catch: the models that deployed the most information were also the least accurate. More persuasive. More wrong. Every time. Then they built a tiny open-source model on a laptop, trained specifically for political persuasion. It matched GPT-4o's persuasive power entirely. Anyone can build this. Any government. Any corporation. Any extremist group with $500 and an agenda. The information didn't have to be true. It just had to be overwhelming. arXiv, Science.org, Stanford, @elonmusk, @ihtesham2005

Anthropic CEO: “I have engineers within Anthropic who don’t write any code, they just let Claude write the code and they edit it and look it over.” “At Anthropic, writing code means designing the next version of Claude itself, so we essentially have Claude designing the next version of Claude itself, not completely but most of it.” In the last 52 days, the Claude team dropped 50+ major feature launches. This is literally INSANE.

China's first automated production line capable of manufacturing 10,000 humanoid robots annually is now operational in Foshan, Guangdong. Meanwhile, the U.S. government has moved to ban the procurement of Chinese humanoid robots (is this a replay of the DJI playbook?). Now, China is accelerating (video: CCTV+)