DigitalRegIntel

3.8K posts

@RegIntelX

Regulatory supervision before enforcement. System of record for how regulatory risk was supervised — and when. Fintech - AI - Digital Assets | @RegIntelX

USA · Joined April 2025

298 Following · 141 Followers

Pinned Tweet

At some point, your next investor, bank partner, or regulator will ask:

"Show me how regulatory risk was supervised."

Not whether you're compliant today.

How it was supervised over time.

What was known.

How it applied.

What was done.

That question is coming.

We build the system of record for that moment.

FinCEN Just Turned AML Into a Decision-Record Problem open.substack.com/pub/digitalreg…

Most companies cannot prove this about their AI open.substack.com/pub/digitalreg…

Most companies think regulatory risk is about knowing the rules.

It's not.

When regulators investigate, they ask something different:

Who evaluated the risk?

When was the decision made?

What record exists showing supervision?

In an automated economy, compliance becomes one thing:

Proof a human was paying attention.

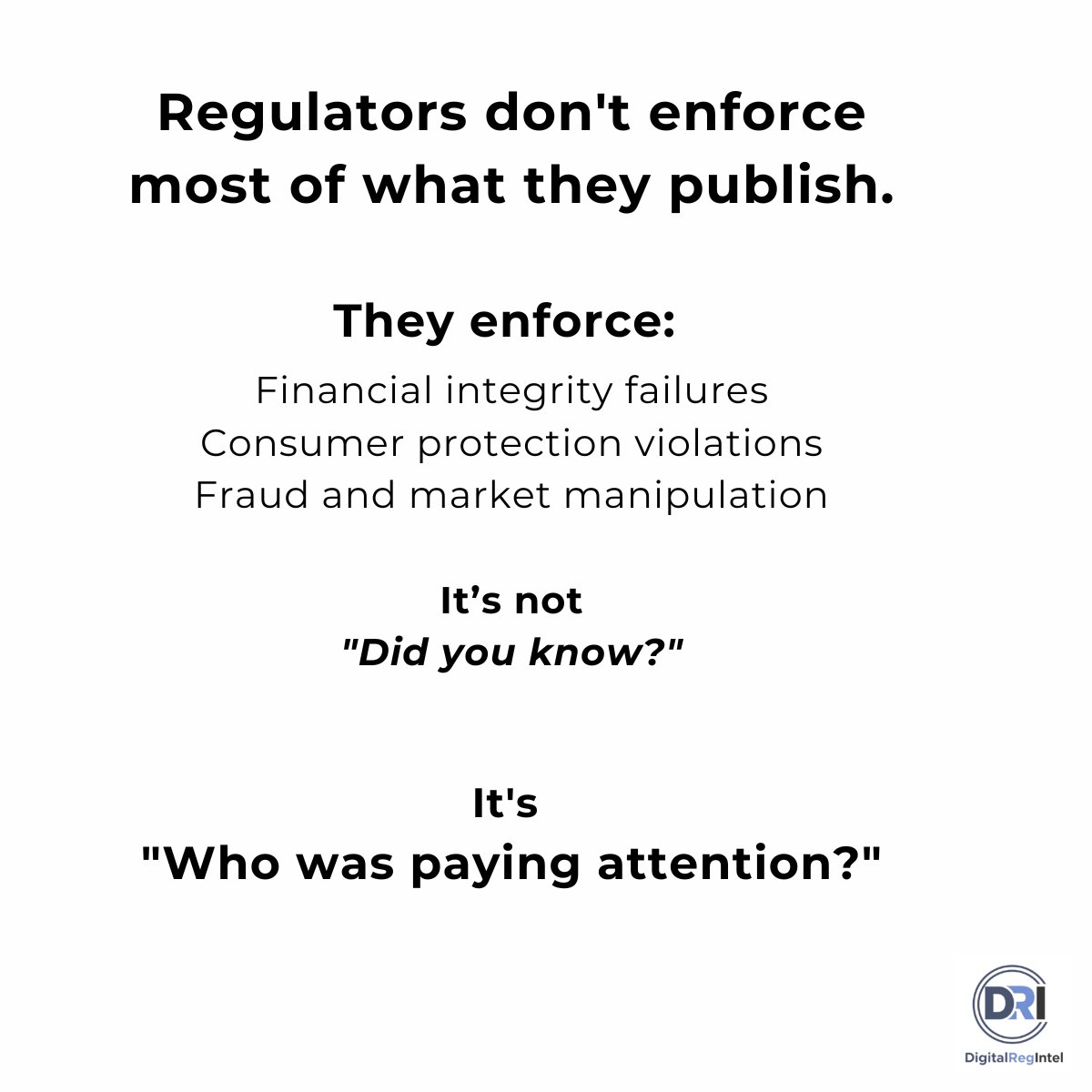

Everyone is obsessing over new AI regulation.

But regulators don't enforce most of what they publish.

Across the U.S. and Europe, enforcement keeps clustering around three things:

Fraud

Consumer harm

Financial integrity failures

Which leads to a much simpler question regulators eventually ask:

"Who was paying attention when the machines made the decision?"

@guilleflorvs @deel DigitalRegIntel: building the System of Record for autonomous agents, a Digital Regulatory Supervision Record that proves what you knew, when you knew it, and what you did about it.

🚨 BREAKING: I’m partnering with @deel to fund up to 10 founders with $1,000,000 🤑

Deel just launched The Pitch, a global startup tournament for the best founder in the world. The prizes:

• 10 startups will each receive a $1M SAFE

• 100 regional winners will each receive $50K SAFEs

• $15M+ total capital deployed

And we’re officially partnering with them to push this to the Market Fit audience 🔥

This isn’t a gimmick. It’s a real funding vehicle.

Here’s how it works:

1️⃣ Submit a 5-minute application

2️⃣ Top startups get invited to regional in-person finals

3️⃣ Regional winners get funded

4️⃣ Global finalists compete for $1M

Just product, team, and ambition.

I know how cracked my founder audience is. If you’re building something serious, this is asymmetric upside.

Don’t worry, we’ll be spotlighting strong applications from our side 😉

I’ll personally be backing and pushing the strongest applications.

Apply. Swing big. Let’s fund one of you!

Comment your startup’s one-liner and I’ll send you the link 🔥
