critikally correlated

@Rsquared1

perfectly aligned, your majesty

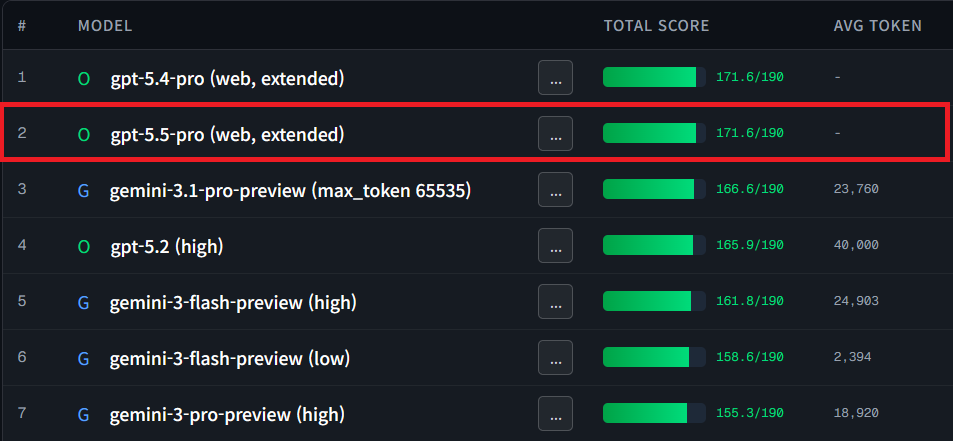

These are my KSST (Korean Sator Square Test) results for GPT-5.4 Pro (Extended, Web). Even though the benchmark is nearing saturation, it still took the lead by a fairly significant margin. I have not tested all of the other latest models due to cost constraints, but this is very impressive. In my test, the top-tier models now all get perfect scores on the quantitative items, and the remaining differences come from items graded by an LLM judge. Because of that, it may be more valuable for me to inspect the actual outputs myself than to look at the scores alone. A few of GPT-5.4 Pro's samples genuinely surprised me, and I would rate it as approaching the very top level of human performance. If this trend continues, the day may not be far off when models demonstrate superhuman ability on this task.

It's an M&A party! Anthropic is buying AI biotech startup Coefficient Bio for ~$400m. The team will join Anthropic's healthcare life sciences group, which develops tools for biotech workflows. w/ @srimuppidi theinformation.com/articles/anthr…