Abraham Mathews

[1/D] 🤔 What are drifting models really connected to?

📢 Our new paper, A Unified View of Drifting and Score-Based Models, shows that the bridge to score-based models is clear and precise (w/ team and @mittu1204, @StefanoErmon, @MoleiTaoMath)!

✍️ Main takeaway: drifting is more closely connected to score-based (diffusion) modeling than it may first appear!

🔗 arxiv.org/abs/2603.07514

🎯 Here’s why: Drifting’s mean-shift moves a sample toward the kernel-weighted average of nearby samples. The score function points toward regions of higher density. So both describe local directions that push samples toward where data is denser.

We show that this link is exact for Gaussian kernels (Section 4.1):

📌 Drifting’s mean-shift = a rescaled score-matching field between the Gaussian-smoothed data and model distributions, i.e. the vector field underlying score matching (Tweedie!).

📌 This also clarifies the bridge to Distribution Matching Distillation (DMD): both use score-based transport directions and differ only in how the score is realized. Drifting does so nonparametrically through kernel neighborhoods, whereas DMD relies on a pretrained diffusion teacher.

🤔 So what happens for the default Laplace kernel used in drifting models? Let’s look below 👇

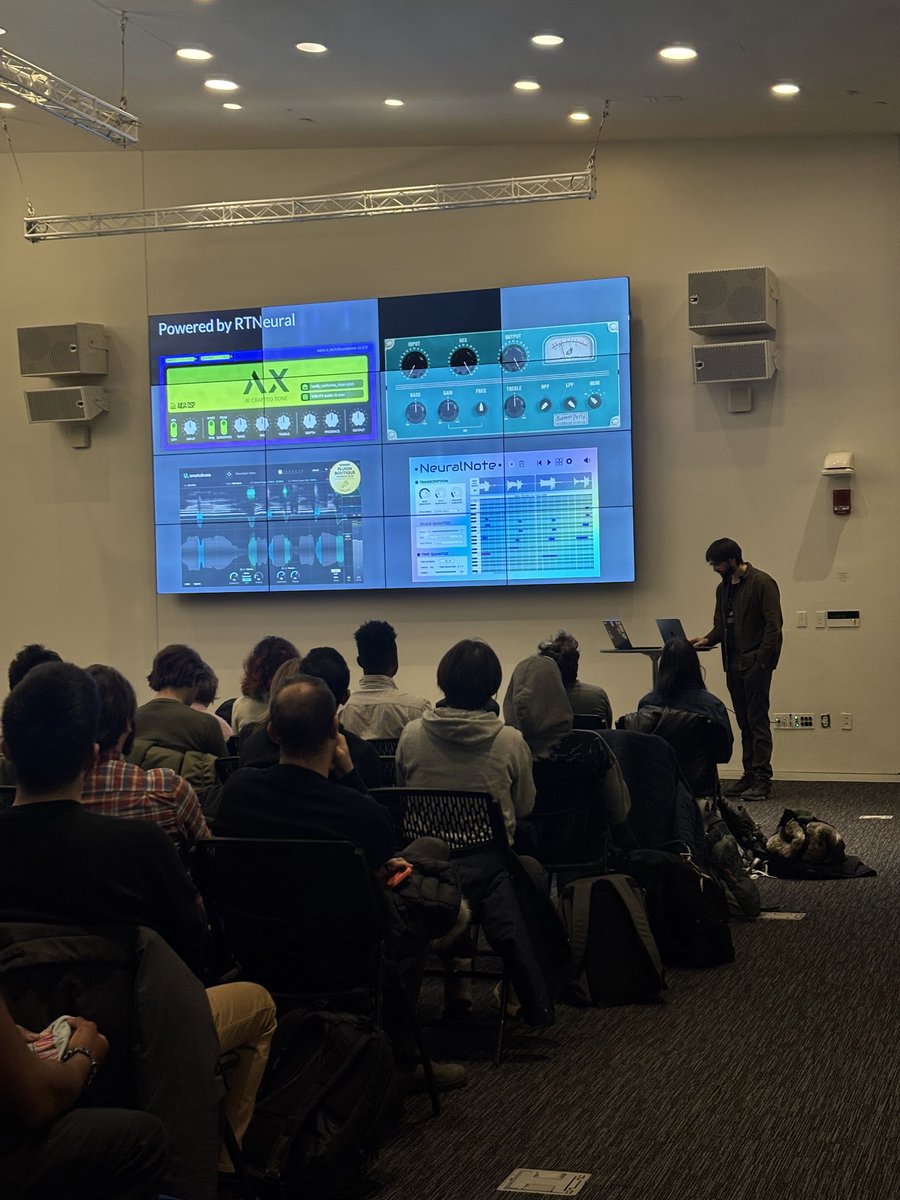
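To make the Gaussian-kernel claim in the post above concrete, here is a minimal numerical sketch, not code from the paper. It checks the standard identity that the Gaussian mean-shift vector at `x` equals `h**2` times the gradient of the log of a Gaussian kernel density estimate with bandwidth `h`. The function names, the bandwidth `h`, and the finite-difference check are all illustrative assumptions. The `drift` function additionally assumes that the drifting field is the mean-shift toward data samples minus the mean-shift toward model samples, which this thread does not spell out.

```python
import math

def gaussian_weights(x, samples, h):
    """Unnormalized Gaussian kernel weights exp(-||x - y||^2 / (2 h^2))."""
    return [
        math.exp(-sum((a - b) ** 2 for a, b in zip(x, y)) / (2 * h * h))
        for y in samples
    ]

def mean_shift(x, samples, h):
    """Kernel-weighted average of the samples, minus x."""
    w = gaussian_weights(x, samples, h)
    total = sum(w)
    mean = [
        sum(wi * y[k] for wi, y in zip(w, samples)) / total
        for k in range(len(x))
    ]
    return [m - a for m, a in zip(mean, x)]

def log_kde(x, samples, h):
    """Log of the Gaussian KDE at x, up to a constant that does not depend on x."""
    return math.log(sum(gaussian_weights(x, samples, h)))

def kde_score(x, samples, h, eps=1e-5):
    """Score of the smoothed density, via central finite differences of log_kde."""
    grad = []
    for k in range(len(x)):
        xp, xm = list(x), list(x)
        xp[k] += eps
        xm[k] -= eps
        grad.append((log_kde(xp, samples, h) - log_kde(xm, samples, h)) / (2 * eps))
    return grad

def drift(x, data, model, h):
    """ASSUMPTION: drift = mean-shift toward data minus mean-shift toward model samples."""
    toward_data = mean_shift(x, data, h)
    toward_model = mean_shift(x, model, h)
    return [d - m for d, m in zip(toward_data, toward_model)]
```

Under these assumptions, `mean_shift(x, data, h)` should match `h**2 * kde_score(x, data, h)` up to finite-difference error, and `drift` should match `h**2` times the difference of the two smoothed scores. The identity relies on the Gaussian form of the kernel, so the check says nothing about the Laplace kernel case the thread goes on to discuss.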

The winter storm won't stop us. The next Boston AI Music Meetup is now happening this Wed, Feb 25th at MIT Media Lab. Looking forward to talks from two awesome speakers: Jatin Chowdhury and Ethan Manilow (@ethanmanilow). RSVP at the link in the comments, we have limited spots.

1/ AxiomProver got 12/12 on Putnam 2025. Today we release the Lean proofs AxiomProver generated autonomously. We also provide our take on the problems, proof visualizations, and a comparison of how humans and AI approach them differently. Tons of fun math and Lean! Our findings in thread.