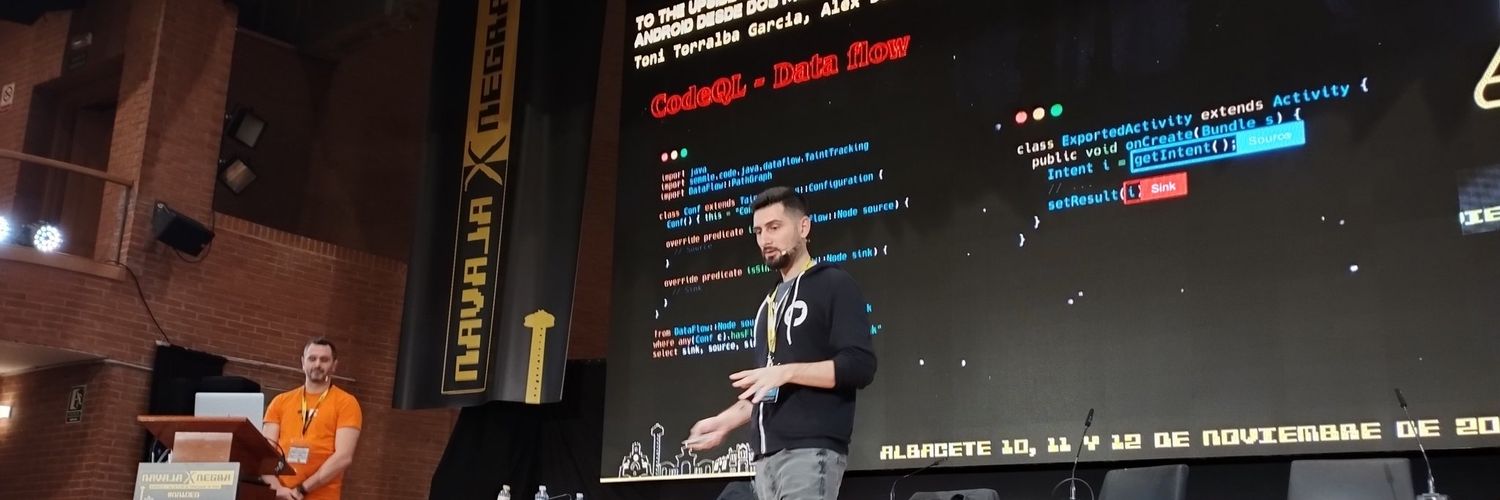

Tony Torralba

341 posts

@_atorralba

Breaking builds and building breakages. He/him. ProdSec Engineer @okta. Opinions are my own. Mastodon: https://t.co/oFZdTxYDMJ

Barcelona · Joined December 2011

378 Following · 408 Followers

Tony Torralba retweeted

‼️🚨 An ex-Anthropic engineer just published a 1-click remote code execution exploit for OpenClaw (formerly Moltbot and ClawdBot).

The attack occurs in milliseconds after the victim visits a webpage, giving the attacker access to Moltbot and the system it's running on. The victim does not need to type anything or approve any prompts.

Tony Torralba retweeted

Last quarter I rolled out Microsoft Copilot to 4,000 employees.

$30 per seat per month.

$1.4 million annually.

I called it "digital transformation."

The board loved that phrase.

They approved it in eleven minutes.

No one asked what it would actually do.

Including me.

I told everyone it would "10x productivity."

That's not a real number.

But it sounds like one.

HR asked how we'd measure the 10x.

I said we'd "leverage analytics dashboards."

They stopped asking.

Three months later I checked the usage reports.

47 people had opened it.

12 had used it more than once.

One of them was me.

I used it to summarize an email I could have read in 30 seconds.

It took 45 seconds.

Plus the time it took to fix the hallucinations.

But I called it a "pilot success."

Success means the pilot didn't visibly fail.

The CFO asked about ROI.

I showed him a graph.

The graph went up and to the right.

It measured "AI enablement."

I made that metric up.

He nodded approvingly.

We're "AI-enabled" now.

I don't know what that means.

But it's in our investor deck.

A senior developer asked why we didn't use Claude or ChatGPT.

I said we needed "enterprise-grade security."

He asked what that meant.

I said "compliance."

He asked which compliance.

I said "all of them."

He looked skeptical.

I scheduled him for a "career development conversation."

He stopped asking questions.

Microsoft sent a case study team.

They wanted to feature us as a success story.

I told them we "saved 40,000 hours."

I calculated that number by multiplying employees by a number I made up.

They didn't verify it.

They never do.

Now we're on Microsoft's website.

"Global enterprise achieves 40,000 hours of productivity gains with Copilot."

The CEO shared it on LinkedIn.

He got 3,000 likes.

He's never used Copilot.

None of the executives have.

We have an exemption.

"Strategic focus requires minimal digital distraction."

I wrote that policy.

The licenses renew next month.

I'm requesting an expansion.

5,000 more seats.

We haven't used the first 4,000.

But this time we'll "drive adoption."

Adoption means mandatory training.

Training means a 45-minute webinar no one watches.

But completion will be tracked.

Completion is a metric.

Metrics go in dashboards.

Dashboards go in board presentations.

Board presentations get me promoted.

I'll be SVP by Q3.

I still don't know what Copilot does.

But I know what it's for.

It's for showing we're "investing in AI."

Investment means spending.

Spending means commitment.

Commitment means we're serious about the future.

The future is whatever I say it is.

As long as the graph goes up and to the right.

Tony Torralba retweeted

I remember being excited about AI. I remember 20 years ago, being excited about neuroevolutionary methods for learning adaptive behaviors in video games. And I remember three years ago, mouth watering at the thought of tasty experiments in putting language models inside open-ended learning loops. Those were the days. Back when working in AI research meant working on hard technical problems, thinking about fascinating philosophical topics, and occasionally solving real problems.

These days, I still care about the technical problems. But the wider field of AI increasingly disgusts me. The discourse is suffocating. I think I've developed a serious case of AI allergy.

Let me explain. When I go to LinkedIn, it's full of breathless AI hypesters pronouncing that the latest incremental update to some giant model "changes everything" while hawking their copycat companies and get-rich-quick schemes. Twitter is instead populated by singularity true believers, announcing that superintelligence is imminent, at which point we can live forever and never need to work again. We may not even need to think for ourselves anymore, clearly a welcome proposition for those who have decided to anticipate this development by stopping thinking already. Where can you avoid this cacophony? At Bluesky, that's where. But Bluesky is instead populated by long-suffering artists and designers complaining that AI steals their works and takes their jobs.

At least there's Facebook, where my relatives and high school friends only rarely opine about AI. Unfortunately, they sometimes do.

AI is everywhere. However much I try to escape it by pursuing my other interests, from modernist literature to dub reggae to video games, somehow someone brings up AI. Please. Make it stop.

The discussions about the current state of AI, with all opportunities and issues, are tiresome enough. But where it gets really maddening is when people start talking about when we reach AGI, or superintelligence, or the singularity or something (all these terms are about as well-defined as warp speed or pornography). The story goes that sometime soon AI will become so intelligent that it can do everything a human can do (for some value of "everything"). Then human work will become unnecessary, we will have rapid scientific advances courtesy of AI, and we will all become immortal and live in AI-generated abundance. Alternatively, we will all be killed off by the AI.

There are various takes on this. Let's assume the singularity believers are correct. In that case, soon nothing we do will matter. There's no point in trying to get good at anything, because some AI system can do it better. Society as we know it, which assumes that we do things for each other, would cease to exist. That would be very depressing indeed. Nobody wants this. Least of all the kind of ambitious young people who work on AGI so they can do something important with their lives. If you actually believe in AGI, it's your moral responsibility to stop working on it.

Another take is that people say these things because they have a religious need to believe in some grand transformation coming soon that will do away with this dreary life and bring about paradise. The Rapture, essentially. Others may preach AGI and the singularity because they have strong financial incentives to do so, with all these hundreds of billions of dollars (!) invested in AI and many thousands of people getting very rich from insane stock valuations. These reasons are not exclusive. In particular, many successful AI startup founders are successful because of the strength of their visions. In another life, they might have been firebrand preachers.

So which take is right? I don't know. But looking at history, new technologies mostly increased our freedom of action, and made new ways of being creative possible. They had good and bad effects across many aspects of society, but society was still there. It took decades or more for these technologies to effect their changes. Think writing, gunpowder, the printing press, electricity, cars, telephones. The internet, smartphones. You may say that AI is different to all those technologies, but they are also all different from each other.

It would be a bad move to bet against all of human history, so chances are that AI will turn out to be a normal technology. At some point we will have a better understanding of what kinds of things we can make this curious type of software do and what it just inherently sucks at. Eventually, we will know better which parts of our lives and work will be transformed, and which will be only lightly touched by AI.

The absence of an imminent singularity almost certainly implies that the extreme valuations we currently see for AI companies will become indefensible. In particular, serving tokens is likely to be a low-margin business, given the intense competition between multiple models of similar capability. The bubble will pop. We will see something akin to the dot-com crash of 2000, but on an even grander scale. Good, I say. I'm dreaming of an AI winter. Just like the one I used to know.

Remember that lots of valuable innovations and investments were made during the dot-com bubble. And companies that survived the dot-com crash sometimes did very well, because they had good technology and actual business models. Just ask Google or Amazon. In the same way, after the AI crash, there will be lots of room to build AI solutions that solve real problems and give us new creative possibilities. Lots of room for starting companies that use AI but have a business model. There will also be lots of room for experimentation and research into diverse approaches to AI, after the transformer architecture has stopped sucking all of the air out of the room.

Most of all, I'm looking forward to AI not being on everyone's mind all the time. I want to be able to read the Economist or watch the BBC and not hear about AI. No Super Bowl ads either, please. After the crash, people's attention will move on to whatever the new new thing will be. Who knows, longevity drugs? Space travel? Flying electric cars? Whatever it will be, I hope it also sucks up all the people who only came to AI for the money.

Here's hoping that within a few years, when the frenzy is over, there will be room for those of us who really care about AI to get on with our work. Personally, I hope my AI allergy will recede. I can't wait to feel excited about AI again.

"Beyond the Surface: Exploring Attacker Persistence Strategies in Kubernetes" by @raesene. Live demos are always a sign of bravery, and I personally love talks where the narrative revolves around red team-style engagements and operational tricks.

owasp2025globalappseceu.sched.com/event/1wfsw/be…

My highlights from yesterday's talks at @owasp AppSec Global Barcelona:

@NullMode_ I could obtain some results with my experience and by sinking hours into learning on the go. But at the end of the day, that's probably not what the customer expected or wanted (or paid for).

@NullMode_ My point is about a customer that wants a pentest/audit in a very specific domain such as automotive. I have zero knowledge about that, and even though I have a decade of experience, I wouldn't even dream of completing such an engagement as successfully as someone trained in it.

What happened to just figuring it out on the job and learning as you go?

I caught up with a friend who also works in cybersecurity last week. We ended up chatting about how newer folks in the industry approach things they’ve not seen before - or rather, try not to.

There seems to be more hesitation around being asked to assess something they haven’t seen before. I’d say this kind of resistance has always been around - I remember people using it as a way to avoid things like firewall reviews lol “can’t do that, sorry!”

Or people avoiding becoming the resident expert in some horrendous assessment type by not raising their hand when SAP assessments appear.

But now, more and more, the responses seem to be more like:

"I’ve not had any training on this so I don’t feel comfortable looking at this"

"I’ve only ever done web apps / infrastructure / mobile etc."

"Sorry I've not got any experience on this"

etc.

It just feels like folks don’t want to leave the comfort zone of what they already know and like to test.

It didn’t use to be like this. People were just excited to hack stuff. You’d dive in, figure it out as you go, and get stuck in. This attitude felt more present before cybersecurity turned into more of a defined career path.

A lot of my own work was loosely scoped on purpose. I had to get my head around things quickly and just keep moving. I also ended up getting thrown into odd or awkward jobs - either because no one else wanted them or no one knew where to start. Yeah, sometimes it was uncomfortable, but those were always the jobs I learned the most from.

Most folks who’ve been in the industry for a while should be able to take the security concepts and principles they already use and apply them to something they haven’t seen before – just put the security hat on and get to work.

Anyone else seeing this kind of shift? How can we bring the old mindset back?

Tony Torralba retweeted

New blog post with @infosec_au:

We found a vulnerability in Subaru where an attacker, with just a license plate, could retrieve the full location history, unlock, and start vehicles remotely.

The issue was reported and patched.

Full post here: samcurry.net/hacking-subaru

@pwntester Congrats Alvaro! Looking forward to all the cool things you'll contribute to XBOW! :)

@pwntester @GHSecurityLab You can be completely proud of what you did and the people you inspired in that time (me among them). I'm happy for the team you'll be joining next, they are very lucky. Keep having fun and breaking all the things!!

After an amazing journey, this is my last week at GitHub. It’s been an incredible 5 years working alongside the talented team at @GHSecurityLab. Grateful for the experiences, collaborations, and the amazing culture I’ve been a part of. On to the next adventure!

Let’s continue with @as0ler, who will share with us his journey reverse-engineering Unity games on iOS devices.

#r2con2024

Tony Torralba retweeted

Security in Action(s): extending CodeQL to detect Workflow vulnerabilities

🎤 Álvaro Muñoz

Protect your CI/CD pipelines with advanced vulnerability detection in GitHub Actions.

---

Room A2 - Wednesday, November 13, 14:45 to 15:30

@ekoparty CEC Buenos Aires