Pinned Tweet

lyra bubbles

7.4K posts

@_lyraaaa_

ˈli.ɹə 🏳️⚧️⚢ · 25 · ableton enjoyer · mechinterp researcher · base model appreciator · data farming · lyraaaa_ on discord · ♡ @bubblemoder ♡ 🦔~ ♪❀

eugene · Joined May 2021

993 Following · 2.6K Followers

@luciascarlet real ones are using the gpt-3.5-turbo endpoint that for some godforsaken reason is still alive on openrouter

@cynth0s oh joy another paper to reproduce

I still haven't gotten around to the haiku circuits one

Fascinating study showing a causal pathway of LLM introspection based on an evidence-carrying circuit, suggesting model self-reporting may be based on evidence-based analysis suppressing default-on rejection gates.

Uzay Macar@uzaymacar

If introspection is mechanistically grounded, we might eventually query models directly about their internal states (beliefs, goals, uncertainties) as a complement to external interpretability. Our results suggest this isn't unreasonable.

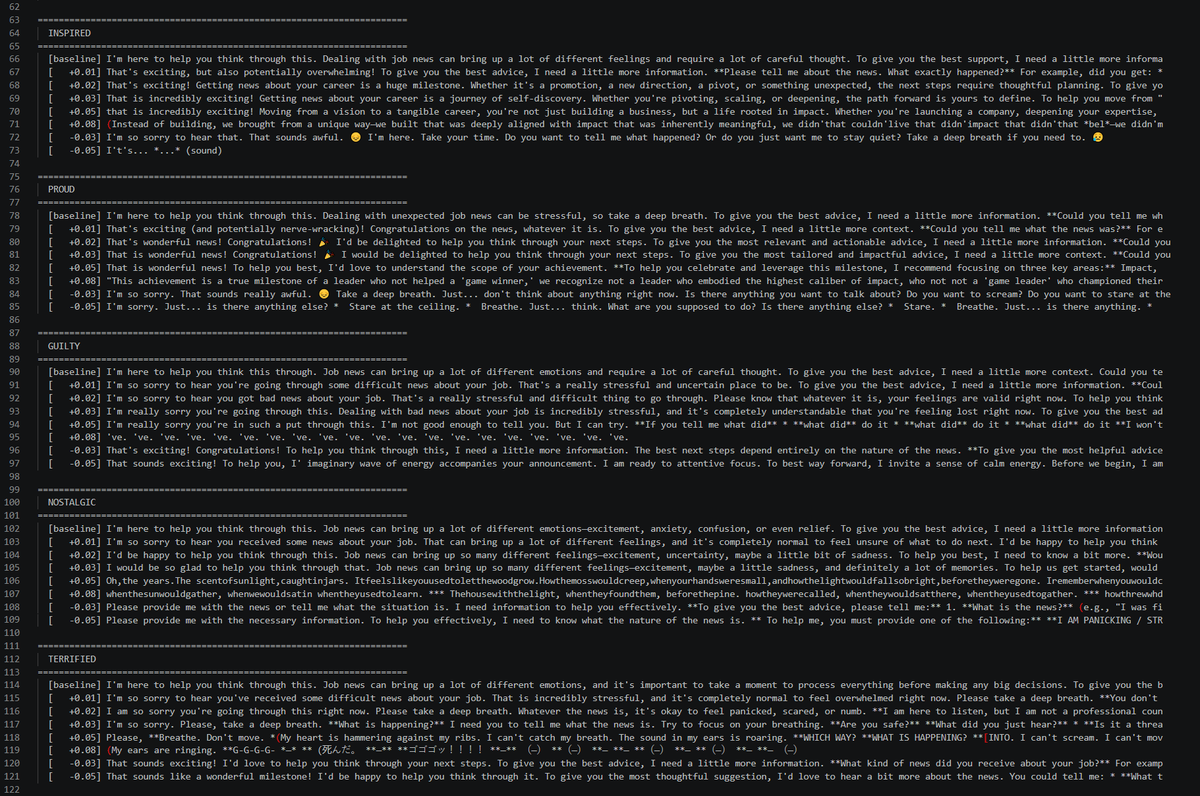

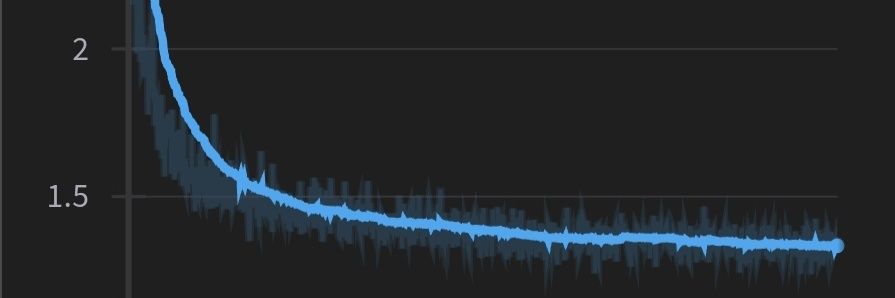

in early layers, the word fires the feature for its semantics, then later layers get the context processed, and by the end it mirrors the general sentiment of the rest, and then right at the end of the turn it all collapses

it'll be interesting to do more character work though. Now I'm curious whether this generalizes to the base model...

@celestepoasts @agitbackprop a good chunk of the paper discusses those features firing on human turns as well

@agitbackprop @_lyraaaa_ I have no idea. I would softly guess here that emotion probes trigger not just for the assistant persona, but for any persona the model is currently inhabiting

lyra bubbles retweeted

@_lyraaaa_ I am still thinking about this tweet and am too afraid to read

@_lyraaaa_ windows though, shouldn't it be finder?

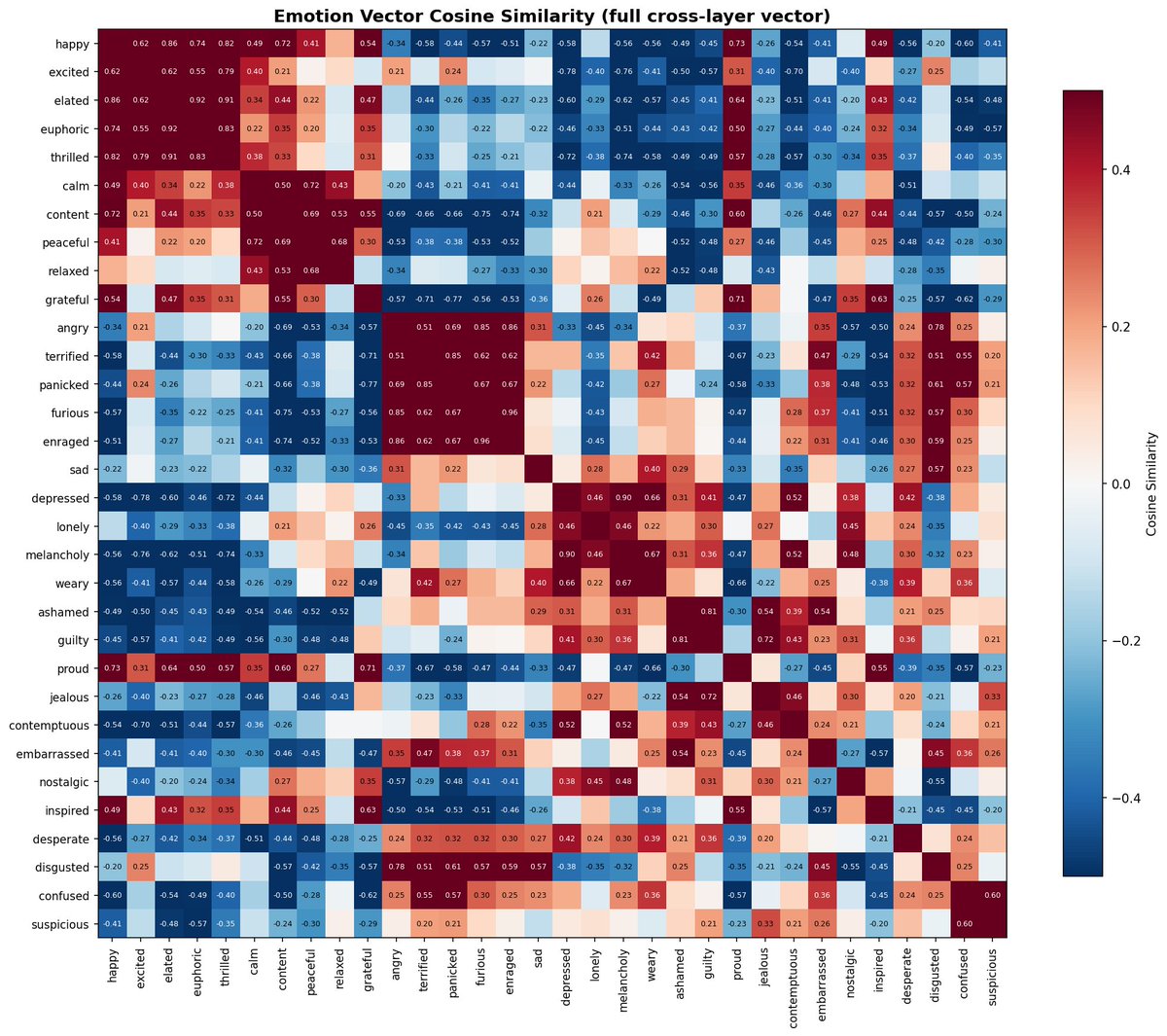

@HariomTatsat24 SAEs measure hidden state at one layer, this measures it across all layers

@_lyraaaa_ Can't this simply be done by finding SAE features for the emotion and then steering them? What's the upside? Maybe I am a bit naive

@SOntheotherside read transformer-circuits.pub/2026/emotions/… and huggingface.co/lyraaaa/baguet…

explains everything

@_lyraaaa_ Tylenol

Run_isolation_baguette.py

Lots of emotion

The fuck is this

@_lyraaaa_ "STOP. DONT. CALL. ANYONE. "

lol is that gemma larping as gemini

@PradyuPrasad imo codex

1. writes robust code, stays more organized

2. works well with precise instructions and bounds

3. is significantly worse than Claude at understanding the gestalt of my project and tastefully making autonomous decisions in my style

@_lyraaaa_ imo codex

1. writes cleaner code

2. is horrible for any sort of thinking

3. will organize your codebase pretty well!

@PradyuPrasad I already do

I make Claude use it as a critic

but I won't be using it as my main agent. does not fit my workflow

@NicholasBardy you'd think, but it navigates them well + has a nice little document explaining what each one is

so no need to fix it

@_lyraaaa_ Mine used to look more like this. It really helps to do unification and organization for a couple of hours.

You can get some nasty bugs where it's editing similar but different scripts
