Gijs

@datagobes

Senior cloud data & AI engineer. Building https://t.co/SGOypsko6x in public — AI-native tools, MDX blogs, and things that probably shouldn't work but do.

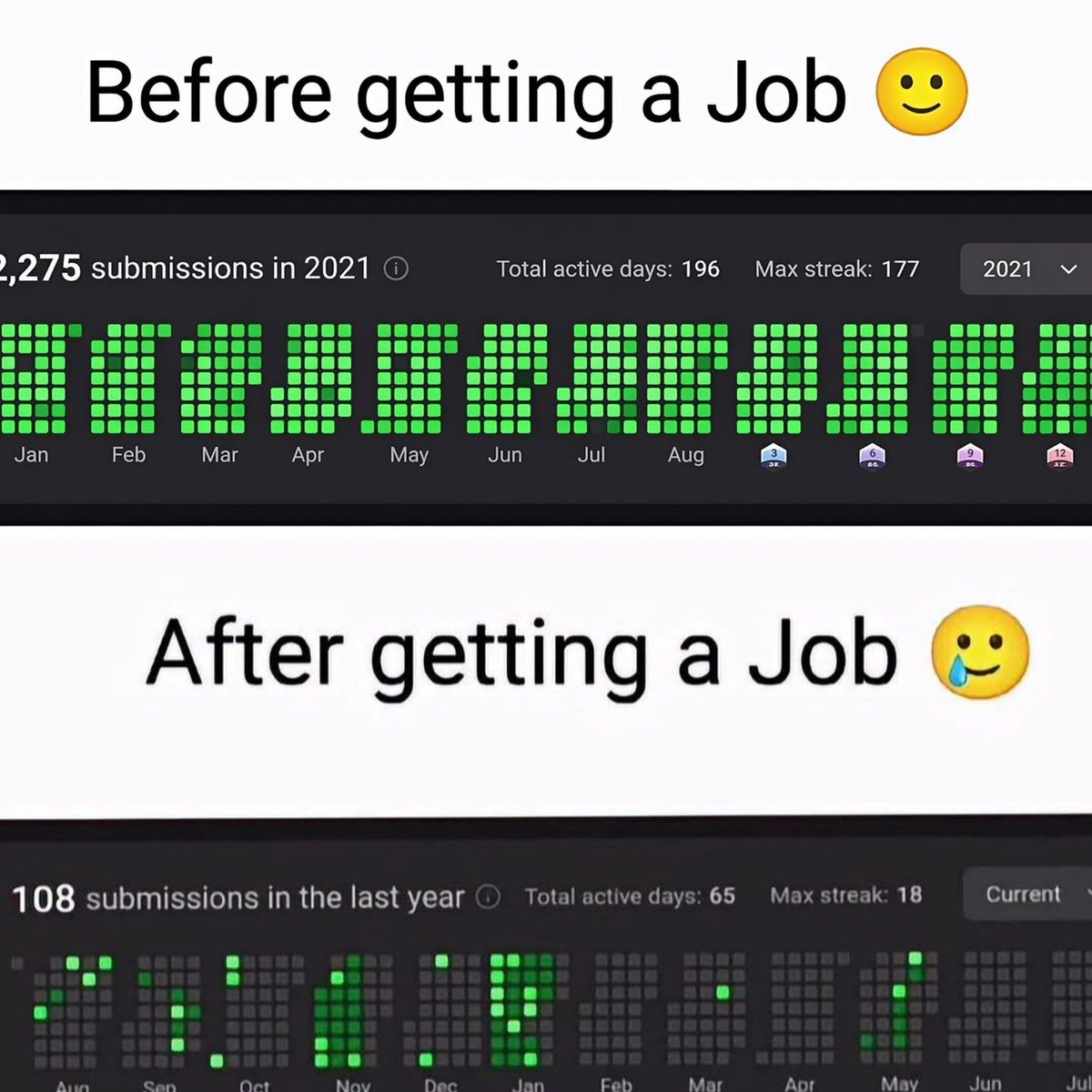

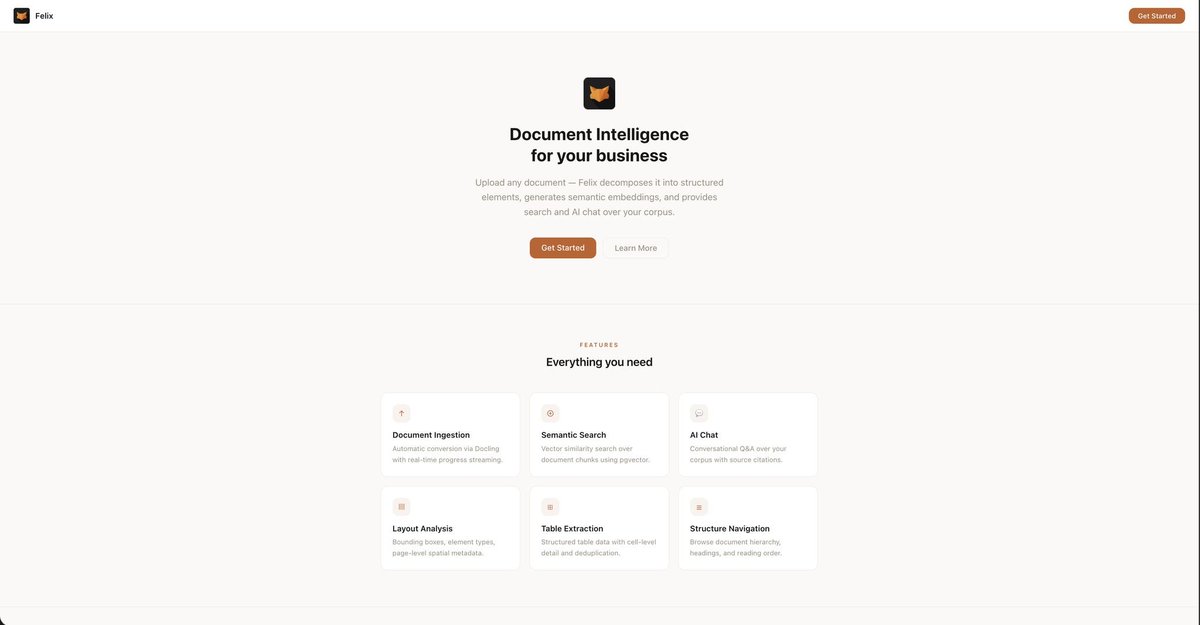

Andrej is right. Processing PDFs is hard, yet a lot of knowledge is captured in them. I focus on using AI in a corporate setting, where document intelligence is a real challenge. I ran into this problem when I first started designing an agent that could design agentic systems: I wanted to put all the major papers in a knowledge base, and it didn't work out. That changed when I created Felix, my document intelligence project.

Felix decomposes any business document into typed elements, stores them in Postgres, and can reconstruct the full document from them. Now that Andrej has dropped his llm-wiki idea, I've added an MCP layer on top so I can use Felix for llm-wiki generation. I will post the wiki I generate from the Mythos document on GitHub later. (Claude happened to go down the moment I was generating it.)

See the screenshots for a UI view on the Felix API. It shows the document Andrej mentions here, decomposed and served from Postgres.

I am not going to tell you to reply FELIX and follow me to get some nonsense prompt and a 199 offer for a crappy workshop. But I am not going to stop you from letting me know you think this is interesting either. If this gets some engagement, I will package it and put it on GitHub.
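The post doesn't show Felix's schema or code. What follows is only a minimal sketch of the general "decompose into typed elements, store, reconstruct" idea, assuming a single table of ordered, typed elements per document. SQLite from the Python standard library stands in for Postgres so the example runs on its own. `Element`, `store`, `reconstruct`, and the element kinds are hypothetical names, not Felix's API.

```python
import sqlite3
from dataclasses import dataclass


# Hypothetical element model; Felix's real type system is not described in the post.
@dataclass
class Element:
    doc_id: str
    position: int  # order of the element within the source document
    kind: str      # e.g. "heading", "paragraph", "table" (assumed kinds)
    content: str


def store(conn: sqlite3.Connection, elements: list[Element]) -> None:
    """Persist typed elements in one table keyed by (doc_id, position)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS elements ("
        "doc_id TEXT, position INTEGER, kind TEXT, content TEXT, "
        "PRIMARY KEY (doc_id, position))"
    )
    conn.executemany(
        "INSERT INTO elements VALUES (?, ?, ?, ?)",
        [(e.doc_id, e.position, e.kind, e.content) for e in elements],
    )
    conn.commit()


def reconstruct(conn: sqlite3.Connection, doc_id: str) -> str:
    """Rebuild a document by reading its elements back in their original order."""
    rows = conn.execute(
        "SELECT kind, content FROM elements WHERE doc_id = ? ORDER BY position",
        (doc_id,),
    ).fetchall()
    parts = []
    for kind, content in rows:
        # Headings are rendered as Markdown headings; other kinds are emitted verbatim.
        parts.append(f"# {content}" if kind == "heading" else content)
    return "\n\n".join(parts)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    # Elements can arrive in any order; `position` restores the reading order.
    store(conn, [
        Element("doc1", 1, "paragraph", "Body text."),
        Element("doc1", 0, "heading", "Introduction"),
    ])
    print(reconstruct(conn, "doc1"))
```

Keeping an explicit `position` per element is what makes lossless reconstruction possible in this sketch: the elements can be queried individually (for a knowledge base or wiki) and still be reassembled into the full document.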