Pulkit

166 posts

Pulkit retweeted

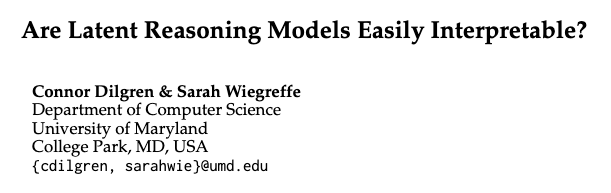

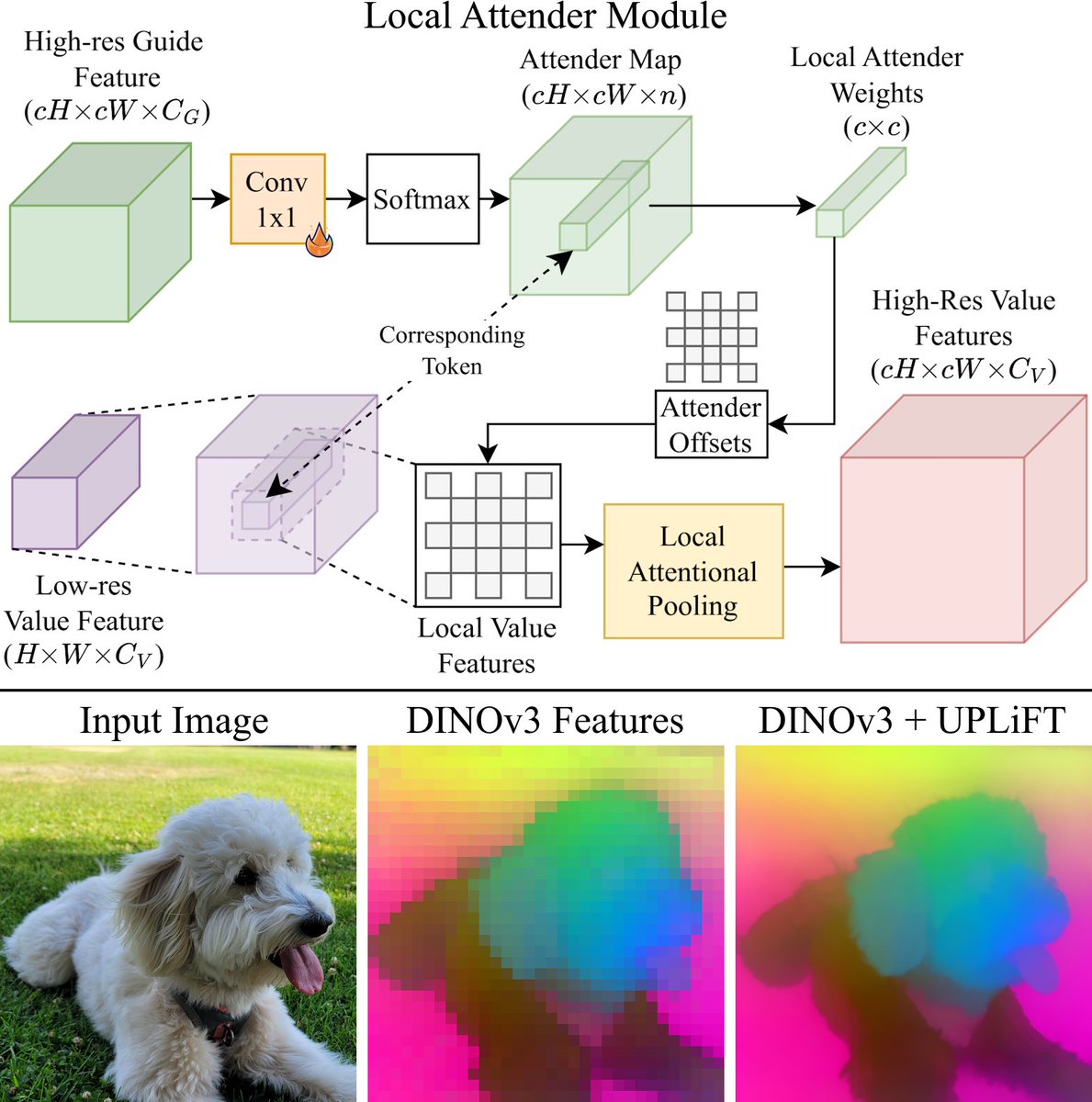

Excited to announce that UPLiFT has been accepted to #CVPR2026!

You can also try out UPLiFT right now to extract pixel-dense DINOv3 features with our pretrained models linked below!

Code: github.com/mwalmer-umd/UP…

Paper: arxiv.org/abs/2601.17950

Website: cs.umd.edu/~mwalmer/uplif…

Pulkit retweeted

We’re excited to announce UPLiFT, our lightweight, pixel-dense feature upsampler. UPLiFT boosts feature density, preserves semantics, and has better efficiency scaling than recent SOTA methods. See all links in the thread below.

Coauthors: @_sakshams_ @AnirudAgg @abhi2610

🧵[1/6]

Pulkit retweeted

🚨 PhD Opening: 3D/4D SLAM & Inverse Rendering for Endoscopy (NIH funded) at @UNC_CS! 🚨

The Project:

🟩 Ideal for students with a strong background in 3D Computer Vision & interested in medical robotics & visualization.

🟩 Highly collaborative work with roboticists, medical imaging researchers, and clinicians (GI, ENT, Pulmonology).

🟩 Aim to publish in top-tier CV/ML/Robotics/Medical Imaging venues.

🟩 Build on our prior works:

1⃣ Endoscopy depth estimator: PPSNet [ECCV'24 - ppsnet.github.io]

2⃣ 3D SLAM for endoscopy: NFL-BA [NeurIPS'25 - asdunnbe.github.io/NFL-BA/]

Apply now! 📧 Email CV to ronisen@cs.unc.edu 📝 Formal UNC CS application required (Deadline soon!)

RTs appreciated! 🙏

Aloha #ICCV2025! 🌺

Join @ShuaiyiH, @abhi2610 and me today (21 Oct) at poster #334, 11 AM–1 PM!

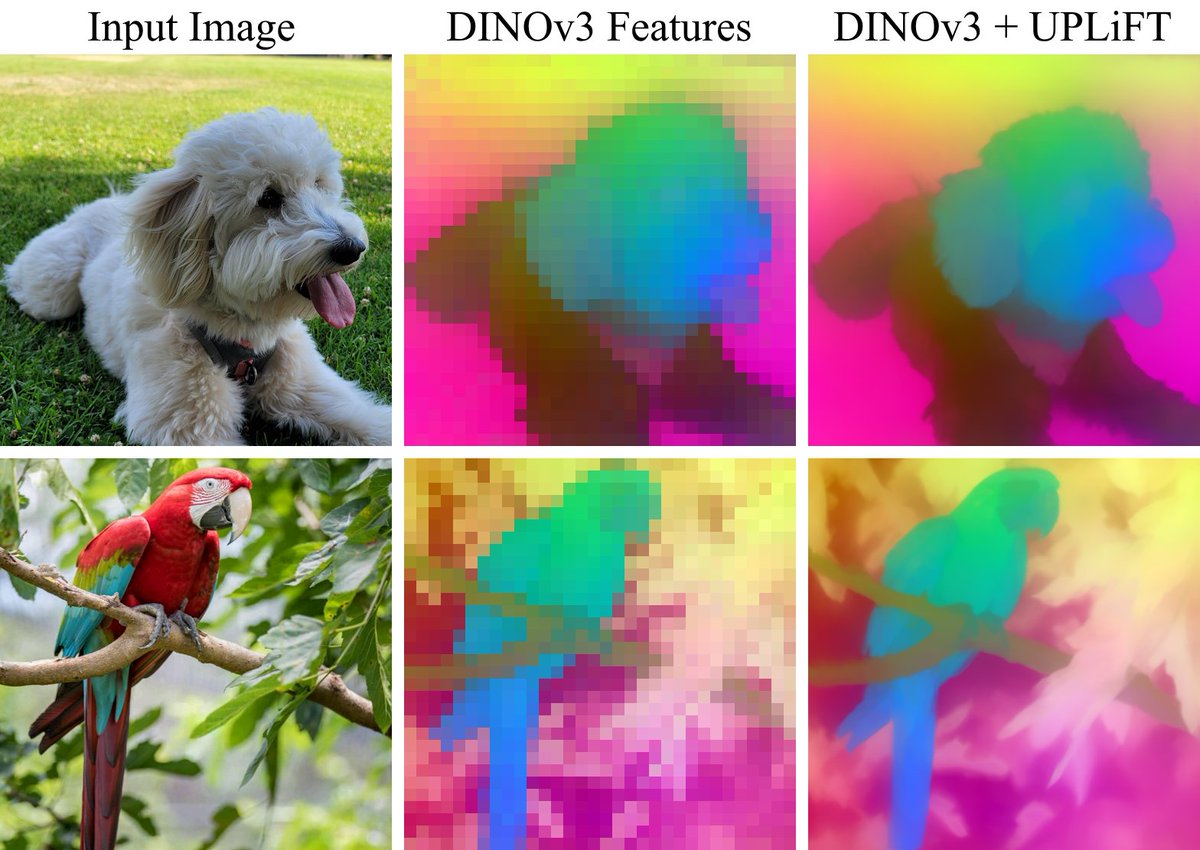

We will be presenting Trokens — our work on point tracking for action recognition.

Details of the paper are below 👇

Pulkit @pulkitkumar95

🎉 Excited to share our paper "Trokens: Semantic-Aware Relational Trajectory Tokens for Few-Shot Action Recognition" has been accepted to #ICCV2025! Equally co-led with @ShuaiyiH — we advance few-shot action recognition via smart point tracking. 🔗 trokens-iccv25.github.io 🧵👇

Pulkit retweeted

Everyone says they want general-purpose robots.

We actually mean it — and we’ll make it weird, creative, and fun along the way 😎

Recruiting PhD students to work on Computer Vision and Robotics @umdcs for Fall 2026 in the beautiful city of Washington DC!

👥 This work was co-led with @ShuaiyiH in collaboration with @MatthewWalmer, @rssaketh , and @abhi2610

🔗 All code and data are released:

🌐 Webpage: trokens-iccv25.github.io

📄 ArXiv: arxiv.org/abs/2508.03695

💻 Code: github.com/pulkitkumar95/…

🤗 Data: huggingface.co/datasets/pulki…

🎉 Excited to share our paper "Trokens: Semantic-Aware Relational Trajectory Tokens for Few-Shot Action Recognition" has been accepted to #ICCV2025!

Equally co-led with @ShuaiyiH — we advance few-shot action recognition via smart point tracking.

🔗 trokens-iccv25.github.io

🧵👇

@ICCVConference In "Camera-Ready Submission Instructions", it says "However, papers that are longer than 8 pages (not including references), will not be processed and will not appear in the conference proceedings or on IEEE Xplore." Does this "8 pages" limit also exclude the acknowledgment section?

If the copyright status and paper status are “received” or “submitted”, ignore the email.

#ICCV2025 @ICCVConference

🌺 Aloha! The camera-ready deadline is coming up! 🗓️ Friday, August 1, 2025 ⏰ 23:59 Pacific Time. Don't wait until the last minute!

Pulkit retweeted

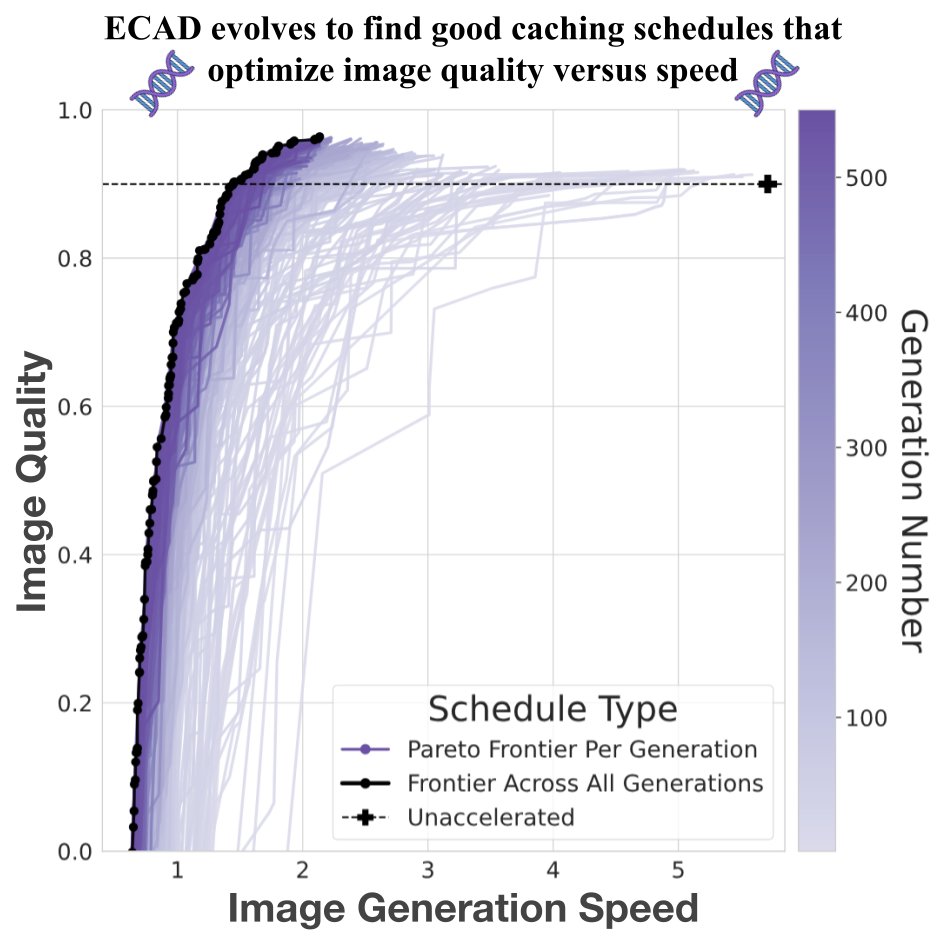

🧵 Your DiT, faster

Introducing ECAD: we reframe diffusion model caching as multi-objective optimization and evolve Pareto-optimal schedules via a genetic algorithm—achieving 4.47 FID gain at 2.58× speedup, with no retraining or tuning.

🔗 aniaggarwal.github.io/ecad

#MachineLearning

Pulkit retweeted

🌟 CoLLM: A Large Language Model for Composed Image Retrieval (CVPR 2025)

✨ A cutting-edge training paradigm using image-caption pairs

📊 High-quality synthetic triplets for training & benchmarking

🔗 Project: collm-cvpr25.github.io

📄 Paper: arxiv.org/abs/2503.19910

#LLM #CIR

Pulkit retweeted

Finally, I found some time over the break to clean the code up and release it:

github.com/pulkitkumar95/… :)

Pulkit @pulkitkumar95

📢 Point tracking 🤝 action recognition at #ECCV2024. We've set the new SoTA for few-shot action recognition by harnessing motion data from point tracking and semantic features from SSL. Curious? Visit Poster #203 Thursday AM to see the future of action recognition 🔥. Details: 🧵
