Tejaswi Kasarla

784 posts

@tkasarla_

PhD student @UvA_Amsterdam @Ellis_Amsterdam | Learning in non-Euclidean spaces | Previously Intern @AIatMeta | Board Member @WiCVWorkshop

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one. Read more: amilabs.xyz AMI - Real world. Real intelligence.

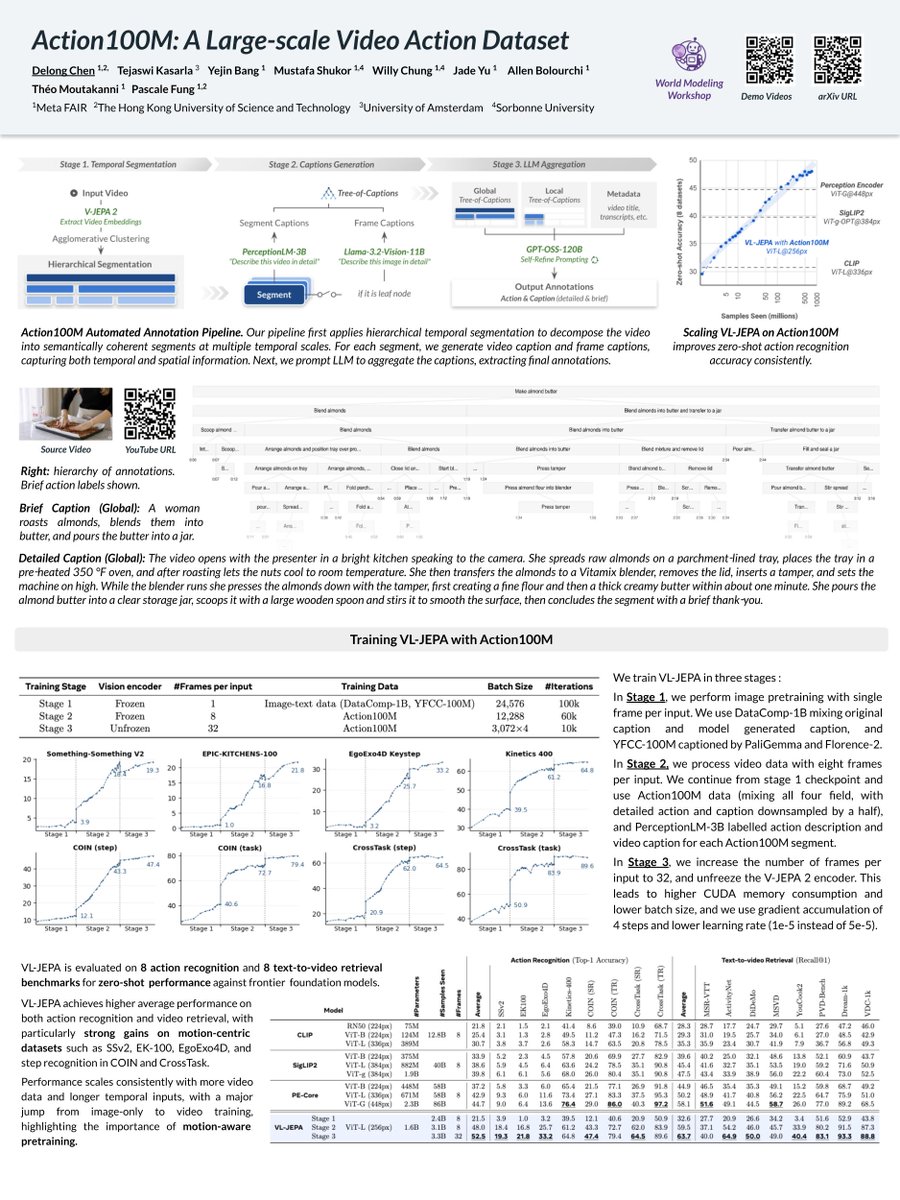

Meta just released Action100M on Hugging Face: a massive video dataset with 100M+ hierarchical action annotations. Every video includes a tree-of-captions with action labels, plus brief and detailed summaries.

(🧵) Today, we release Meta Code World Model (CWM), a 32-billion-parameter dense LLM that enables novel research on improving code generation through agentic reasoning and planning with world models. ai.meta.com/research/publi…