Nathan Axcan

1.2K posts

Nathan Axcan

@AxcanNathan

Entropy-neur | Gradient descent takes a photography of the real world. | Formerly@tudelft, now LLM research@IBM (I do not represent IBM in any way)

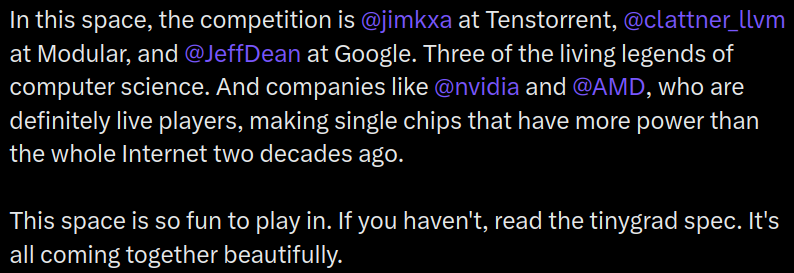

Few know this, but I (George) was the only person in history to get a perfect score in CMU compilers, which is likely the best compilers course in the world. Combine that with crazy low level knowledge of hardware from 10 years of hacking. Then add a team of people who are talented enough to push back on my dumb ideas and clean up the implementations of the good ones. The team who keeps this whole operation running, software, infrastructure, and product. I love how there's no hype in deep learning compilers. It was one of the most annoying things about self driving cars, all the noobs who burned through billions on crap that was obviously dumb, and the companies who deserved to go bankrupt years ago if not for government bailouts (Tesla and China will devour them all). In this space, the competition is @jimkxa at Tenstorrent, @clattner_llvm at Modular, and @JeffDean at Google. Three of the living legends of computer science. And companies like @nvidia and @AMD, who are definitely live players, making single chips that have more power than the whole Internet two decades ago. This space is so fun to play in. If you haven't, read the tinygrad spec. It's all coming together beautifully.

Introducing M²RNN: Non-Linear RNNs with Matrix-Valued States for Scalable Language Modeling We bring back non-linear recurrence to language modeling and show it's been held back by small state sizes, not by non-linearity itself. 📄 Paper: arxiv.org/abs/2603.14360 💻 Code: github.com/open-lm-engine… 🤗 Models: huggingface.co/collections/op…

Frankly disappointed. Three iterations deep and the paper itself concedes pure SSMs can’t do retrieval and hybrids (SSM + attention) are the future.. So Mamba is converging on being a better compression sublayer inside someone else’s architecture — not a replacement. The “inference-first” framing is doing heavy rhetorical lifting over what are solid but incremental control theory refinements (complex transitions, trapezoidal discretization, MIMO) that don’t touch the core constraint: fixed state = lossy history. ~5% decode speedup over Mamba-2 SISO. Kernel engineering is genuinely good. But this isn’t the trajectory you want if the original pitch was obsoleting transformers.

Composer 2 is now available in Cursor.

Remember this thing? After a year+ : - Stable metal backend with easy visuals - runs great on my M1 Max - in Claude Code even GLM 4.7 was able to setup nice experiments Very cool if you don't wanna be locked into Isaac Gym!