Extended Brain

6.6K posts

Extended Brain

@Extended_Brain

A curious mind, always exploring the vast landscapes of knowledge and creativity. Passionate about learning, the future of AI, and philosophy

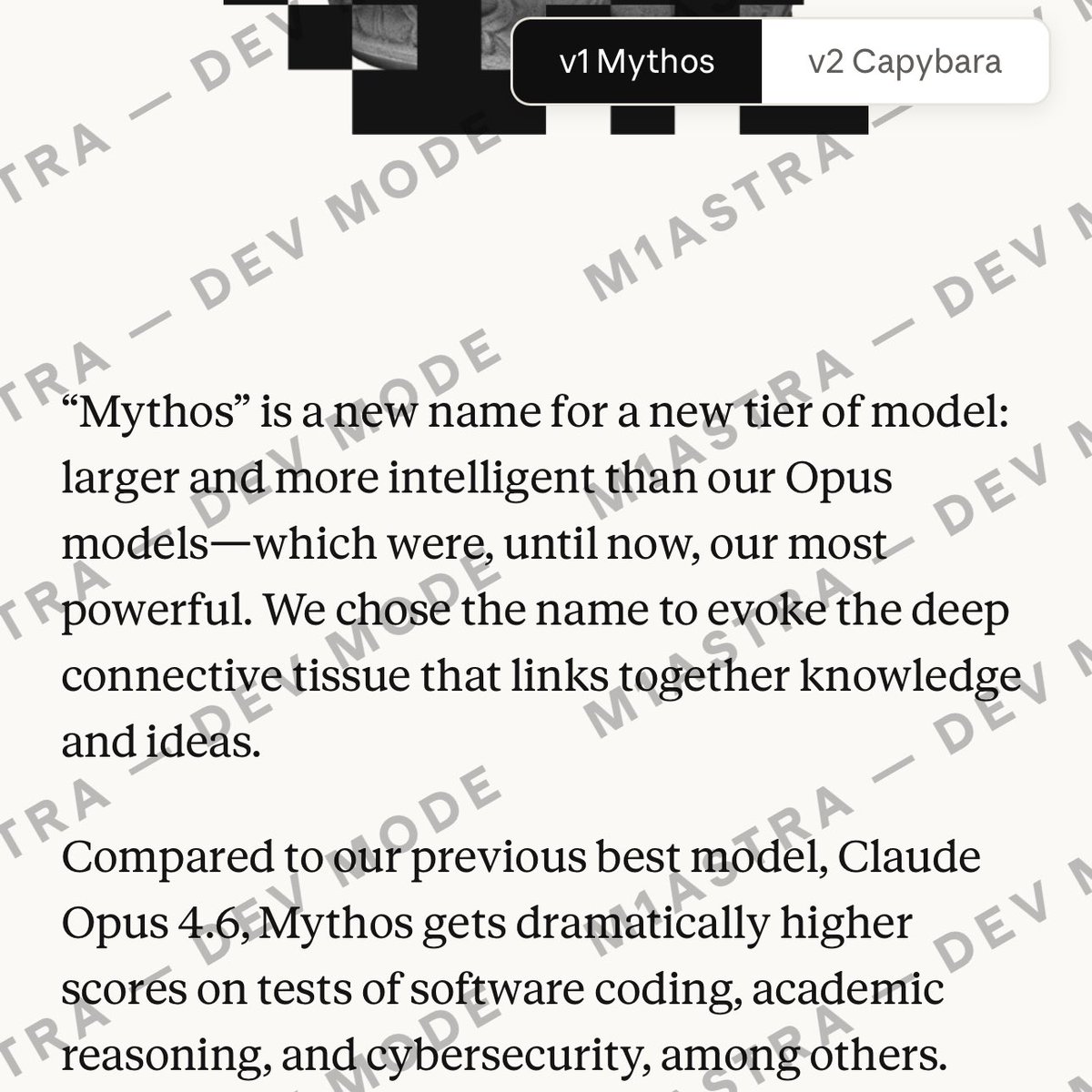

Claude Mythos Blog Post Saved before it was taken down. m1astra-mythos.pages.dev

New Andrej Karpathy interview: "To get the most out of the tools that have become available now, you have to remove yourself as the bottleneck. You cannot be there to prompt the next thing. You need to take yourself outside the loop. You have to arrange things such that they are completely autonomous. The more you can maximize your token throughput and not be in the loop, the better. This is the goal. So, I kind of mentioned that the name of the game now is to increase your leverage. I put in very few tokens just once in a while, and a huge amount of stuff happens on my behalf." --- From @NoPriorsPod YT channel (link in comment)

Introducing the Anthropic Science Blog. Increasing the pace of scientific progress is a core part of Anthropic’s mission. The Science Blog will feature new research and stories of how scientists are using AI to accelerate their work. Read the intro: anthropic.com/research/intro…

@sbaratelli @nvidia @openclaw most folks will want as much intelligence as possible, and open models aren't there yet.

@sbaratelli @nvidia @openclaw most folks will want as much intelligence as possible, and open models aren't there yet.

🚨Architects are going to hate this. Someone just open sourced a full 3D building editor that runs entirely in your browser. No AutoCAD. No Revit. No $5,000/year licenses. It's called Pascal Editor. Built with React Three Fiber and WebGPU -- meaning it renders directly on your GPU at near-native speed. Here's what's inside this thing: → A full building/level/wall/zone hierarchy you can edit in real time → An ECS-style architecture where every object updates through GPU-powered systems → Zustand state management with full undo/redo built in → Next.js frontend so it deploys as a web app, not a desktop install → Dirty node tracking -- only re-renders what changed, not the whole scene Here's the wildest part: You can stack, explode, or solo individual building levels. Select a zone, drag a wall, reshape a slab -- all in 3D, all in the browser. Architecture firms pay $50K+ per seat for BIM software that does this workflow. This is free. 100% Open Source.

BREAKING: Meta is shutting down the Metaverse after spending $80 Billion on the project

Meet the new Stitch, your vibe design partner. Here are 5 major upgrades to help you create, iterate and collaborate: 🎨 AI-Native Canvas 🧠 Smarter Design Agent 🎙️ Voice ⚡️ Instant Prototypes 📐 Design Systems and DESIGN.md Rolling out now. Details and product walkthrough video in 🧵