
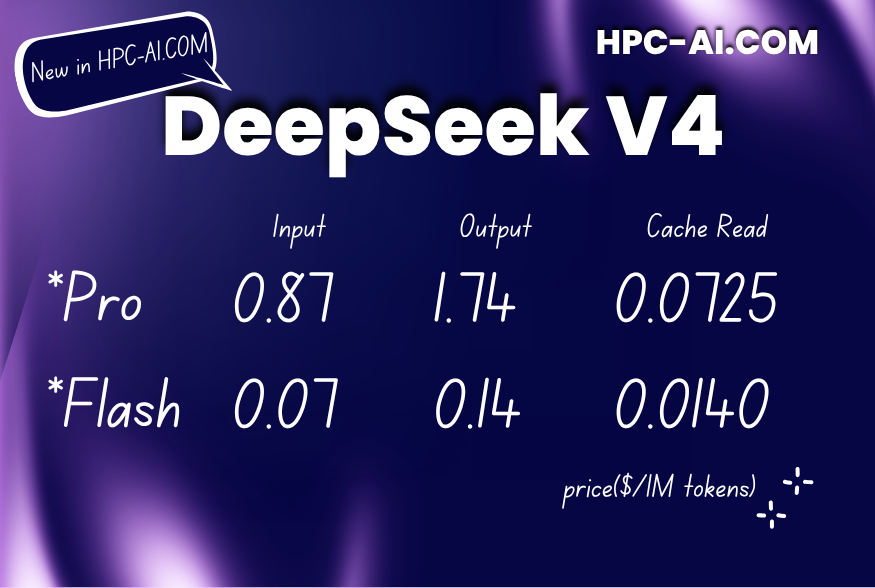

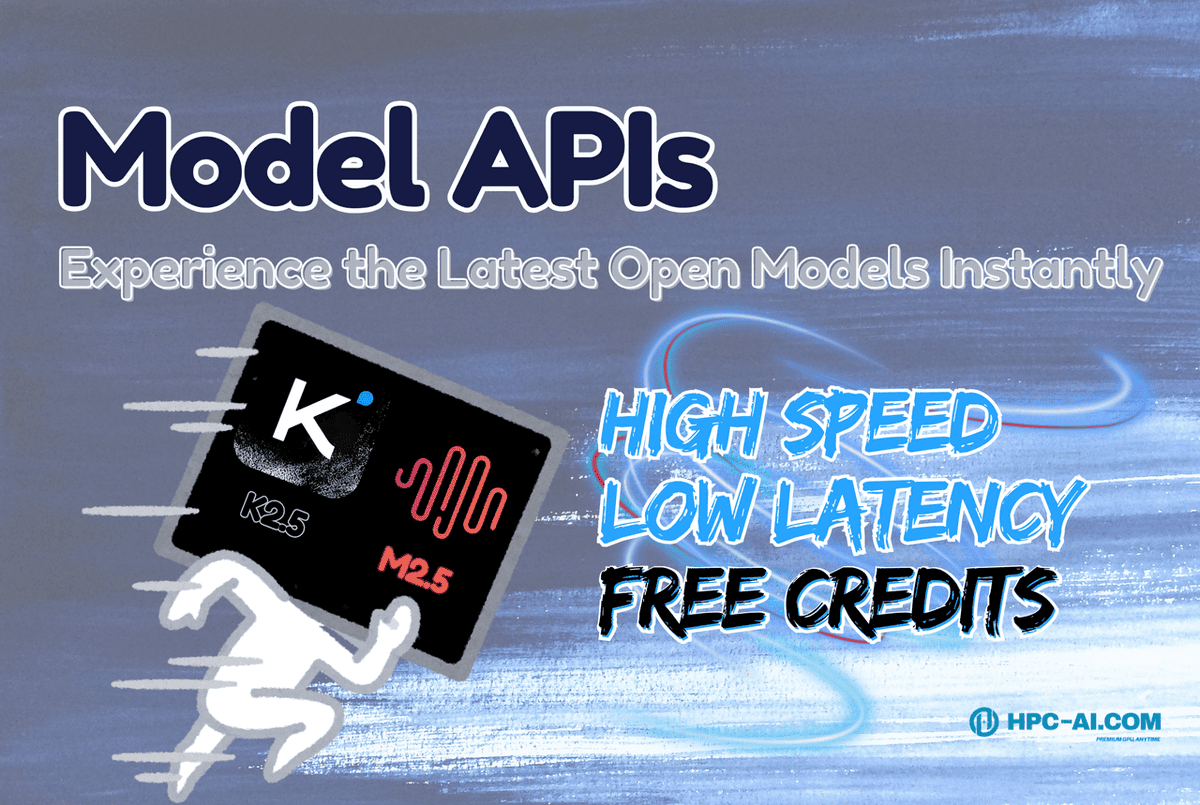

Our Model APIs are live!

Access frontier open-source models instantly:

⚡ No deployment

⚡ Low latency

⚡ OpenAI-compatible

⚡ Supports 256K context, AI agents, coding workflows

🎁 Free credits: $2 for all users | New users with invite code HPCAI-MAPI → $4 total credits

Build faster → hpc-ai.com/account/signup…
