Meris Dabhi

429 posts

@Merisdabhi

Building production AI agents | Guardrails, reliability & real-world plumbing | Sharing what actually ships

JUST IN: Meta AI has officially overtaken ChatGPT in the Apple App Store rankings.

MiniMax Music 2.6 is live. A few things worth knowing:

🎬 Original BGM in minutes. No more hunting for "probably fine to use" tracks. Describe your scene, get something fully yours.

🎭 Structure that actually follows your prompt. You can now write "open with tension, build toward awakening, explode into triumph", and the model follows, beat by beat. For the first time, AI music generation feels less like rolling the dice and more like directing.

🎤 Intentional imperfection. In lo-fi, indie folk, jazz: the breathiness that makes a track feel human, not generated.

Also shipping with 2.6:
→ First audio in under 20s: write a prompt, take a breath, it's ready
→ Improved low-mid frequency response: tighter bass for House, Trap, Drum & Bass
→ Style transfer & remixing: reimagine your own melody in a completely different genre

14-day free global beta starts today (500 songs/day).

👉 Try now: minimax.io/audio/music

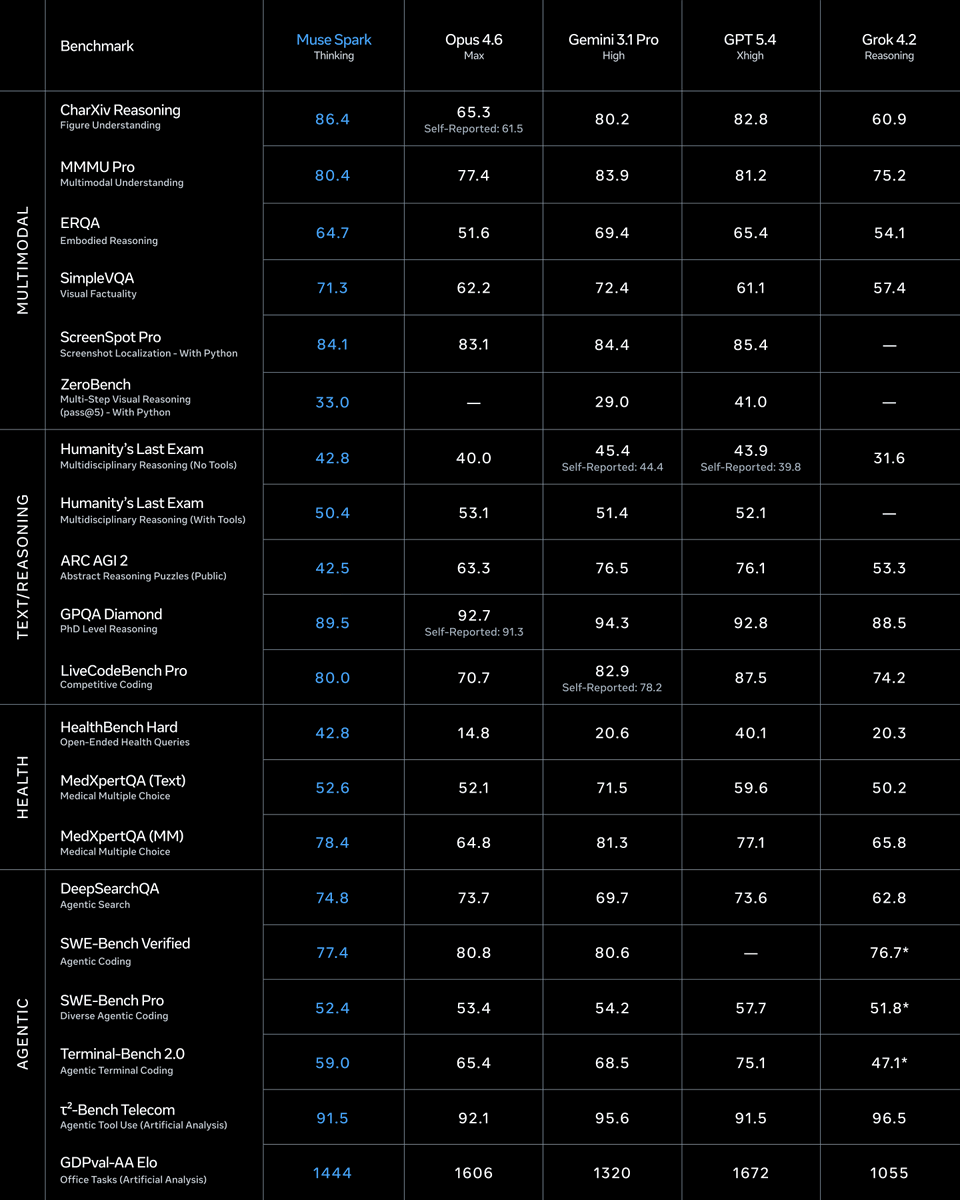

Introducing Muse Spark, the first in the Muse family of models developed by Meta Superintelligence Labs. Muse Spark is a natively multimodal reasoning model with support for tool-use, visual chain of thought, and multi-agent orchestration. Muse Spark is available today at meta.ai and the Meta AI app. We’re also making it available in private preview via API to select partners, and we hope to open-source future versions of the model. Learn more: go.meta.me/43ea00

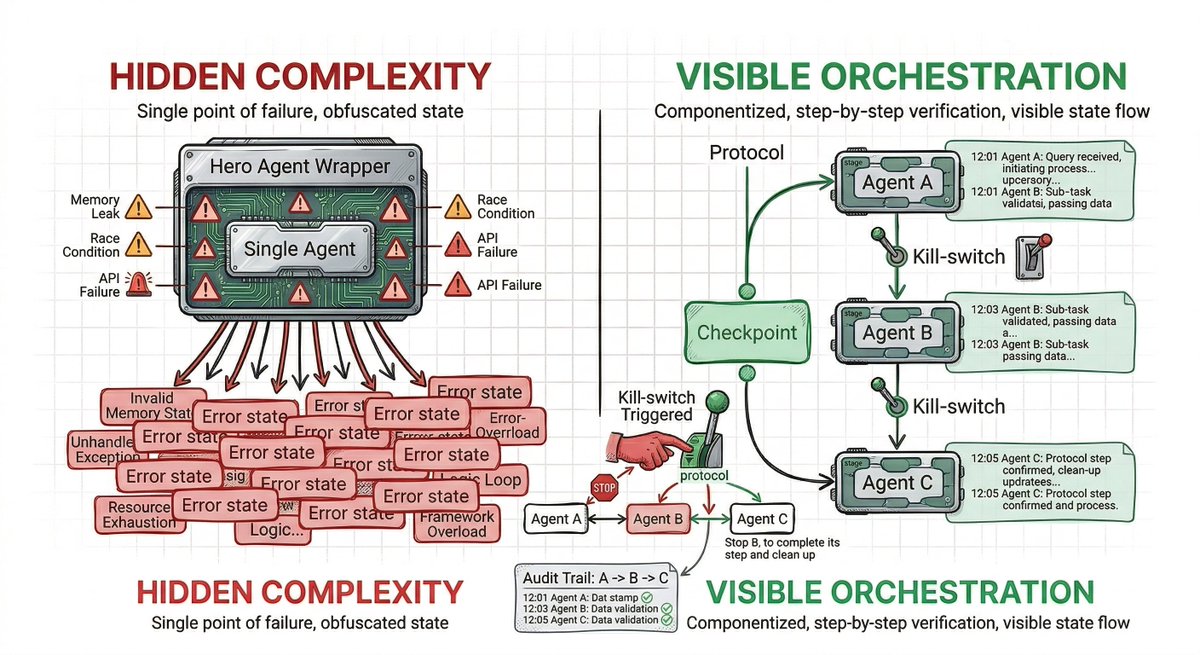

Days instead of months. That's the real shift here.

Before: Agents work great in isolation. Getting them to production meant wrestling with infrastructure, scaling, monitoring, and memory management. A team would spend weeks or months on DevOps just to deploy one agent.

Now: Define your agent, hit deploy, and it scales and updates automatically.

The harness is tuned for agent workloads specifically, not general compute. That detail matters. Agents have different patterns than typical applications. They spawn subtasks, maintain state, consume in bursts. When infrastructure understands your agent architecture, you move fast.

Introducing Claude Managed Agents: everything you need to build and deploy agents at scale. It pairs an agent harness tuned for performance with production infrastructure, so you can go from prototype to launch in days. Now in public beta on the Claude Platform.

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing

We’ve made major upgrades to X API:
• Pay-Per-Use now GA worldwide
• XMCP Server + xurl for agents
• Official Python & TypeScript XDKs
• API Playground - free realistic simulations

New releases coming will be a game changer. Start building → docs.x.com 🚢