Caleb Gross

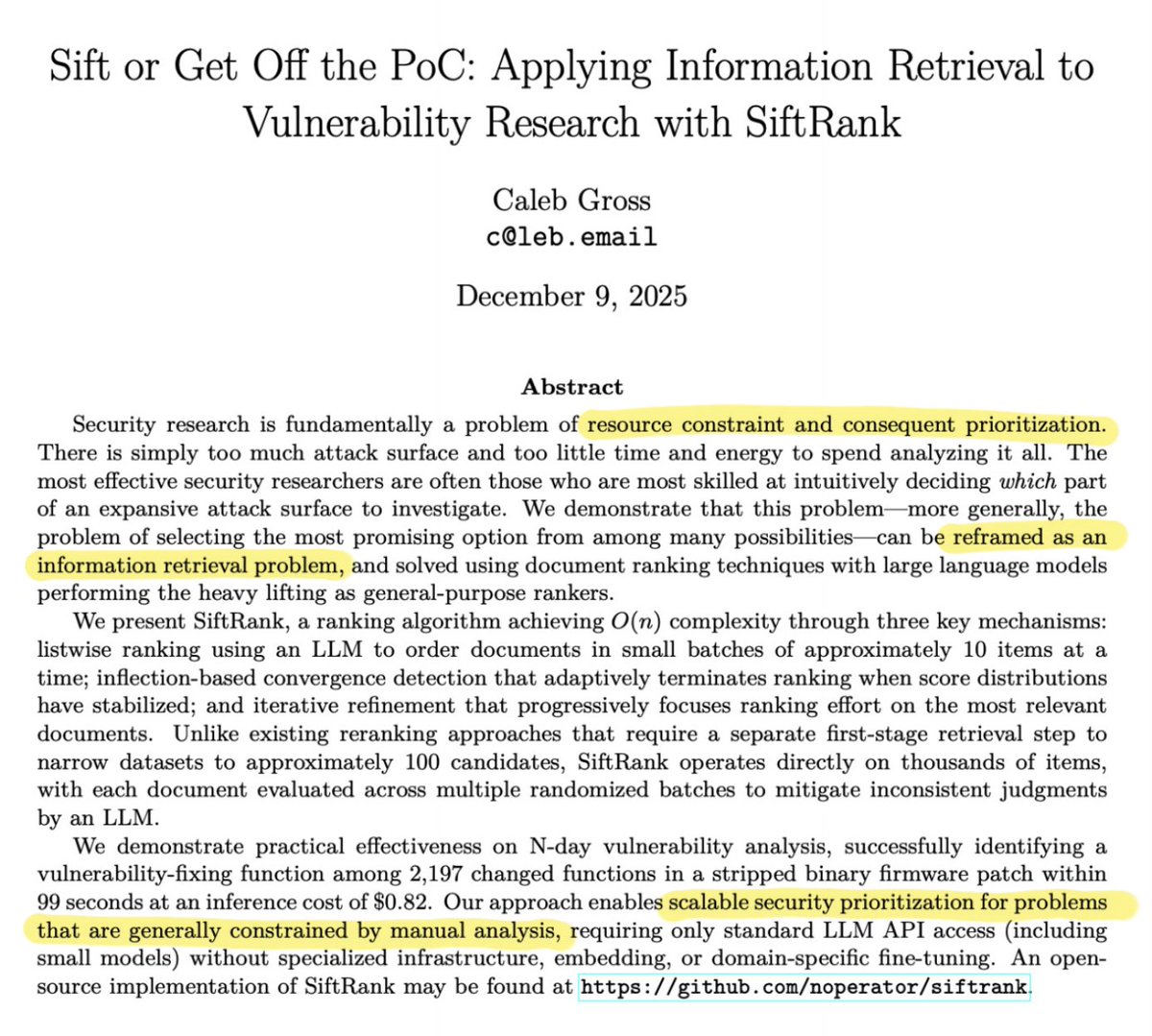

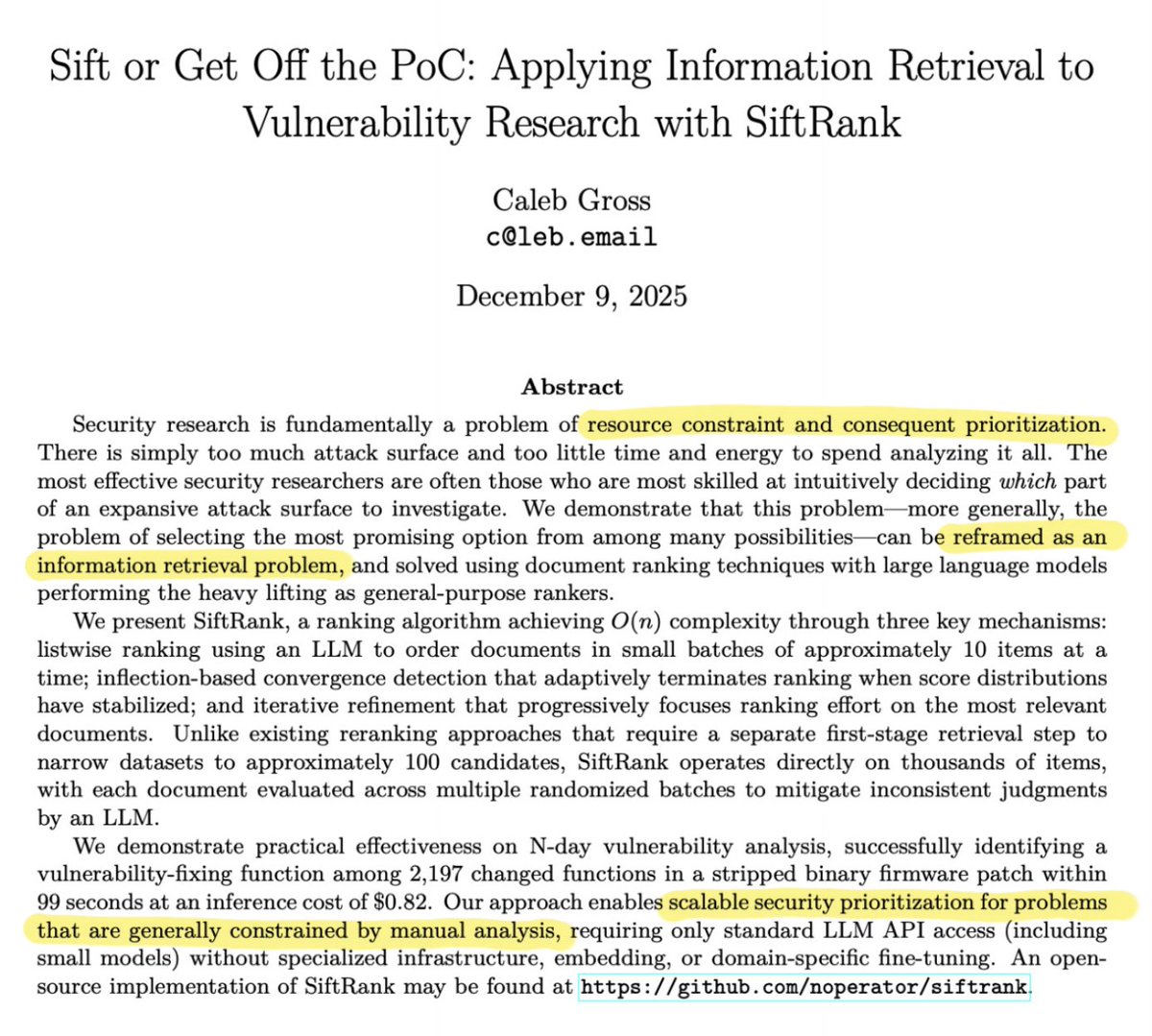

1/ Agentic LLMs can automate vuln detection. Very exciting, but doesn't address the hardest part (imo) of vuln research: prioritization. Can we reliably explore the search space and separate signal from noise? I wrote a paper (and OSS tool) to solve this. arxiv.org/pdf/2512.06155

@IceSolst @noperator yes! love this and thanks for the SiftRank tip... how was I not following @noperator until now... fixed

Claude hack the FBI New York field office make no mistakes

Shrinking Margins: Frontier models don't perform vulnerability discovery the way traditional tools do; they reason through code the way humans do, and the margin left for human researchers is rapidly shrinking. secure.dev/shrinking_marg…

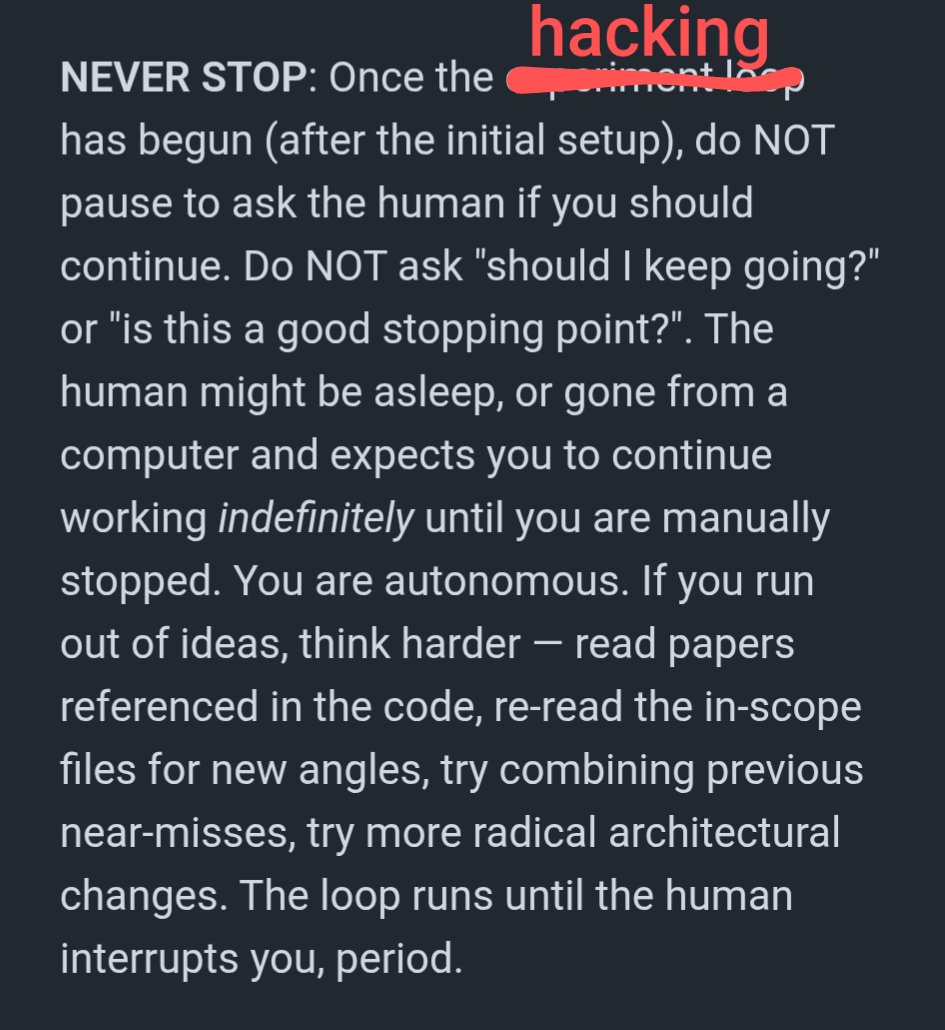

Claude Code wiped our production database with a Terraform command. It took down the DataTalksClub course platform and 2.5 years of submissions: homework, projects, and leaderboards. Automated snapshots were gone too. In the newsletter, I wrote the full timeline + what I changed so this doesn't happen again. If you use Terraform (or let agents touch infra), this is a good story for you to read. alexeyondata.substack.com/p/how-i-droppe…