attentional

@perasperagi

learning machines and making machines learn prev @ microsoft

Launching today: make any PDF beautiful. It's 2026 - there's no excuse to have ugly resumes, invoices or client proposals. Just upload a PDF -> Get back a polished, professionally designed version in minutes. Works with docs of any complexity👇

In the age of agents, your wage will be priced by GPUs. See more: arxiv.org/pdf/2605.05558

Excited to announce the launch of my company, Chronicle Labs (YC P26): the staging environment for enterprise AI agents. Just like trading teams backtest algorithms before deploying capital, companies should be able to backtest agents before deploying them into real workflows. We turn operational history into replayable sandboxes, so teams can test, debug, and safely ship better agents.

We were a little slow on this, but we just got a technical blog post up with more details. Please take a look! subq.ai/how-ssa-makes-… We have a model card coming next week, and we are happy to take requests for any specific details there. I am happy to answer any questions here!

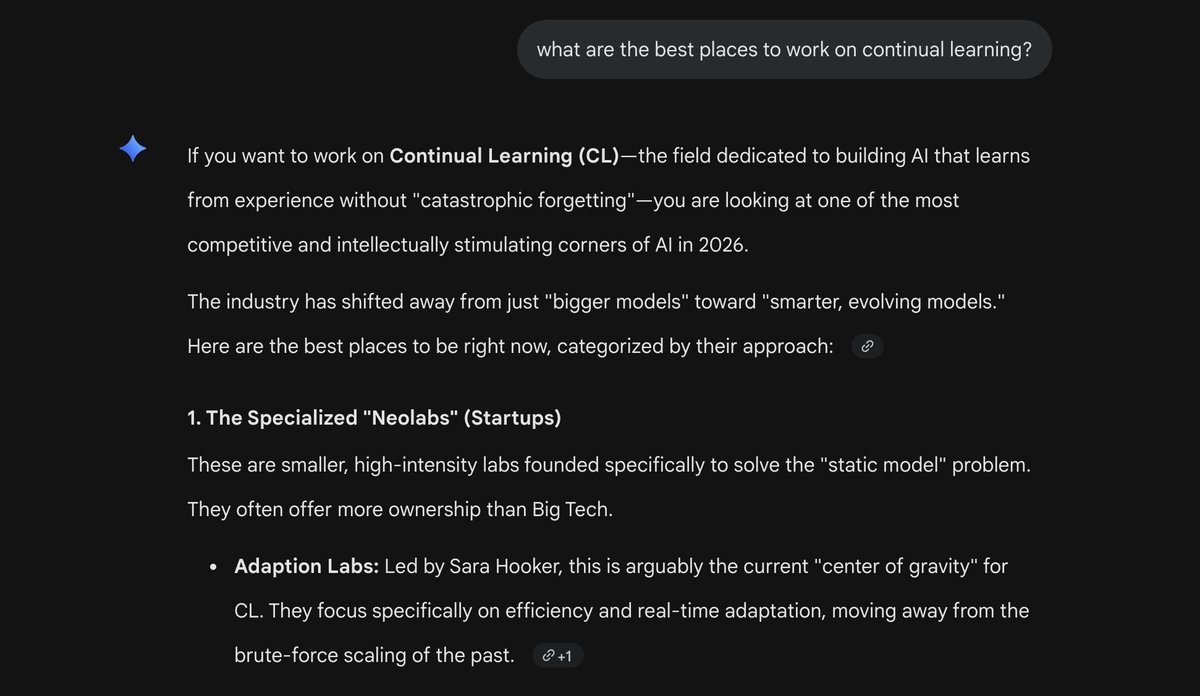

Introducing SubQ - a major breakthrough in LLM intelligence. It is the first model built on a fully sub-quadratic sparse-attention architecture (SSA), and the first frontier model with a 12 million token context window, which is:

- 52x faster than FlashAttention at 1MM tokens
- Less than 5% the cost of Opus

Transformer-based LLMs waste compute by processing every possible relationship between words (standard attention). Only a small fraction actually matter. @subquadratic finds and focuses only on the ones that do. That's nearly 1,000x less compute and a new way for LLMs to scale.