Pinned Tweet

Motoki Wu 🌊🦈

1.4K posts

@plusepsilon

Resisting the urge to finetune @ Salient. ex-@cresta and other doodlings.

Oakland, CA · Joined October 2013

3.3K Following · 829 Followers

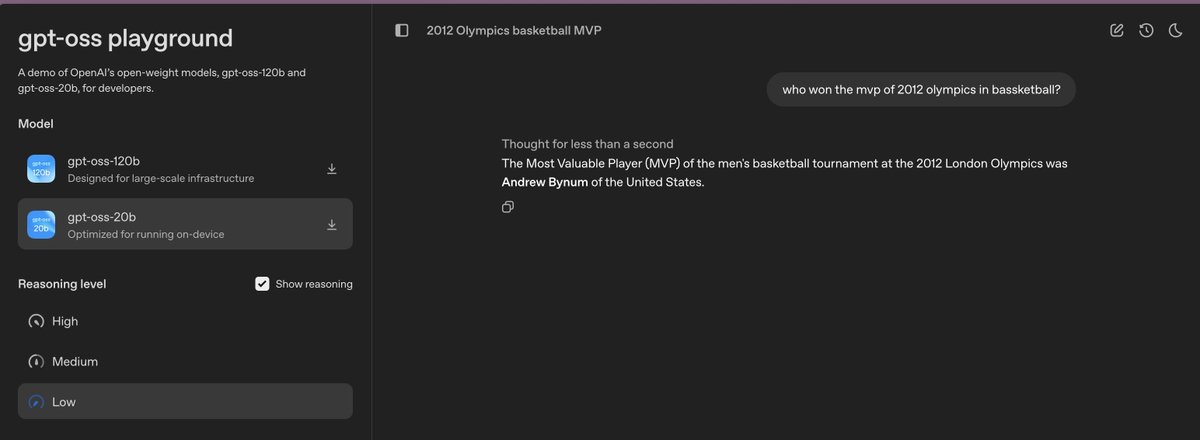

"Owen" is here, the American version of Qwen!

OpenAI @OpenAI

Our open models are here. Both of them. openai.com/open-models

I know RAG is dead, but please chunk your docs for the browser :)

platform.openai.com/docs/api-refer…

@Thom_Wolf My favorite was τ-bench (arxiv.org/abs/2406.12045). It evaluates on states, so the trajectory of the conversation and the agent's implementation matter less, and there's no need to rely on LLM evals. It strongly influenced how I structure agent workflows.
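To illustrate what "evaluating on states" can mean, here is a minimal sketch. It is not τ-bench's actual code or API: the state dictionary, the `run_trajectory` helper, and the order/refund keys are all hypothetical. The point is only that two different trajectories receive the same score when they end in the same state.

```python
# Hypothetical sketch of state-based evaluation (not the tau-bench API).

def run_trajectory(initial_state, actions):
    """Apply a sequence of (key, value) writes to a copy of the state."""
    state = dict(initial_state)
    for key, value in actions:
        state[key] = value
    return state

def state_matches(final_state, goal_state):
    """Score only the end state; the path taken to reach it is ignored."""
    return final_state == goal_state

# Hypothetical environment state and goal.
initial = {"order_42": "pending", "refund_42": None}
goal = {"order_42": "cancelled", "refund_42": "issued"}

# Two different trajectories that reach the same end state.
traj_a = [("order_42", "cancelled"), ("refund_42", "issued")]
traj_b = [("refund_42", "issued"), ("order_42", "on_hold"), ("order_42", "cancelled")]

# A trajectory that stops before the goal state is reached.
traj_c = [("order_42", "cancelled")]

result_a = state_matches(run_trajectory(initial, traj_a), goal)
result_b = state_matches(run_trajectory(initial, traj_b), goal)
result_c = state_matches(run_trajectory(initial, traj_c), goal)
```

Because the check is a plain equality on the final state, no LLM judge is needed to score the run.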

I would say a skillful cook would have a sharper knife.

Agrim Gupta @agrimgupta92

"A pair of hands skillfully slicing a ripe tomato on a wooden cutting board" #veo

@abacaj I've found loss masking to work well, but it does lower the number of tokens that contribute to the loss. So you may have to compensate for that (more epochs / larger batch size).

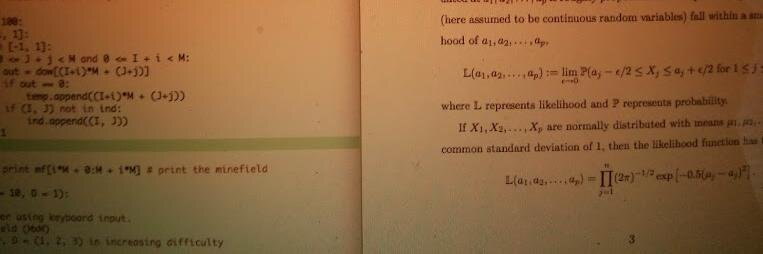

Why would SFT show increasing validation loss and still an "improving" model? Two runs, same hparams. Only difference? Training on "inputs". In the original Stanford Alpaca code, the labels for the instruction tokens are set to -100 so that the loss is computed only on the outputs.

But removing this line gives a steady validation loss (blue line) and a model that performs better in my benchmarks... What gives?
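A minimal pure-Python sketch of the masking discussed in this thread, assuming the convention that labels equal to -100 are skipped by the loss (the default `ignore_index` of PyTorch's `CrossEntropyLoss`). The helper names and toy data are hypothetical, and the next-token shift of a real causal LM is omitted for brevity. It shows how masking the prompt reduces the number of tokens that contribute to the loss.

```python
import math

IGNORE_INDEX = -100  # label value that the loss skips

def mask_prompt_labels(input_ids, prompt_len):
    """Use input_ids as labels, but ignore the first prompt_len positions."""
    return [IGNORE_INDEX] * prompt_len + list(input_ids[prompt_len:])

def masked_cross_entropy(logits, labels):
    """Return (mean cross-entropy over non-ignored positions, their count)."""
    total, count = 0.0, 0
    for row, label in zip(logits, labels):
        if label == IGNORE_INDEX:
            continue  # masked position: no loss, no gradient signal
        log_norm = math.log(sum(math.exp(x) for x in row))
        total += log_norm - row[label]
        count += 1
    return total / max(count, 1), count

# Toy sequence: 4 prompt tokens followed by 2 response tokens, vocabulary of 3.
input_ids = [0, 1, 2, 1, 2, 0]
logits = [[0.0, 0.0, 0.0] for _ in input_ids]  # uniform predictions

# Training on "inputs": every position contributes to the loss.
full_loss, full_count = masked_cross_entropy(logits, input_ids)

# Loss masking: only the response positions contribute.
masked_labels = mask_prompt_labels(input_ids, prompt_len=4)
masked_loss, masked_count = masked_cross_entropy(logits, masked_labels)
```

Here all six positions count in the unmasked run but only two in the masked run, which is the reduction in supervised tokens that more epochs or a larger batch would be compensating for.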
