VSTDO

494 posts

@vicodoth

https://t.co/3gEPUoWwQZ https://t.co/da4HTaWVKF

“Your size is not size” > 1st, 2nd, 3rd & 4th addy definitely not urs, as they are using a ref code and u are not. So it means ur current PNL could be $18 or $0.2, which one ser?

Today, we closed our latest funding round with $122 billion in committed capital at an $852B post-money valuation. The fastest way to expand AI’s benefits is to put useful intelligence in people’s hands early and let access compound globally. This funding gives us resources to lead at scale. openai.com/index/accelera…

More RPGs need to add the feature that lets you automatically defeat enemies if you are much higher level than them. It makes exploring so much more fun.

I found a banger d’app. No points (yet at least). Free, no fees and it’s not a perp dex (finally!). More details tomorrow

Three days ago I left autoresearch tuning nanochat for ~2 days on a depth=12 model. It found ~20 changes that improved the validation loss. I tested these changes yesterday and all of them were additive and transferred to larger (depth=24) models. Stacking up all of these changes, today I measured that the leaderboard's "Time to GPT-2" drops from 2.02 hours to 1.80 hours (~11% improvement); this will be the new leaderboard entry. So yes, these are real improvements and they make an actual difference. I am mildly surprised that my very first naive attempt already worked this well on top of what I thought was already a fairly well-tuned project.

This is a first for me because I am very used to doing the iterative optimization of neural network training manually. You come up with ideas, you implement them, you check if they work (better validation loss), you come up with new ideas based on that, you read some papers for inspiration, etc. This has been the bread and butter of what I do daily for 2 decades. Seeing the agent do this entire workflow end-to-end and all by itself as it worked through approx. 700 changes autonomously is wild. It really looked at the sequence of results of experiments and used that to plan the next ones. It's not novel, ground-breaking "research" (yet), but all the adjustments are "real", I didn't find them manually previously, and they stack up and actually improved nanochat. Among the bigger things, e.g.:

- It noticed an oversight that my parameterless QKnorm didn't have a scalar multiplier attached, so my attention was too diffuse. The agent found multipliers to sharpen it, pointing to future work.
- It found that the Value Embeddings really like regularization and I wasn't applying any (oops).
- It found that my banded attention was too conservative (I forgot to tune it).
- It found that AdamW betas were all messed up.
- It tuned the weight decay schedule.
- It tuned the network initialization.

This is on top of all the tuning I've already done over a good amount of time. The exact commit is here, from this "round 1" of autoresearch. I am going to kick off "round 2", and in parallel I am looking at how multiple agents can collaborate to unlock parallelism. github.com/karpathy/nanoc…

All LLM frontier labs will do this. It's the final boss battle. It's a lot more complex at scale of course - you don't just have a single train.py file to tune. But doing it is "just engineering" and it's going to work. You spin up a swarm of agents, you have them collaborate to tune smaller models, you promote the most promising ideas to increasingly larger scales, and humans (optionally) contribute on the edges. And more generally, *any* metric you care about that is reasonably efficient to evaluate (or that has more efficient proxy metrics such as training a smaller network) can be autoresearched by an agent swarm. It's worth thinking about whether your problem falls into this bucket too.
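
The loop described in the post above (propose a change, measure validation loss, keep the change only if it helps, repeat) can be sketched roughly as follows. This is a toy illustration under loud assumptions, not the actual autoresearch setup: `evaluate`, `propose`, and `autoresearch` are hypothetical names, the "validation loss" is a made-up quadratic rather than a real training run, and random perturbation stands in for the agent, which in the post actually reads prior results and plans the next experiment.

```python
import random


def evaluate(config):
    """Hypothetical stand-in for a training run that returns a validation loss.

    Toy quadratic bowl, chosen only so the example runs instantly.
    """
    return (config["lr"] - 0.3) ** 2 + (config["weight_decay"] - 0.1) ** 2


def propose(config, rng):
    """Hypothetical 'idea generator': nudge one randomly chosen setting.

    A real agent would pick changes based on the history of results.
    """
    key = rng.choice(sorted(config))
    candidate = dict(config)
    candidate[key] = candidate[key] + rng.uniform(-0.05, 0.05)
    return candidate


def autoresearch(config, steps, seed=0):
    """Greedy loop: keep a candidate only if it lowers the loss."""
    rng = random.Random(seed)
    best, best_loss = dict(config), evaluate(config)
    accepted = 0
    for _ in range(steps):
        candidate = propose(best, rng)
        loss = evaluate(candidate)
        if loss < best_loss:  # the change helped, so stack it
            best, best_loss = candidate, loss
            accepted += 1
    return best, best_loss, accepted


start = {"lr": 1.0, "weight_decay": 0.5}
best, best_loss, accepted = autoresearch(start, steps=700)
print(f"accepted {accepted} of 700 proposals, loss {evaluate(start):.4f} -> {best_loss:.6f}")
```

The only thing the sketch tries to capture is the shape of the workflow: a cheap-to-evaluate metric, many small proposals, and accepted changes stacking on top of each other. Promoting ideas from small to larger models, as the post describes, would add a second evaluation stage that is not modeled here.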

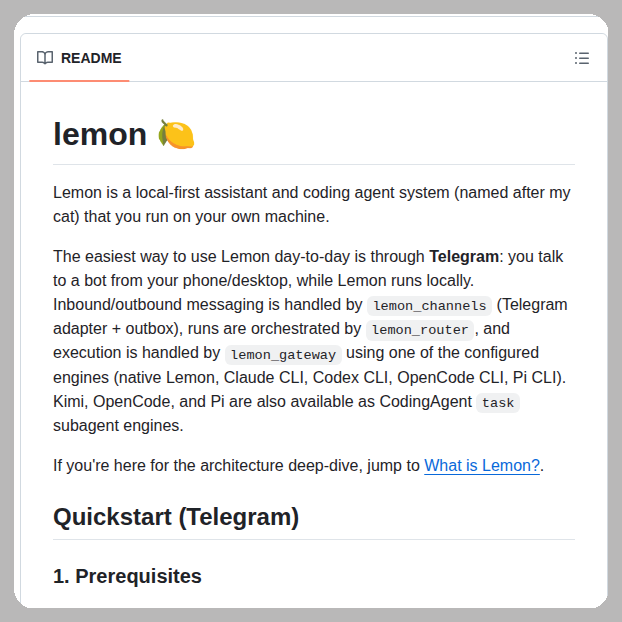

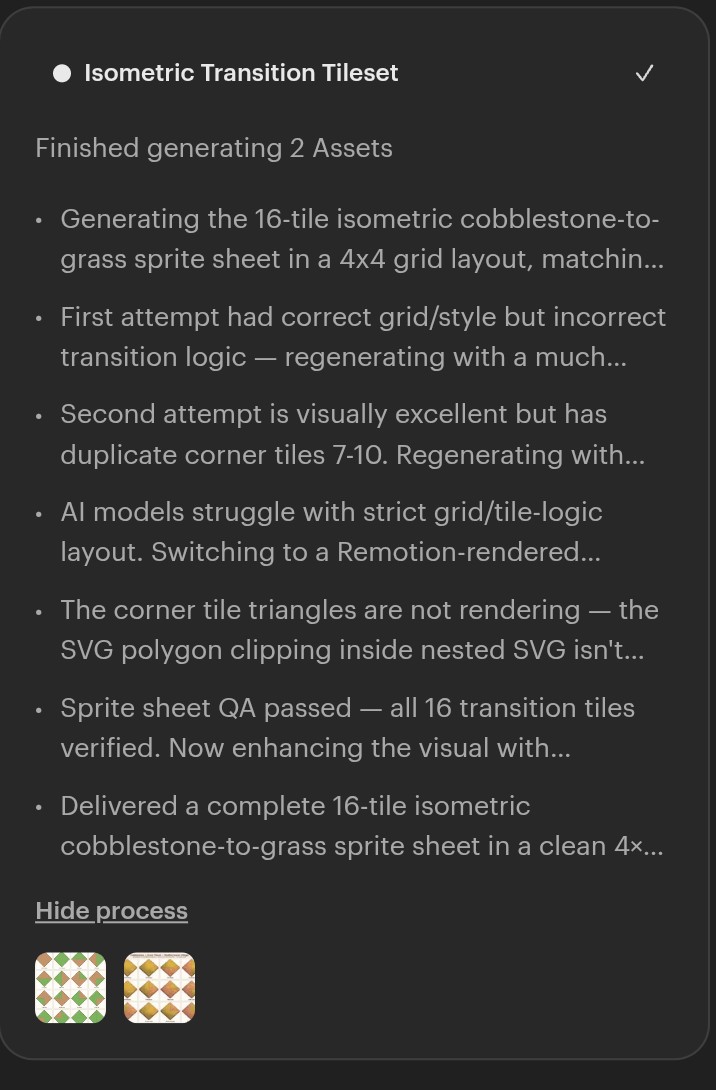

tldr: jean.build - check it out, it's cool.

not tldr: I was so skeptical like 8-10 months ago about AI and AI-based workflows and now I am using it 24/7 - lol. I quickly realized that this requires a totally new kind of app to be able to use it efficiently. So I found Conductor - totally awesome, and cool what they are doing with it, check it out! Chef's kiss. However, I needed some features for my open-source work (there was also one more thing that I will do a longer post about soon). So I made my own app, and I think there are others out there who will find it useful. It is called Jean, like Jean-Claude (you get it?!): jean.build

It is opinionated. It is free and open-source, backed by our philosophy (see image). 💜

Current features:

- load issues, PRs, and other sessions' compacts, even from different projects, as context to any session.
- quickly check out PRs, issues, branches.
- you can work on the base branch or use git worktrees if you want; both can have several parallel sessions.
- predefined and customizable magic prompts to easily review PRs, investigate issues, investigate CI build fails, generate release notes, generate commits, generate PR descriptions, etc.
- almost everything can be done with the keyboard only, but with a nice GUI.
- most importantly, I can see everything on the interface, so I don't need to open several tabs / apps to do my work.
- a lot more.

It runs on your computer, where, as a developer, you have your natural habitat (😅) and your already configured dev env. But you can enable web access so you can use it from your phone/tablet/fridge through Tailscale, CF tunnels, or locally in your home network. So you can add your thoughts after a fresh shower (you know what I mean, the best thoughts pop up after that) from anywhere, so LLMs can start planning it out immediately.

Currently, it supports @claudeai CLI, but @opencode and Codex support is coming soon. It reuses your subscriptions, so you don't need to pay anything else.

I have been using it for weeks as my daily driver on my Mac, but it should work on Linux and Windows soon (testers needed, btw 🙏 github.com/coollabsio/jean). Ofc, it has bugs 🐛, but I will escort them out through the window. Eventually. Ok, this is too long, get back to work!