Adithya Bhaskar

@AdithyaNLP

Third-year CS PhD candidate at Princeton University (@princeton_nlp @PrincetonPLI), previously CS undergrad at IIT Bombay

Introducing Tinker: a flexible API for fine-tuning language models. Write training loops in Python on your laptop; we'll run them on distributed GPUs. Private beta starts today. We can't wait to see what researchers and developers build with cutting-edge open models! thinkingmachines.ai/tinker

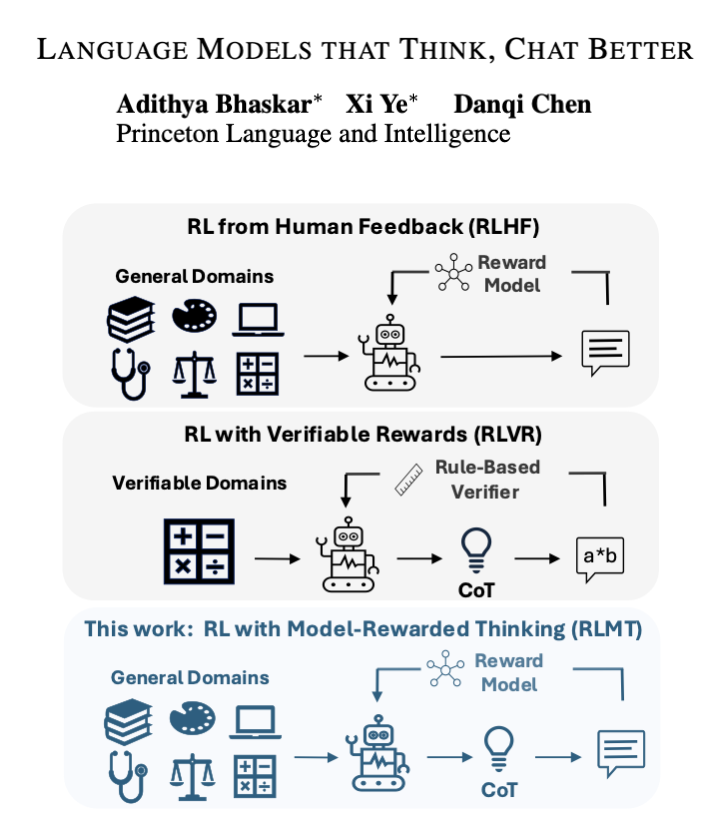

Language models that think, chat better. We used longCoT (w/ reward model) for RLHF instead of math, and it just works. Llama-3.1-8B-Instruct + 14K ex beats GPT-4o (!) on chat & creative writing, & even Claude-3.7-Sonnet (thinking) on AlpacaEval2 and WildBench! Read on. 🧵 1/8

7. Language Models that Think, Chat Better: A simple recipe, RL with Model-rewarded Thinking, makes small open models “plan first, answer second” on regular chat prompts and trains them with online RL against a preference reward. x.com/omarsar0/statu…

Ever wonder why some AI chats feel robotic while others nail it? This new paper introduces a game-changer: Language Models that Think, Chat Better. They train AIs to "think" step-by-step before replying, crushing benchmarks. Mind blown? Let's dive in 👇