Pinned Tweet

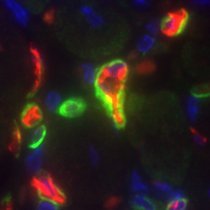

Have you recently defended your PhD (or are you about to), and are you interested in gene regulatory networks (GRNs), cell fate decisions, and the evolution of skeletal form? Let's apply together for the Wallenberg DDLS Postdoc Call 2026 (deadline: March 31). 1/n

scilifelab.se/data-driven/dd…
