ทวีตที่ปักหมุด

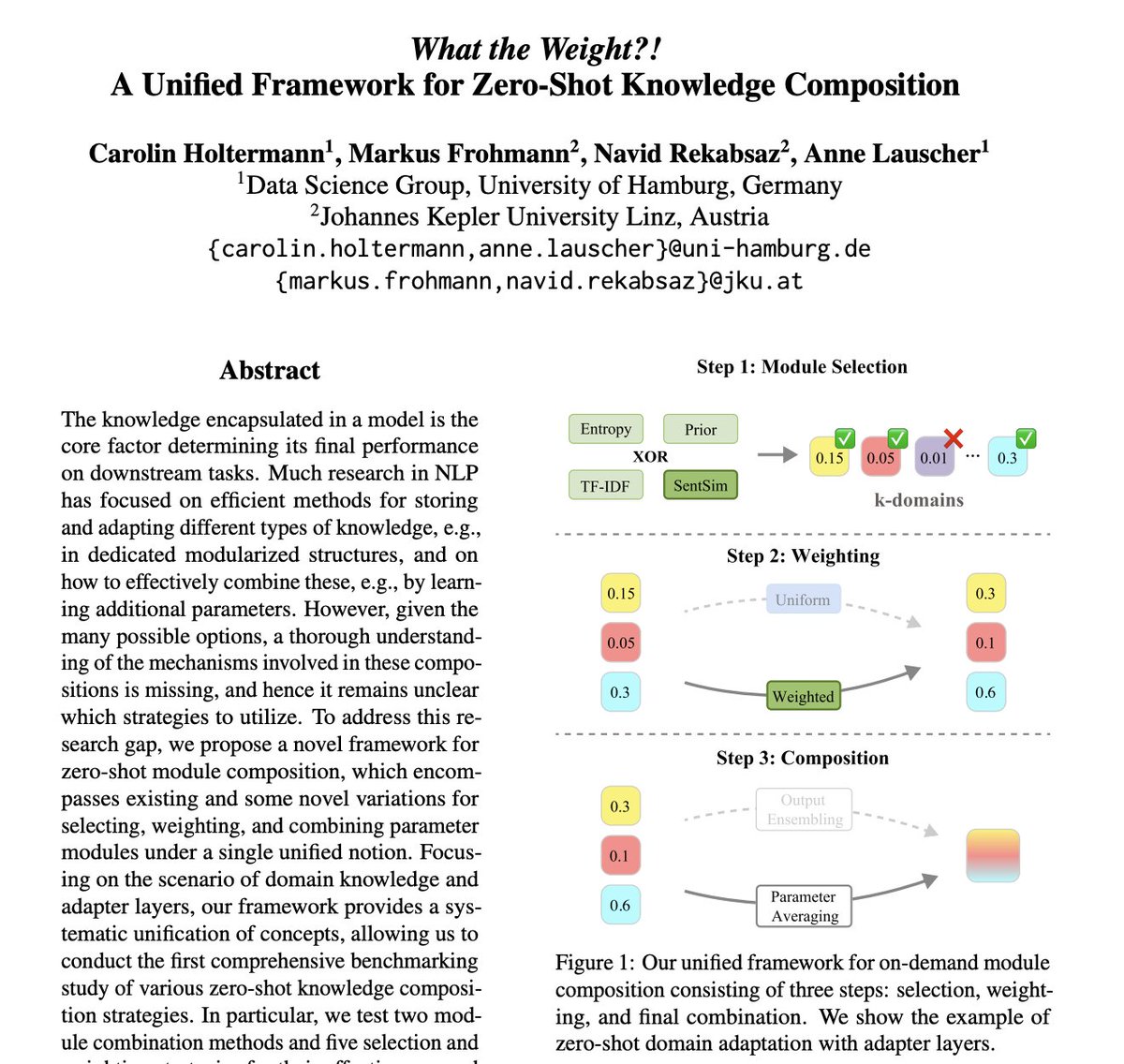

🧠 Which knowledge module do I need and how much of it ⁉️

🌟 Check out our latest paper, "What the Weight?! A Unified Framework for Zero-Shot Knowledge Composition", which has been officially accepted for Findings of the EACL Conference!

arxiv.org/pdf/2401.12756…

English