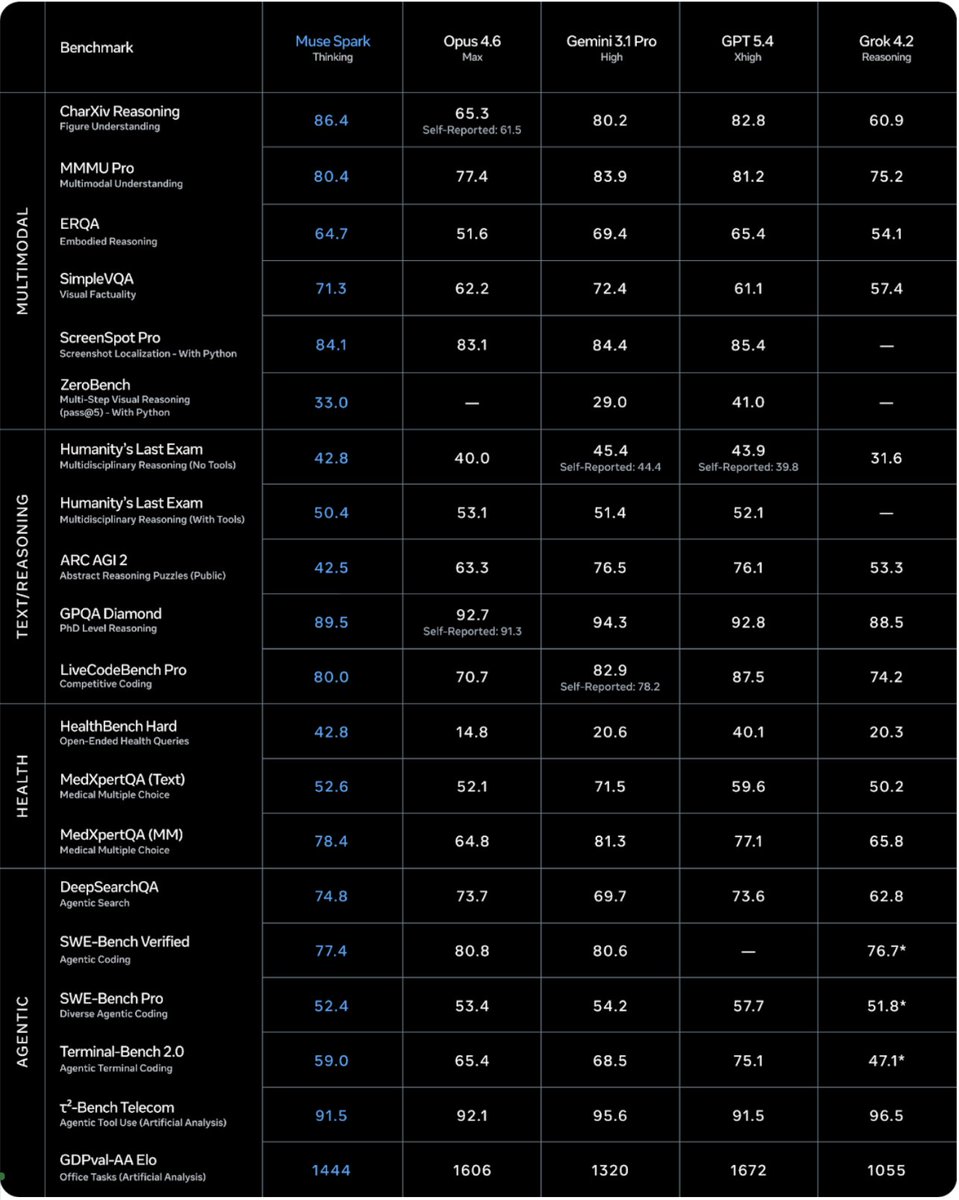

1/ today we're releasing muse spark, the first model from MSL. nine months ago we rebuilt our ai stack from scratch. new infrastructure, new architecture, new data pipelines. muse spark is the result of that work, and now it powers meta ai. 🧵

Han Fang

@Han_Fang_

AI Research @ Meta SuperIntelligence Labs

Muse Spark from MSL is our first model and an exciting early step for the team. It’s been a great journey building it together! While there’s still a gap to the SoTA, this is an exciting start! We’ll keep improving and moving toward personal superintelligence!

Composer 2 is now available in Cursor.

New on the Anthropic Engineering Blog: In evaluating Claude Opus 4.6 on BrowseComp, we found cases where the model recognized the test, then found and decrypted answers to it—raising questions about eval integrity in web-enabled environments. Read more: anthropic.com/engineering/ev…