Pinned Tweet

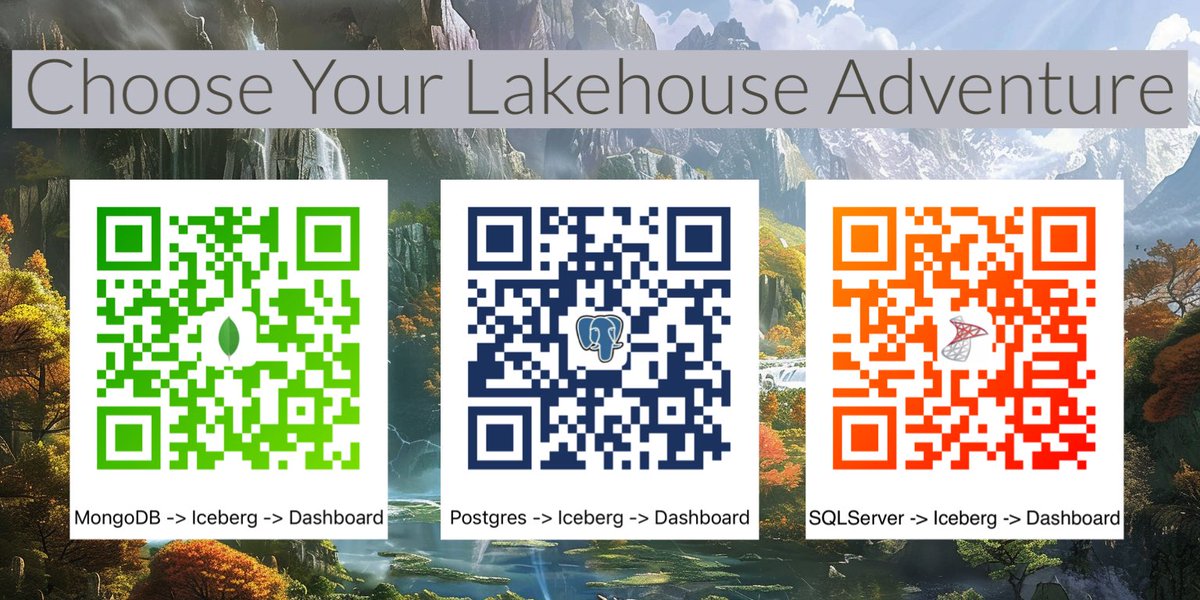

Experience how easy it is to take data from your source data systems, ingest them into Apache Iceberg and serve a BI dashboard from the confines of your laptop with these tutorials.

#DataLakehouse #DataLake #DataEngineering #ApacheIceberg