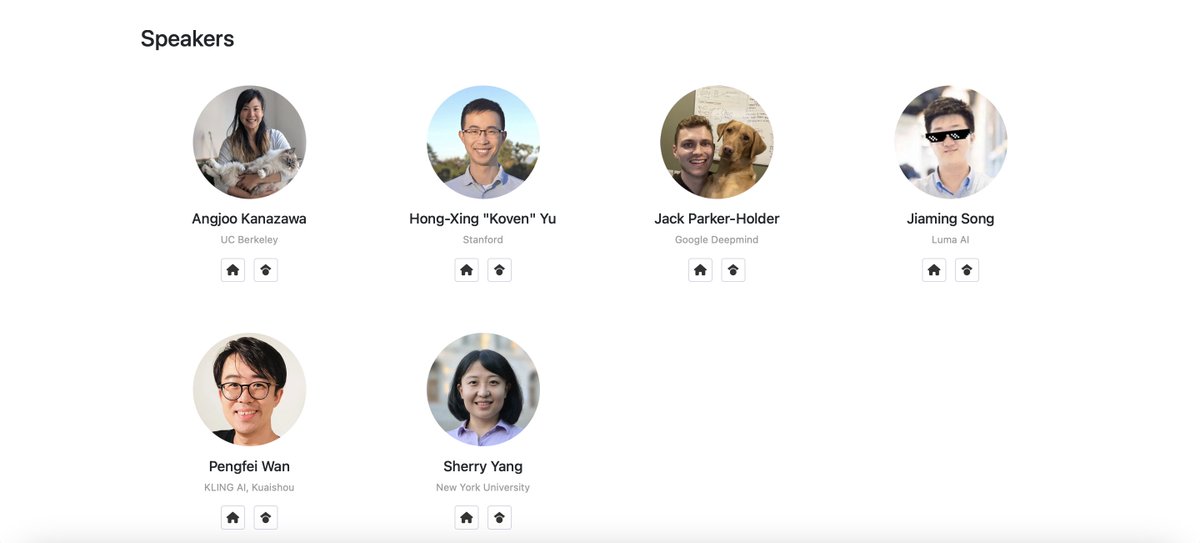

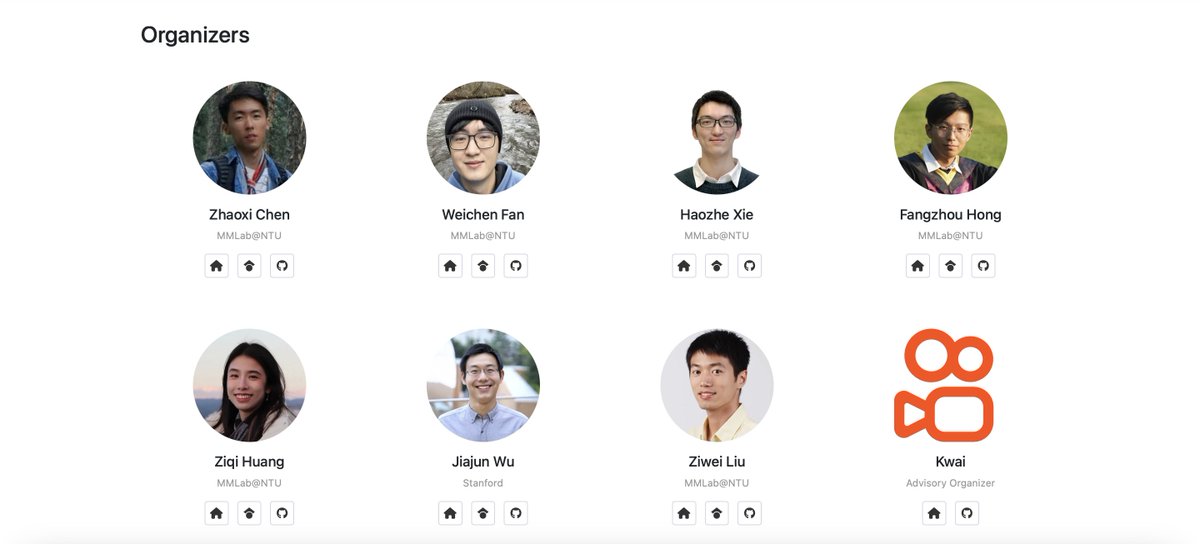

Freshly picked: #NTUsg PhD student Huang Ziqi has been selected as one of 21 global recipients of the 2025 Apple Scholars in AIML PhD Fellowship, a prestigious programme that supports emerging leaders in AI and machine learning through funding, mentorship, and internship opportunities with Apple. She is working on #GenAI technologies that intuitively understand human intentions, making it easier for people to create and control AI-generated visuals such as images and videos. “I see this fellowship not only as a personal milestone but also as a recognition of the supportive community and innovative work taking place at NTU,” says Ziqi. Congratulations to Ziqi and her advisor, Assoc Prof Liu Ziwei, on this international recognition. #NTUsgStudents #NTUsgEducation #NTUsgResearch #AIatNTUsg