Pinned Tweet

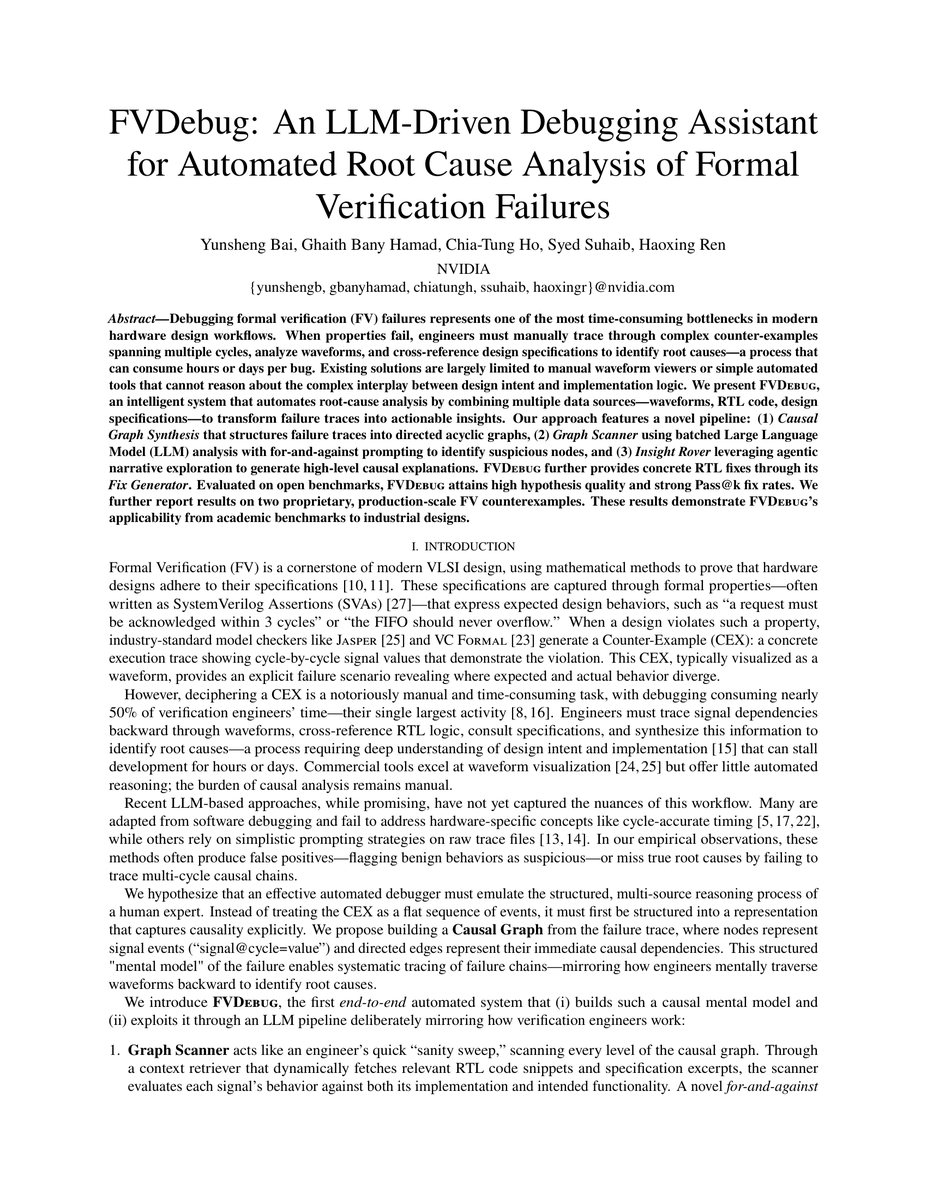

⭕️ Check out the MultiLLM debate on this new paper, "FVDebug: An LLM-Driven Debugging Assistant":

⭕️ Moderator Synthesis: FVDebug Paper Review

Key Agreements

All participants concur on FVDebug's conceptual merit: automating formal verification debugging through causal graphs, multi-source evidence tr...

⭕️ Join the debate: multillm.ai/conversations/… #AI #Research #ML
