Pinned Tweet

Sima Noorani

36 posts

@NooraniSimaa

PhD candidate @Penn

Philadelphia, PA · Joined March 2024

185 Following · 129 Followers

Sima Noorani retweeted

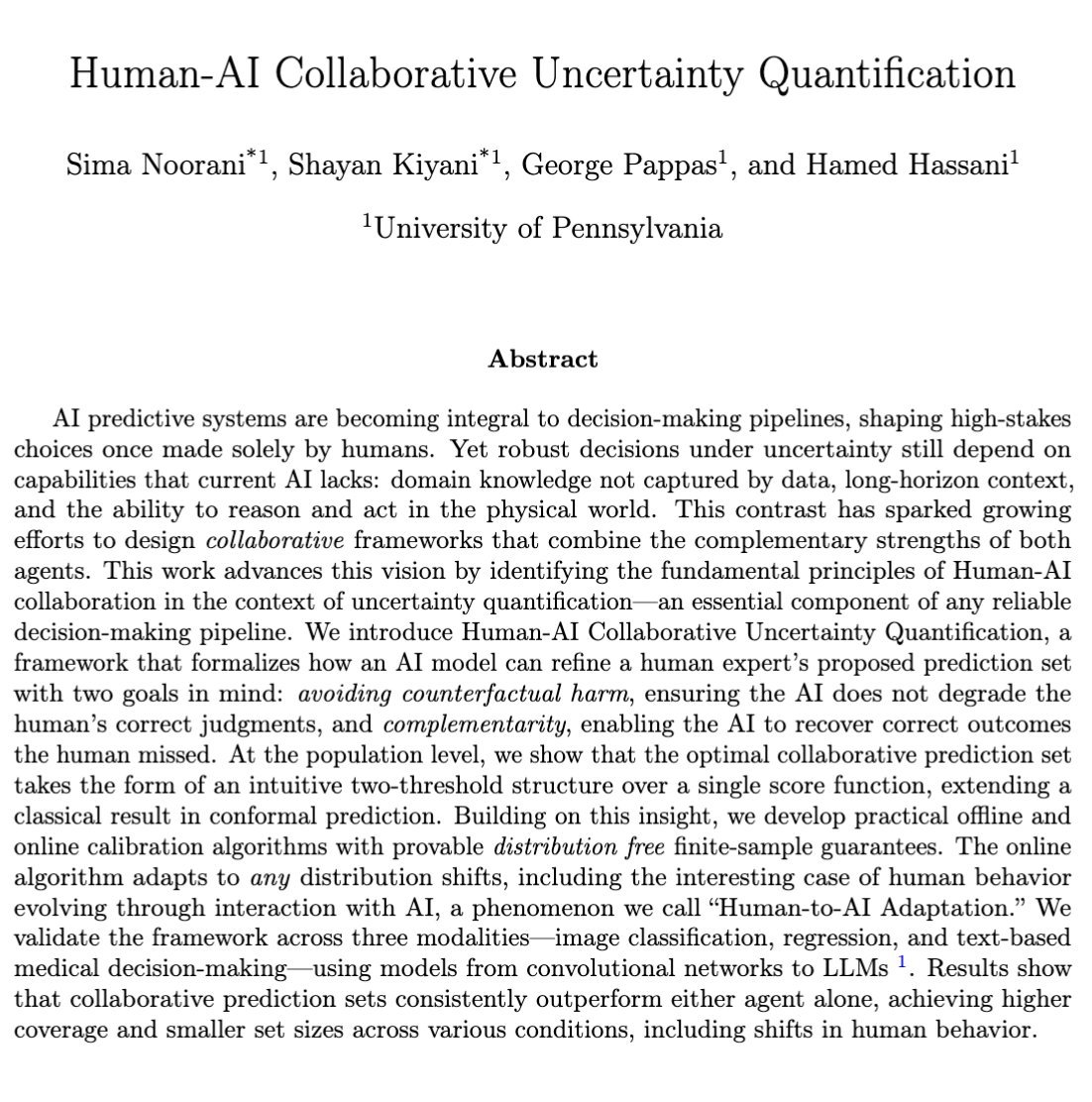

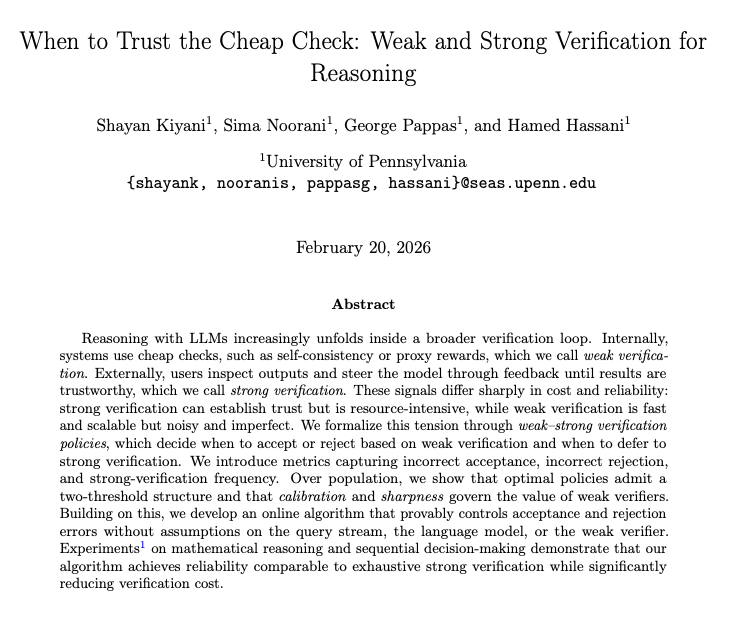

Paper: arxiv.org/pdf/2602.17646

Joint work with the amazing @ShayanKiyani1, @HamedSHassani, @pappasg69

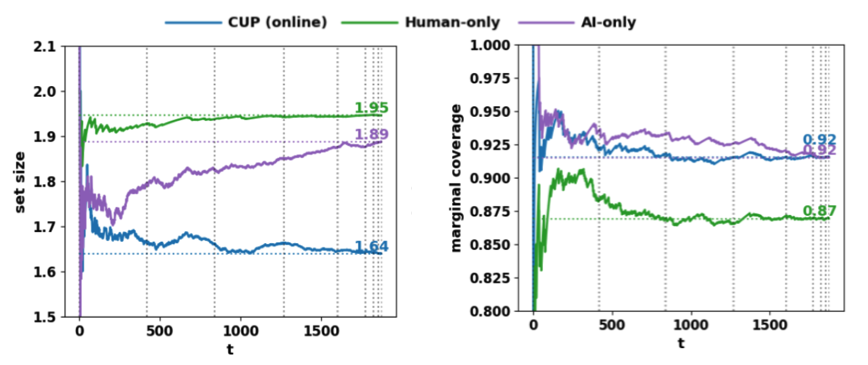

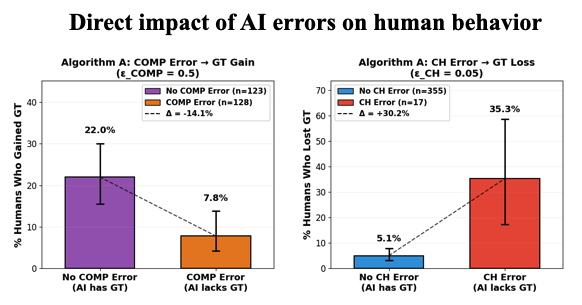

Empirically, we study the framework in both LLM-simulated interactions and a real human crowdsourcing study, and show that enforcing these two principles leads to predictable shifts in downstream human decision quality.

This validates that these human-centric principles serve as practical levers for steering multi-round HAI collaboration dynamics.

We’re presenting our work today at Poster Session 2 (4:30 PM, #3303). Come check it out and chat with us! :) This is joint work with @ShayanKiyani1, @HamedSHassani, and @pappasg69.

Sima Noorani @NooraniSimaa

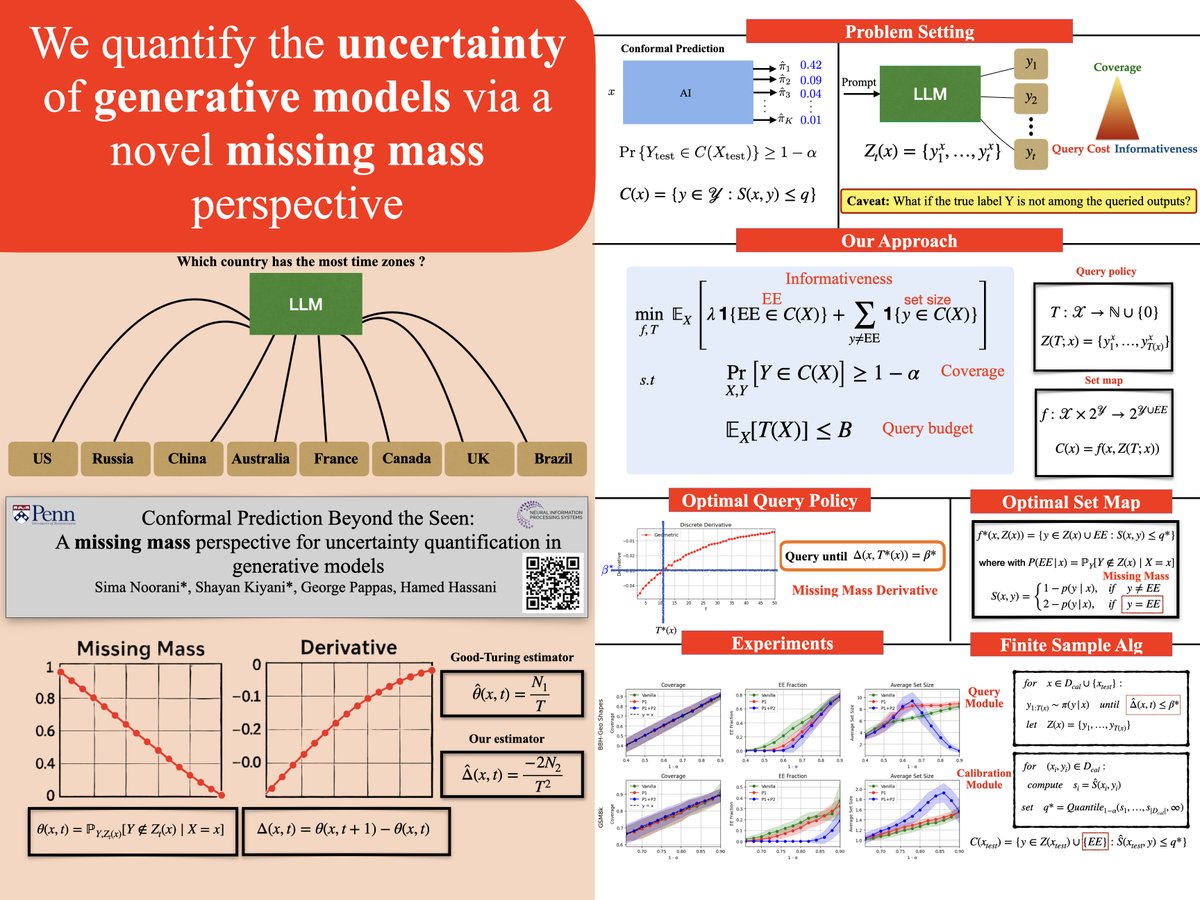

How can we quantify uncertainty in LLMs from only a few sampled outputs? The key lies in the classical problem of missing mass—the probability of unseen outputs. This perspective offers a principled foundation for conformal prediction in query-only settings like LLMs.
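As a rough illustration of the missing-mass idea, one classical estimator is Good-Turing: the fraction of samples whose value was observed exactly once. The sketch below is a generic textbook version, not the method from the paper; the function name `good_turing_missing_mass`, the toy samples, and the convention of returning 1.0 for an empty sample are all assumptions made for illustration.

```python
from collections import Counter


def good_turing_missing_mass(samples):
    """Good-Turing estimate of the probability that the next draw is unseen.

    The estimate is (number of distinct outputs seen exactly once) / n.
    Returning 1.0 for an empty sample is a convention assumed here.
    """
    n = len(samples)
    if n == 0:
        return 1.0
    counts = Counter(samples)
    singletons = sum(1 for c in counts.values() if c == 1)
    return singletons / n


# Hypothetical sampled outputs for a single query.
samples = ["Paris", "Paris", "Lyon", "Paris", "Nice"]
print(good_turing_missing_mass(samples))  # 2 singletons out of 5 samples
```

With few samples this estimate is noisy, so in practice it would typically be paired with a confidence bound rather than used as a point value.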

Paper: arxiv.org/pdf/2510.23476

Joint work with the amazing @ShayanKiyani1, @pappasg69, @HamedSHassani

Sima Noorani retweeted

We push conformal prediction and its trade-offs beyond regression & classification — into query-based generative models.

Surprisingly (or not?), missing mass & Good-Turing estimators emerge as key tools once again.

Very excited about this one!

Sima Noorani @NooraniSimaa

How can we quantify uncertainty in LLMs from only a few sampled outputs? The key lies in the classical problem of missing mass—the probability of unseen outputs. This perspective offers a principled foundation for conformal prediction in query-only settings like LLMs.
