Qinyue Zheng

@QueyJ

I build. AI PhD Student @ETH Zürich | Prev @EPFL, @Harvard, @llama_index

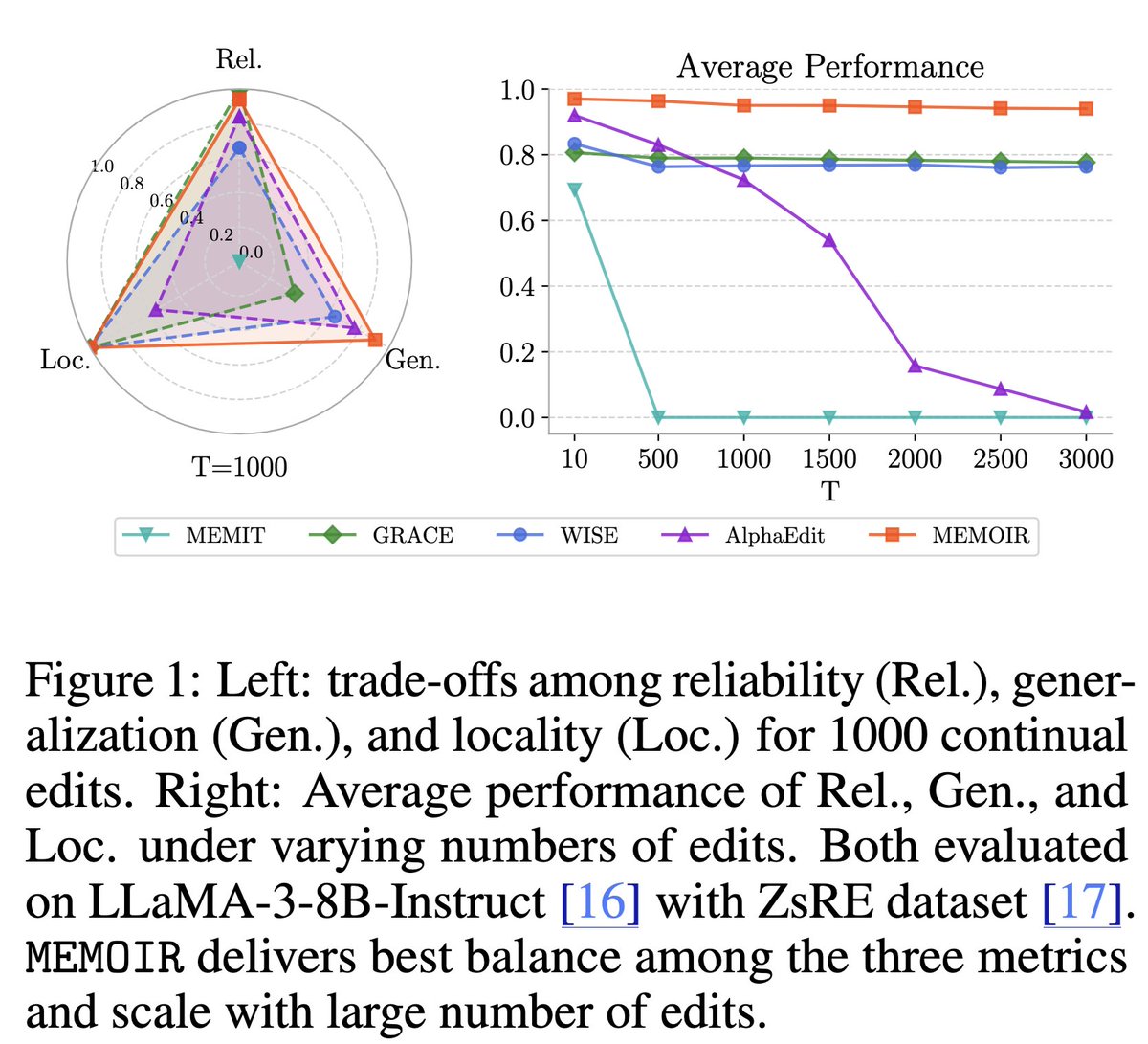

Training LLMs with verifiable rewards uses a 1-bit signal per generated response, which hides why the model failed. Today, we introduce a simple algorithm that lets the model learn from any rich feedback and turns that feedback into dense supervision. (1/n)

Researchers at @ETH_en and @Stanford released an open dataset of 5.8M+ long-form medical QA pairs, each grounded in peer-reviewed literature and designed for RAG. 🚀

The pipeline:
▪️ Source: 900K+ full-text medical papers (S2ORC)
▪️ QA generation via GPT-3.5 with a three-stage filtering process (regex, Mistral-7B classifier, human-in-the-loop)
▪️ Embeddings generated and indexed in Qdrant for scalable dense retrieval

The dataset is available on @huggingface 🤗 with full code for embedding, indexing, and RAG setup.

👉 Full story: qdrant.tech/blog/miriad-qd…
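To make the dense-retrieval step concrete, here is a minimal sketch of the idea: embed passages as vectors, embed the query the same way, and rank by cosine similarity. Everything in it is an assumption for illustration. `TinyDenseIndex`, `tokenize`, and the three passages are hypothetical, the bag-of-words vectors stand in for a real neural embedding model, and the in-memory list stands in for a vector database such as Qdrant. It is not the released pipeline's code.

```python
import math
import re

# Hypothetical toy passages, NOT taken from the dataset.
PASSAGES = [
    "Metformin lowers blood glucose in type 2 diabetes",
    "Statins reduce LDL cholesterol and cardiovascular risk",
    "Beta blockers lower heart rate and blood pressure",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


class TinyDenseIndex:
    """In-memory stand-in for a vector database (assumption, not Qdrant)."""

    def __init__(self, passages):
        self.passages = list(passages)
        # One vector dimension per distinct token seen in the corpus.
        vocab = sorted({tok for p in self.passages for tok in tokenize(p)})
        self.vocab = {tok: i for i, tok in enumerate(vocab)}
        self.vectors = [self.embed(p) for p in self.passages]

    def embed(self, text):
        """Toy embedding: L2-normalized token counts over the corpus vocabulary."""
        vec = [0.0] * len(self.vocab)
        for tok in tokenize(text):
            idx = self.vocab.get(tok)
            if idx is not None:  # tokens outside the vocabulary are ignored
                vec[idx] += 1.0
        norm = math.sqrt(sum(x * x for x in vec))
        return [x / norm for x in vec] if norm else vec

    def search(self, query, top_k=3):
        """Return (passage_id, cosine_score) pairs, best match first."""
        q = self.embed(query)
        scored = [
            (sum(a * b for a, b in zip(q, v)), i)
            for i, v in enumerate(self.vectors)
        ]
        # Sort by descending score, breaking ties by passage id.
        scored.sort(key=lambda s: (-s[0], s[1]))
        return [(i, score) for score, i in scored[:top_k]]


index = TinyDenseIndex(PASSAGES)
for pid, score in index.search("Which drug lowers blood glucose in diabetes?"):
    print(f"{score:.3f}  {PASSAGES[pid]}")
```

In a RAG setup, the top-ranked passages would then be placed in the model's prompt as grounding context. A production system would swap the toy `embed` for a learned embedding model and the list scan for an approximate nearest-neighbor index.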