RawthiL

@Rama_stdout

not THAT kind of doctor...

If you're building AI apps, this matters. Most queries don’t require heavyweight models, but devs still pay GPT-5 prices. pnyx.ai routes each request to the right model (cheap when possible, powerful when required), using @POKTnetwork as the decentralized model layer. Watch it here 👇

TIMESTAMPS
00:00 - Intro to Pnyx and intelligent AI model routing
03:21 - Why AI apps are financially unsustainable today
05:02 - Cost breakdown: GPT-level models vs cheaper alternatives
07:10 - Why Pnyx uses decentralization and Pocket Network
15:34 - Long-term vision
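To make "cheap when possible, powerful when required" concrete, here is a minimal sketch of cost-aware routing. It is not pnyx.ai's implementation: the tier names, capability levels, prices, and the keyword-based difficulty heuristic (`required_capability`, `route`, `REASONING_HINTS`) are all hypothetical placeholders. The router estimates how capable a model the request needs, then picks the cheapest tier that meets that bar.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                # hypothetical model identifier
    capability: int          # hypothetical capability level (higher = stronger)
    usd_per_m_tokens: float  # hypothetical blended price per 1M tokens


# Hypothetical catalogue: names and prices are placeholders, not real quotes.
TIERS = [
    ModelTier("small-model", capability=1, usd_per_m_tokens=0.10),
    ModelTier("mid-model", capability=2, usd_per_m_tokens=1.00),
    ModelTier("frontier-model", capability=3, usd_per_m_tokens=10.00),
]

# Hypothetical keywords treated as signals that a request needs deeper reasoning.
REASONING_HINTS = ("prove", "step by step", "debug", "refactor", "derive")


def required_capability(prompt: str) -> int:
    """Toy difficulty estimate: long prompts need level 2, reasoning keywords need level 3."""
    text = prompt.lower()
    level = 1
    if len(text.split()) > 200:
        level = 2
    if any(hint in text for hint in REASONING_HINTS):
        level = 3
    return level


def route(prompt: str, tiers: list[ModelTier] = TIERS) -> ModelTier:
    """Pick the cheapest tier whose capability meets the estimated requirement."""
    needed = required_capability(prompt)
    eligible = [t for t in tiers if t.capability >= needed]
    if not eligible:
        # Nothing is strong enough: fall back to the strongest tier on offer.
        return max(tiers, key=lambda t: t.capability)
    return min(eligible, key=lambda t: t.usd_per_m_tokens)
```

With this catalogue, `route("What's the capital of France?")` picks `small-model`, while a prompt that mentions "debug" goes to `frontier-model`. Substring matching is deliberately crude; a production router would more plausibly use a learned classifier or feedback on answer quality.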

UPDATE YOUR API URLs. If you are using the free public Pocket Network RPC endpoints, you MUST update your endpoint URLs to api.pocket.network by 11/25.

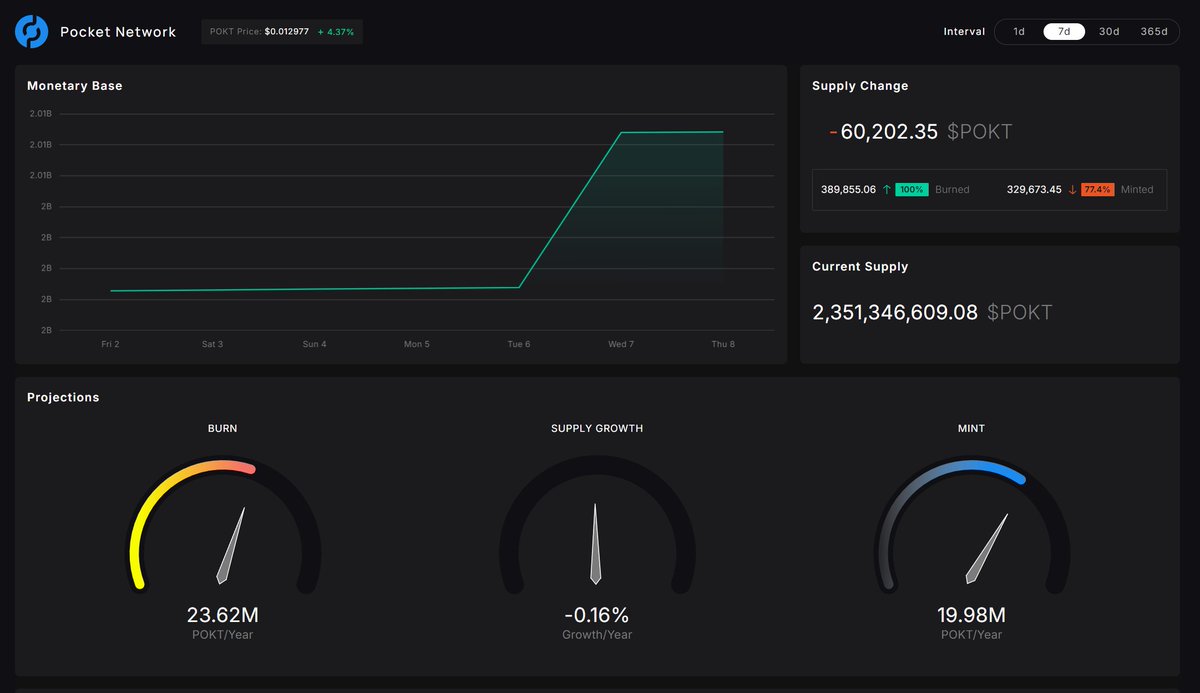

Pocket Network is a @cosmos chain with usage-based tokenomics 📈 That means $POKT is 𝙙𝙚𝙛𝙡𝙖𝙩𝙞𝙤𝙣𝙖𝙧𝙮 👀 We support 100+ sources (e.g., @ethereum, @iotex_io, @gnosischain, @SolanaFndn, @NEARProtocol). What integrations/partnerships do you want to see?

A lot of problems with AI discourse arise because "being good at AI" (called Theory of Mind in this paper) is a skill that seems to be independent of "being great at your job." So you have amazing experts who gain from AI and others who do not, and they don't understand each other.

Ever wished you could have a .pokt address to simplify sending and receiving $POKT? Want an easy-to-remember ENS name for your POKT-based service? Now you can. Pokt has partnered with Unstoppable Domains to bring you .pokt domain names. Get yours now: get.unstoppabledomains.com/pokt/

+1 for "context engineering" over "prompt engineering".

People associate prompts with short task descriptions you'd give an LLM in your day-to-day use. But in every industrial-strength LLM app, context engineering is the delicate art and science of filling the context window with just the right information for the next step. Science because doing this right involves task descriptions and explanations, few-shot examples, RAG, related (possibly multimodal) data, tools, state and history, compacting... Too little, or of the wrong form, and the LLM doesn't have the right context for optimal performance. Too much, or too irrelevant, and the LLM costs might go up and performance might come down. Doing this well is highly non-trivial. And art because of the guiding intuition around LLM psychology of people spirits.

On top of context engineering itself, an LLM app has to:
- break up problems just right into control flows
- pack the context windows just right
- dispatch calls to LLMs of the right kind and capability
- handle generation-verification UIUX flows
- a lot more: guardrails, security, evals, parallelism, prefetching, ...

So context engineering is just one small piece of an emerging thick layer of non-trivial software that coordinates individual LLM calls (and a lot more) into full LLM apps. The term "ChatGPT wrapper" is tired and really, really wrong.
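The "too little vs. too much" trade-off in packing a context window can be sketched as a budgeted selection problem. This is a minimal illustration under stated assumptions, not anyone's production method: each piece of context carries a hypothetical `priority`, the token budget is fixed, and `estimate_tokens` uses a crude 4-characters-per-token rule where a real app would call the model's own tokenizer. The most important pieces are kept first, then emitted in their original order so the prompt still reads naturally.

```python
from dataclasses import dataclass


@dataclass
class ContextPiece:
    kind: str      # e.g. "task", "few_shot", "rag", "history" (illustrative labels)
    text: str
    priority: int  # lower number = more important (hypothetical scheme)


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: assumes ~4 characters per token."""
    return max(1, len(text) // 4)


def pack_context(pieces: list[ContextPiece], budget: int) -> str:
    """Greedily keep the most important pieces that fit in the token budget,
    then join the kept pieces in their original order."""
    by_priority = sorted(range(len(pieces)), key=lambda i: pieces[i].priority)
    kept: set[int] = set()
    used = 0
    for i in by_priority:
        cost = estimate_tokens(pieces[i].text)
        if used + cost <= budget:
            kept.add(i)
            used += cost
    return "\n\n".join(pieces[i].text for i in range(len(pieces)) if i in kept)
```

Greedy selection is only one option; a real app might instead summarize ("compact") low-priority history rather than drop it outright.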

There's a new paper circulating that looks in detail at the LMArena leaderboard: "The Leaderboard Illusion" arxiv.org/abs/2504.20879

I first became a bit suspicious when, at one point a while back, a Gemini model scored #1 way above the second best, but when I tried switching to it for a few days it was worse than what I was used to. Conversely, around the same time, Claude 3.5 was a top-tier model in my personal use, but it ranked very low on the arena. I heard similar sentiments both online and in person. And there were a number of other relatively random models, often suspiciously small, with little to no real-world knowledge as far as I know, yet they ranked quite high too.

"When the data and the anecdotes disagree, the anecdotes are usually right." (Jeff Bezos on a recent pod, though I share the same experience personally.)

I think these teams have placed different amounts of internal focus and decision making around LM Arena scores specifically. And unfortunately they are not getting better models overall but better LM Arena models, whatever that is. Possibly something with a lot of nested lists, bullet points and emoji.

It's quite likely that LM Arena (and LLM providers) can continue to iterate and improve within this paradigm, but I also have a new candidate in mind to potentially join the ranks of "top tier eval". It is the @openrouter LLM rankings: openrouter.ai/rankings

Basically, OpenRouter allows people/companies to quickly switch APIs between LLM providers. All of them have real use cases (not toy problems or puzzles), they have their own private evals, and all of them have an incentive to get their choices right, so by choosing one LLM over another they are directly voting for some combo of capability+cost. I don't think OpenRouter is quite there yet in either the quantity or the diversity of use, but I think something of this kind has great potential to grow into a very nice, very difficult-to-game eval.
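The "usage as a vote" idea can be sketched as a revealed-preference ranking. This is a hypothetical illustration, not OpenRouter's actual methodology: the `(app_id, model_name, tokens_used)` record shape and the `one_app_one_vote` option are assumptions. Raw token share lets one heavy user dominate, so the optional mode normalizes each app's usage before summing, one possible way to make such a ranking harder to game.

```python
from collections import defaultdict

# (app_id, model_name, tokens_used); the record shape is a hypothetical assumption.
Record = tuple[str, str, int]


def usage_rankings(records: list[Record], one_app_one_vote: bool = False) -> list[tuple[str, float]]:
    """Rank models by their share of usage, highest first.

    one_app_one_vote=False: shares are proportional to raw token volume.
    one_app_one_vote=True: each app's usage is normalized to sum to 1 first,
    so one very heavy app cannot dominate the ranking on its own.
    """
    per_app: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for app, model, tokens in records:
        per_app[app][model] += tokens

    scores: dict[str, float] = defaultdict(float)
    for usage in per_app.values():
        app_total = sum(usage.values())
        if app_total == 0:
            continue  # an app with no token usage casts no vote
        for model, tokens in usage.items():
            scores[model] += tokens / app_total if one_app_one_vote else tokens

    grand_total = sum(scores.values())
    if grand_total == 0:
        return []
    shares = [(model, score / grand_total) for model, score in scores.items()]
    return sorted(shares, key=lambda kv: kv[1], reverse=True)
```

For example, if one app sends 900 tokens to `model-x` and two other apps each send 100 tokens to `model-y`, raw volume ranks `model-x` first, while the one-app-one-vote mode ranks `model-y` first.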