Sudharshan Suresh

@Suddhus

Research scientist & tech lead @BostonDynamics Atlas // Prev: @AIatMeta and PhD at @CMU_Robotics.

Robots excel at learning motions from humans, but can they also learn to apply force safely? 💪 Introducing UMI-FT: the UMI gripper equipped with force/torque sensors (CoinFT) on each finger. Multimodal data from UMI-FT, combined with diffusion policy and compliance control, enables robots to apply sufficient yet safe force for task completion. UMI-FT Project website: umi-ft.github.io CoinFT Project website: coin-ft.github.io

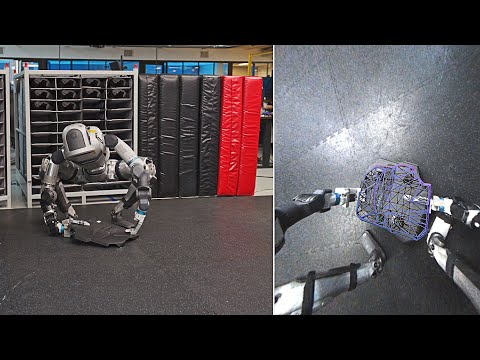

I will join UChicago CS @UChicagoCS as an Assistant Professor in late 2026, and I’m recruiting PhD students in this cycle (2025 - 2026). My research focuses on AI & Robotics - including dexterous manipulation, humanoids, tactile sensing, learning from human videos, robot systems, and anything needed to make robots truly work and improve everyday life. I also place strong emphasis on open-source. Check my homepage to learn more: haozhi.io. Please reach out if you are interested! The deadline is Dec 11th. Link: tinyurl.com/uchiapp.

Excited to announce that we have raised $120M in our Series A to advance the frontier of general-purpose high-performance robots. 🤖 The new funding will accelerate progress towards our mission of bringing foundation-model powered robots to everyone, everywhere. Read more 👇

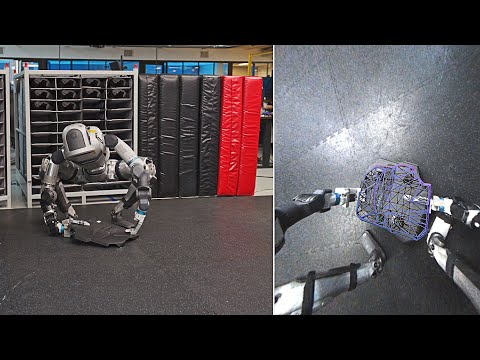

Today I’m proud to share what I’ve been working on recently with my team at @BostonDynamics along with our collaborators at @ToyotaResearch. bostondynamics.com/blog/large-beh…

📹Recording now available! If you missed our workshop at RSS, you can now watch the full session here: youtu.be/7a5HYjQ4wJo?si… Thanks again to all the speakers and participants!

We are excited to host the 3rd Workshop on Dexterous Manipulation at RSS tomorrow! Join us at OHE 122 starting at 9:00 AM! See you there!

July has been a big month for Viser!

- Released v1.0.0 😊
- We did some writing

Some demos 👇