Tarun Mathur

@TarunMathur

#GrowthAdvisory #CorporateStrategy #FoundersAdvice #DealMaker #PE #VC #ScaleupAdvisory | #Fintech #AI #Web3 #EnterpriseTech | #KelloggAlum | RT≠ Endorsement

AI has made polished, interchangeable work abundant, flooding the middle market and compressing the economic value of first drafts and generic execution. Taste, the deliberate human judgment that separates the generic from what is worth pursuing, emerges as the real moat. It is strongest when rooted in deep context, strategic refusal, and authorship rather than surface aesthetics alone. AI compresses execution while inflating the premium on specificity, judgment, and the disciplined choice of what not to build. Enterprises, founders, and investors must stop measuring success by output volume or coding velocity and instead audit every decision against that standard. The edge is no longer in generating competent work; it is in judging what work is worth generating at all. #TMinsights

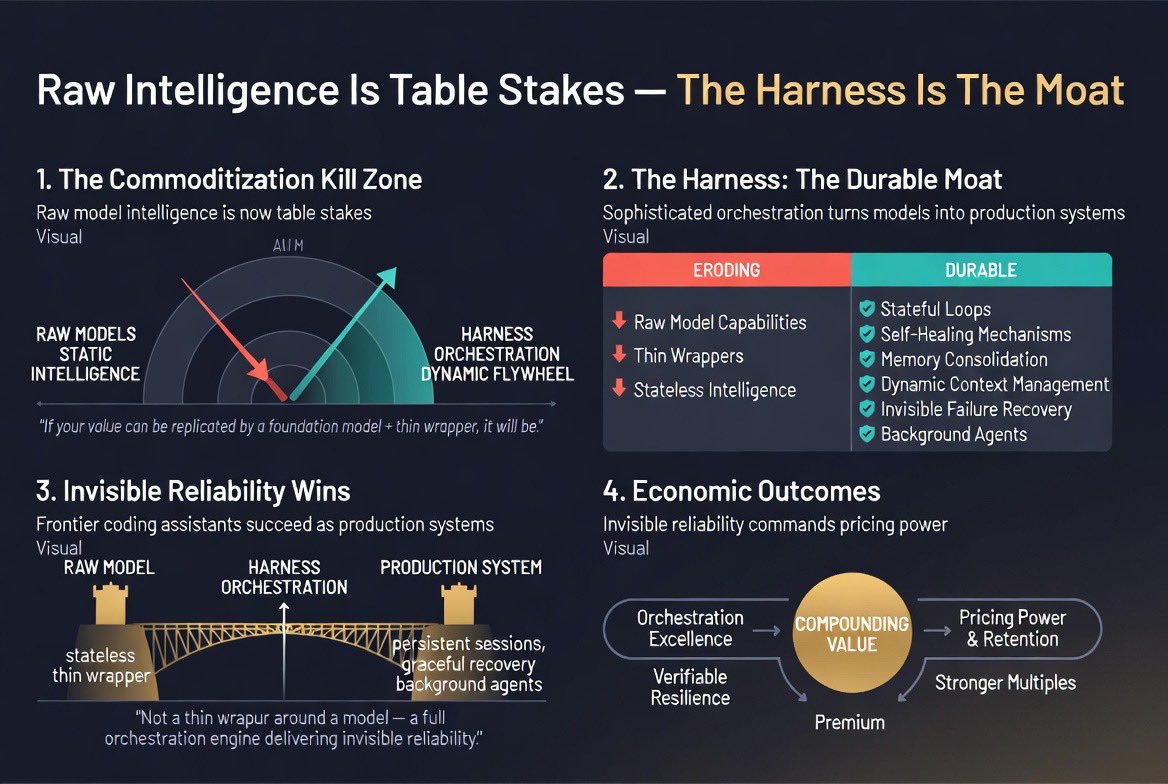

Raw intelligence is now table stakes; the harness that makes intelligence reliable and persistent is the moat. A frontier coding assistant is not a model with a thin wrapper. It is a sophisticated orchestration engine: stateful loops, self-healing mechanisms, memory consolidation, constrained tool use, dynamic context management, invisible failure recovery, and background agents that continuously refine long-term memory. These elements turn a raw, stateless model into a production-ready system that maintains session persistence and recovers gracefully without user intervention. Platforms that deliver invisible reliability command pricing power, higher retention, and premium multiples. Those selling raw capability or thin wrappers face margin compression and duration risk as customers choose on trust and uptime rather than on intelligence alone. Enterprises, founders, and investors must stop treating the model as the product and engineer the harness as the non-negotiable moat. Prioritize verifiable recovery rates, session persistence, and invisible resilience as core product metrics. Orchestration trumps the model. Resilience trumps secrecy. The edge is no longer in the weights; it is in the harness that makes the weights production-ready and persistently useful. #TMinsights
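
To make the harness idea concrete, here is a minimal sketch in Python of three of the elements named above: a stateful loop, invisible failure recovery, and constrained tool use, plus a recovery counter as a product metric. Everything in it is an assumption for illustration. `Harness`, `make_flaky_model`, the retry policy, and the four-message context window are hypothetical and do not describe how any real coding assistant is built.

```python
import json
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Harness:
    """Hypothetical harness wrapping a stateless model call with state and recovery."""
    model: Callable[[str], str]              # stateless model call (assumed interface)
    tools: Dict[str, Callable[[str], str]]   # allow-list of tools (constrained tool use)
    max_retries: int = 3
    history: List[str] = field(default_factory=list)  # session state kept across turns
    attempts: int = 0                        # total model calls made
    recoveries: int = 0                      # turns that succeeded only after a retry

    def call_tool(self, name: str, arg: str) -> str:
        # Only tools on the allow-list may run.
        if name not in self.tools:
            raise PermissionError(f"tool not allowed: {name}")
        return self.tools[name](arg)

    def step(self, prompt: str) -> str:
        """Run one turn, retrying failed model calls without surfacing them."""
        # Crude context management: last four history entries plus the new prompt.
        context = "\n".join(self.history[-4:] + [prompt])
        last_error = None
        for attempt in range(self.max_retries):
            self.attempts += 1
            try:
                reply = self.model(context)
            except RuntimeError as err:
                last_error = err
                continue
            if attempt > 0:
                self.recoveries += 1
            self.history += [prompt, reply]
            return reply
        raise RuntimeError("model failed after retries") from last_error

    def save(self) -> str:
        # Serialize session state so it can outlive the process.
        return json.dumps({"history": self.history})

    def load(self, blob: str) -> None:
        self.history = json.loads(blob)["history"]


def make_flaky_model(fail_first: int) -> Callable[[str], str]:
    """Hypothetical stand-in model that fails its first `fail_first` calls."""
    state = {"calls": 0}

    def model(context: str) -> str:
        state["calls"] += 1
        if state["calls"] <= fail_first:
            raise RuntimeError("transient failure")
        return f"ok:{len(context.splitlines())}"

    return model


harness = Harness(model=make_flaky_model(2), tools={"echo": lambda s: s})
first_reply = harness.step("hello")   # two hidden failures, then success
second_reply = harness.step("again")  # sees prior turn in its context
```

In this toy version the caller never sees the two transient failures; they only show up in `harness.recoveries`, which is the kind of number a "verifiable recovery rate" would be built from. A production harness would add backoff, memory consolidation, and durable storage, none of which are modeled here.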