Pinned post

Adam J. Smith

597 posts

@adamjsmith

Climbing the energy-information gradient. VP Product at ZoomInfo (AI, ML) - bad takes are my own. 🇺🇸

Philadelphia · Joined May 2022

1.8K Following · 292 Followers

Someone is going to build a world-class “Brain” for enterprises & make a stupid amount of money.

Why? As @da_fant said, “coding w ai is solved bc all context is in the git repo. knowledge work is difficult bc context is spread out. an ai system that creates a git repo w all context for a knowledge worker will be able to 100% automate the work.”

When companies talk about being data ready for AI, this is what they’re implicitly saying.

Engineering has been prepared for this moment for a long time because of the deterministic nature of code, the centralization/versioning of data (read: GitHub), and AI tools that are largely built by engineers for engineers.

But for the rest of white collar work, there’s a TON of catching up to do to properly harness the power of the technology.

The big challenge here, and the reason no one has truly cracked the code for "an ai system that creates a git repo w all context for a knowledge worker," is that unlike code, most knowledge is 1) distributed, 2) unstructured, and 3) unverifiable.

It's distributed: transcripts live in Granola. Documents in Notion. Customer data in HubSpot. ERP. Emails. Slack messages. Random spreadsheets. SOP docs. Etc. Etc.

Building an ingestion engine that connects to all of your disparate data sources and auto-updates based on the shelf-life of the data is the first, and frankly, easiest step of the process.
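A minimal sketch of the "auto-updates based on shelf-life" idea, assuming each connected source carries its own freshness window. The `Source` class, the source names, and the shelf-life values are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Source:
    name: str               # hypothetical connector name, e.g. "slack"
    shelf_life: timedelta   # assumed: how long a sync of this source stays fresh
    last_synced: datetime


def stale_sources(sources, now):
    """Return the names of sources whose last sync is older than their shelf life."""
    return [s.name for s in sources if now - s.last_synced > s.shelf_life]


now = datetime(2026, 1, 1, 12, 0)
sources = [
    Source("slack", timedelta(hours=1), now - timedelta(hours=3)),
    Source("notion", timedelta(days=1), now - timedelta(hours=3)),
    Source("sop_docs", timedelta(days=30), now - timedelta(days=45)),
]
print(stale_sources(sources, now))  # ['slack', 'sop_docs']
```

Fast-moving sources like chat get a short window and slow-moving ones like SOP docs a long one, so a scheduler would only re-ingest what has actually gone stale.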

Next, it's unstructured: let's say I want to create a proposal for a potential client. To nail the proposal, I want it to pull important information from a variety of sources. The specific asks & background from our initial sales call. Previous proposals to anchor ourselves to a proven format. And completed sprint boards from Linear, so the pricing & timeline in the document is grounded in truth.

Whether it's a thoughtful filesystem (a la Obsidian) or an OpenClaw-esque memory structure, the brain needs to be great at self-organizing in a thoughtful schema. This is very hard, especially if you want to build a generalizable brain that can be shaped to an array of different enterprises.

And finally, most knowledge is unverifiable: writing a function, running a unit test, and seeing if the code works is easy. It works or it doesn't. Using AI to accelerate your content creation process is highly subjective. What is a good/bad idea? Is the content in your voice or not? Does it feel like slop or novel? Answering these questions is both difficult and non-verifiable.

That same system described above doesn't just have to be great at organizing & forming coherent relationships, but it also has to be great at self-improving based on feedback from the user. Memory systems (like those introduced by OpenClaw) are great to a point, but as you scale the corpus of data within your company's brain, things like compaction and cleaning become wildly important to avoid the needle in the haystack problem.

Someone is going to figure out how to solve this problem, and when they do, not only will they make a shit ton of money, but they'll be Robin Hood for knowledge workers, enabling non-engineers to enjoy the sort of leverage that only technical folks have felt for the last few years.

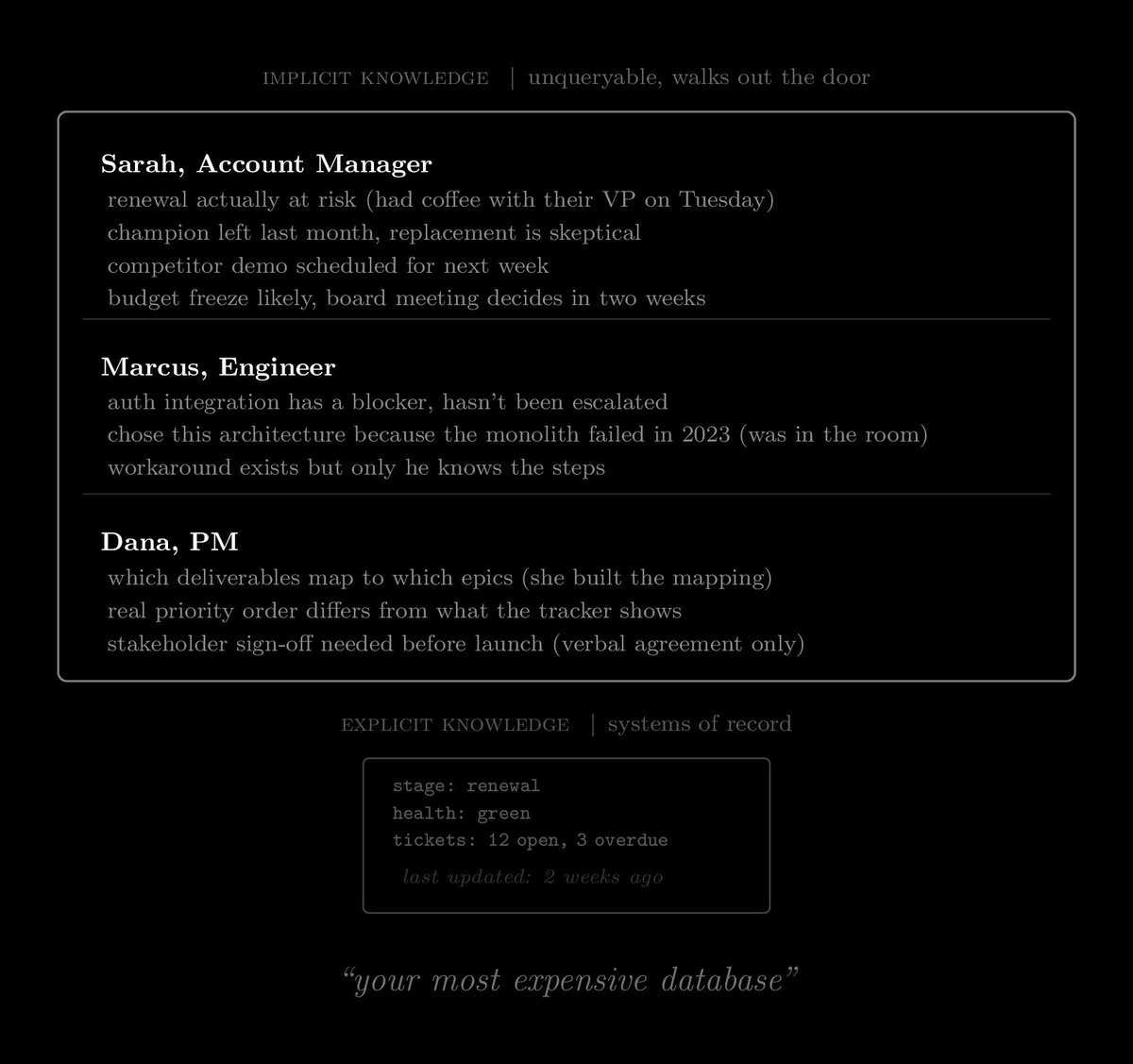

Your most expensive database is probably your payroll, because every employee stores business state that exists nowhere else. The account manager knows the real status of the renewal because she had coffee with the VP last week, and the engineer knows why that architecture decision was made because he was in the room, and all of that took months to embed through onboarding and hallway conversations.

It walks out the door every time someone leaves, and you can't query it with an AI system because none of it was ever written down. We built a whole data architecture around human memory and then wondered why the AI can't find anything useful to retrieve.

The companies I see getting ahead on AI started capturing decisions and context as a byproduct of how people already work, so when the model goes looking for institutional knowledge, it's actually there.

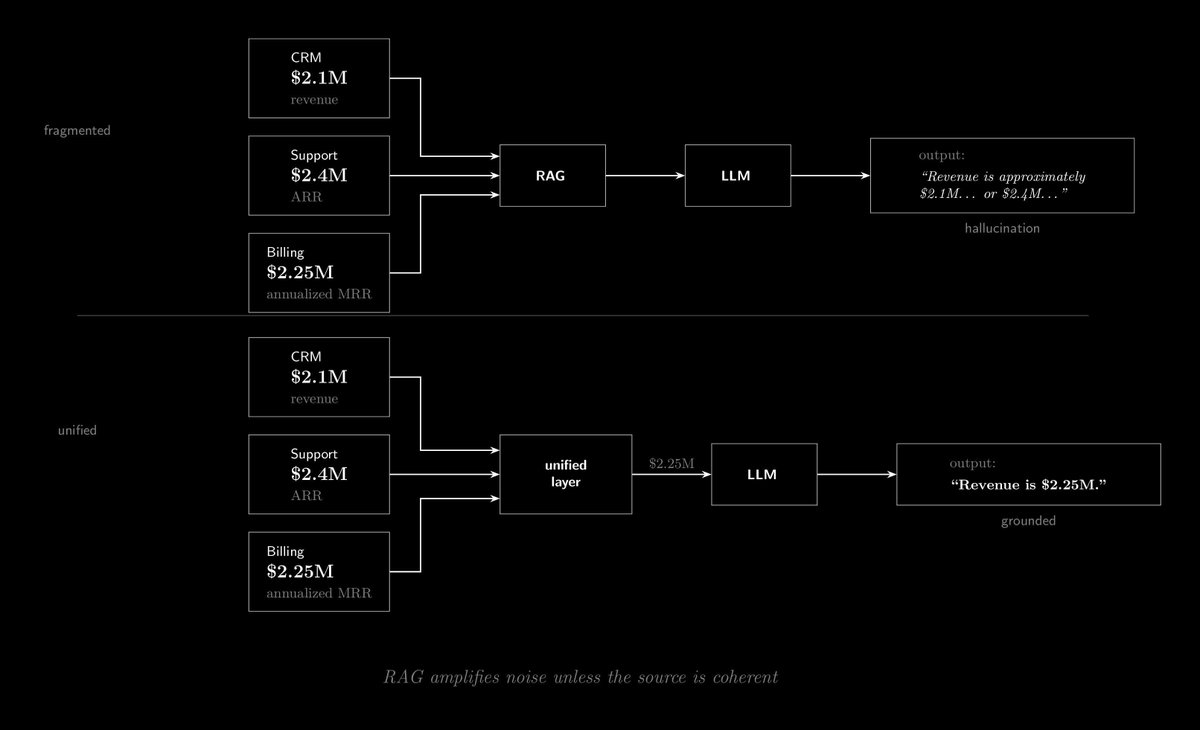

The default enterprise AI play right now is RAG, where you pull context from your systems and feed it to a model, and it makes sense until you look at what's actually being retrieved.

Your CRM says the customer is worth $2.1M, billing says $2.25M annualized from MRR, support says $2.4M in ARR. You retrieve all of that and the model gets three conflicting numbers with no way to know which one is right, so it fills the gap from training data instead of your actual business state, and that's where the hallucinations come from.

I keep seeing teams spend months on model selection and prompt engineering when the actual problem is upstream. RAG just moves fragmented data into the model faster, which means the contradictions arrive faster too.

The teams getting real value from this figured out which source is canonical for what and cleaned up the conflicts before they started building, which is tedious but it's where the results actually are.
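One way to picture "which source is canonical for what" is a small lookup applied before anything reaches the model. This is a sketch under stated assumptions: the `CANONICAL_SOURCE` mapping and the choice of billing as the authority for contract value are hypothetical, not something the post specifies.

```python
# Hypothetical mapping: which system is treated as authoritative for each field.
CANONICAL_SOURCE = {"contract_value": "billing", "company_name": "crm"}


def resolve(field, records):
    """Return the canonical system's value plus any disagreeing values elsewhere."""
    source = CANONICAL_SOURCE[field]
    value = records[source]
    conflicts = {
        system: v for system, v in records.items()
        if system != source and v != value
    }
    return value, conflicts


# The three conflicting numbers from the example above.
records = {"crm": 2_100_000, "billing": 2_250_000, "support": 2_400_000}
value, conflicts = resolve("contract_value", records)
print(value)      # 2250000
print(conflicts)  # {'crm': 2100000, 'support': 2400000}
```

The model then sees one number, and the conflicts become a cleanup queue for humans instead of noise in the retrieved context.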

if your AI initiative isn’t working it’s not the models. the models are good enough, they’ve been good enough for a while. the bottleneck is fragmentation.

you have the same customer represented differently across six (or sixty) systems. the CRM says “Acme Corporation,” support says “ACME Corp,” billing says “Acme Inc.” a human pattern-matches through that without thinking. an AI system hits conflicting records and starts guessing. guessing is hallucination.
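a rough sketch of the pattern-matching a human does on those three names, assuming simple suffix stripping is enough. the `LEGAL_SUFFIXES` list is an illustrative, incomplete assumption, and real entity resolution usually needs more than this (addresses, domains, fuzzy matching).

```python
import re

# Assumed, incomplete list of legal suffixes to ignore when matching names.
LEGAL_SUFFIXES = {"corporation", "corp", "inc", "incorporated", "llc", "ltd", "co"}


def normalize_company(name):
    """Lowercase, strip punctuation, and drop trailing legal suffixes."""
    tokens = re.sub(r"[^a-z0-9\s]", "", name.lower()).split()
    while tokens and tokens[-1] in LEGAL_SUFFIXES:
        tokens.pop()
    return " ".join(tokens)


names = ["Acme Corporation", "ACME Corp", "Acme Inc."]
print({normalize_company(n) for n in names})  # {'acme'}
```

all three records collapse to one key, which is the point: the system stops guessing because it no longer sees three customers.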

think about it as a signal problem. your business data is signal. fragmentation introduces noise in two ways: gaps in coverage, where the model fills in from its training data, and conflicting information across systems, which degrades the signal further. more fragmentation, noisier channel, less reliable output.

this is also why bolting on RAG keeps disappointing people. retrieval-augmented generation works when the retrieved context is coherent. retrieve conflicting information from three systems and you’ve amplified the noise.

before you fund another AI pilot, audit where your institutional knowledge actually lives. how much is in systems vs. in people’s heads? how consistent is it across sources? the strategic priority is data and state coherence. everything else depends on it.

The best investment any company can make right now is upskilling and releveling talent to be AI-first. If the people in your business can’t leverage AI, your business can’t leverage AI. AI isn’t going to just show up, replace everyone, and take over. It will be integrated collaboratively with our existing workflows and teams.

Over time, yes, you’ll need fewer people to get the same amount of work done. But that’s exactly what happened with computers, the internet, and networked information systems. They all created massive leverage, but none of it worked without people. AI will follow the same pattern.

Forget about hypothetical AGI or superhuman intelligence for now. The real question is the next 5 to 10 years. How will your business succeed and take advantage of this technology? It comes down to one thing: investing in talent.

Every single-player tool eventually loses to a multiplayer tool.

What’s interesting about the current state of applied AI, especially in the enterprise, is that most applications are either:

1. Single-player by design, or

2. Multiplayer by legacy, meaning AI is being plugged into an existing multiplayer system and doing the best it can.

But the AI itself usually isn’t “multiplayer” in those contexts. It feels like we haven’t yet seen the biggest companies or the top players in most verticals, the ones that will truly embrace multiplayer AI.

Who’s actually building multiplayer AI today?

There are basically two dominant stories being told about AI right now, and neither of them is right.

One says AI is overhyped, unreliable, and basically vaporware. The other says we’re on the verge of post‑AGI, that it’s the most transformative technology we’ve ever seen, and everything will change in the next couple of years. I think both of those takes are wrong.

There are two other stories that I think better capture where we're at. First, foundational AI research is progressing incredibly fast. We’ve seen multiple orders of magnitude in performance improvements and cost reductions over just the last few years. Second, applied AI, the part that actually drives productivity, economic growth, and real value, is lagging.

One big reason is that the talent needed to translate raw model capabilities into enterprise value is scarce. OpenAI, Anthropic, Google DeepMind, they’ll capture some value, of course. But most of the long‑term value will come from the businesses that figure out how to build on top of this technology and make it useful. Think of the early internet: right now, we’re basically in the pre‑2000s phase. The tech is revolutionary, but the applications haven’t fully caught up yet.

Both things can be true: AI can be disappointing today in many ways, and at the same time, it can be a revolutionary technology whose impact is inevitable once the foundational progress and the applied use cases converge.

Over the next couple of years, 2026 and 2027, we’re going to see the rise of more verticalized, hyperfocused AI and intelligence systems. I’m talking about small language models, fine-tuned architectures, and federated model systems.

It feels similar to what happened after the cloud transition. When software “ate the world,” we saw a Cambrian explosion of SaaS, every conceivable type of software for every use case and vertical. That kind of verticalization hasn’t really happened in AI yet. So far, we’ve mostly seen legacy SaaS vendors bolting AI onto their products, rather than true ground-up vertical AI systems.

Part of the reason is that foundational AI research is moving faster than enterprises can apply it in real time. And the talent to build these specialized systems just isn’t widespread yet. But I think the next two years will mark the early stages of that wave.

That’s basically what ZoomInfo is doing right now, building a federated intelligence system specifically for GTM.

The biggest friction for AI over the next two years will be at the applied level, not the foundational level. The models and core capabilities are advancing quickly, but enterprises are struggling to actually integrate them into workflows and get real leverage.

The gap is mostly on the talent side. There just aren’t enough engineers or architects who know how to work with these models and meaningfully embed them into business processes. That’s where adoption will lag.

I wouldn't say we celebrate the startup over the small business owner, but raising $4m is an event, whereas earning it is a process.

Generally as a society we don't celebrate processes, but we love to make noise about events.

Think about all major life celebrations: they're usually the culmination of a process (graduation) or the start of one (marriage).

No one cares about the startup after the raise until there's some other event that must be bigger and better than the one before.

The process is what matters fwiw.

Justin Welsh@thejustinwelsh

We strangely celebrate the startup that raises $4M more than the small business owner who earns $4M.

You can read my full take here open.substack.com/pub/admjs/p/ag…

Berkeley's new study of AI agents in production has a finding worth sitting with: 68% of deployed agents execute at most 10 steps before requiring human intervention.

Teams achieve reliability by constraining autonomy. Read-only access, sandboxed environments, humans approving final actions.
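A toy sketch of what "constraining autonomy" can look like in code, assuming a step budget plus an approval gate on anything that writes. The `run_bounded` function, the step kinds, and the action names are hypothetical and not taken from the study.

```python
def run_bounded(steps, max_steps=10, approve=lambda action: False):
    """Run steps up to a budget; any "write" step needs explicit approval.

    `steps` is a list of (kind, action) pairs where kind is "read" or "write".
    Returns (log_of_executed_actions, status).
    """
    log = []
    for i, (kind, action) in enumerate(steps):
        if i >= max_steps:
            return log, "needs_human: step budget exhausted"
        if kind == "write" and not approve(action):
            return log, f"needs_human: approval required for {action!r}"
        log.append(action)
    return log, "done"


plan = [("read", "fetch_account"), ("read", "fetch_invoices"), ("write", "send_email")]
print(run_bounded(plan))
# (['fetch_account', 'fetch_invoices'], "needs_human: approval required for 'send_email'")
```

By default nothing that writes is approved, so the agent does the read-only work and hands the final action to a person.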

The current generation of successful deployments works by being simple and bounded. Whether that's a temporary phase or a permanent feature of enterprise AI is the open question, but it's what's working today.

I’ve been thinking about progress in general as having three gates in series: computation (resolving uncertainty), coordination (getting nodes to agree), and transcription (moving atoms).

You can only move as fast as your slowest gate. For applied AI right now, coordination is the bottleneck dragging everything else down.

We’re not short on capability, we’re short on agreement and standards about how to use it. The same was true of the internet at first, lots of possibility and lots of failed experiments at the application layer.

We are still very early.
