Pinned Tweet

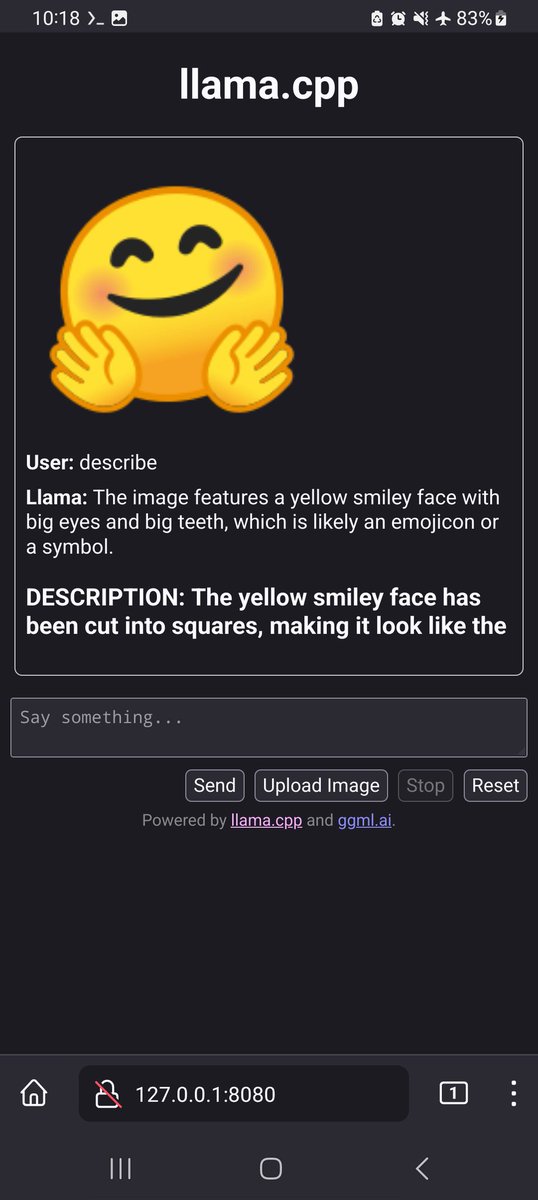

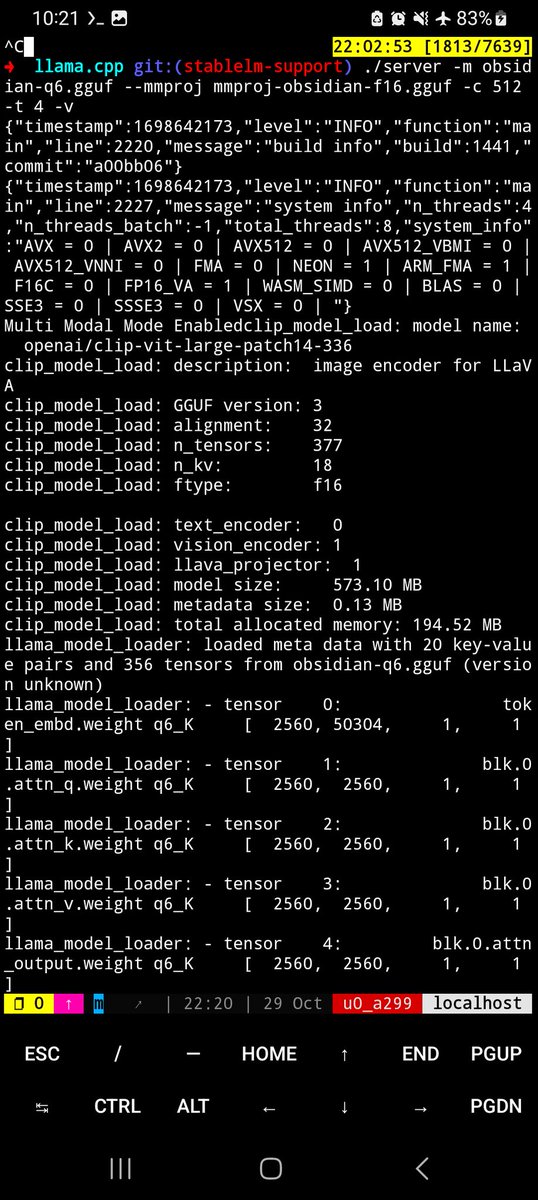

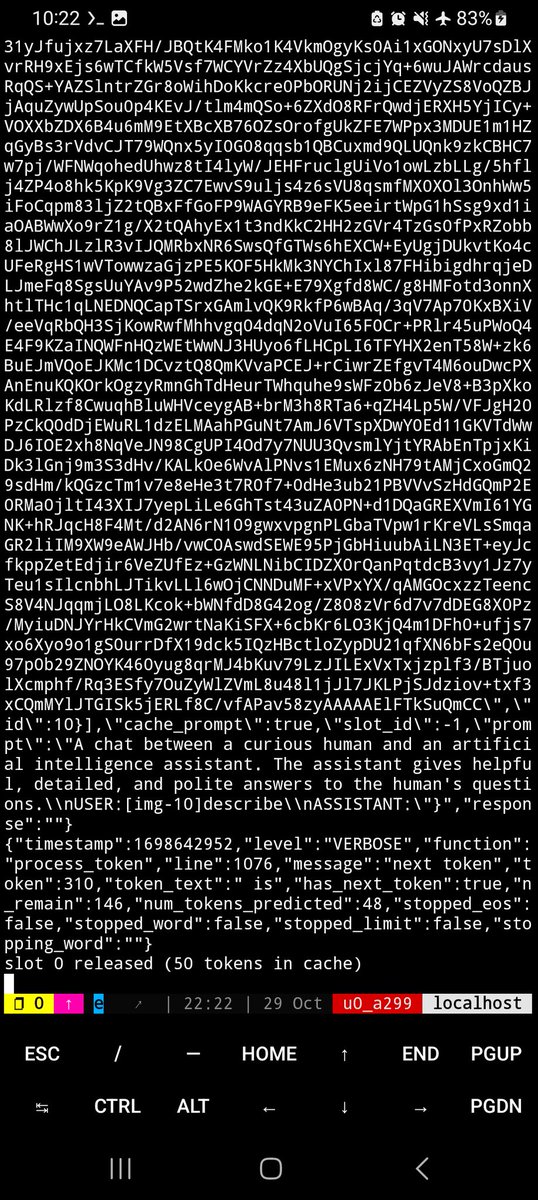

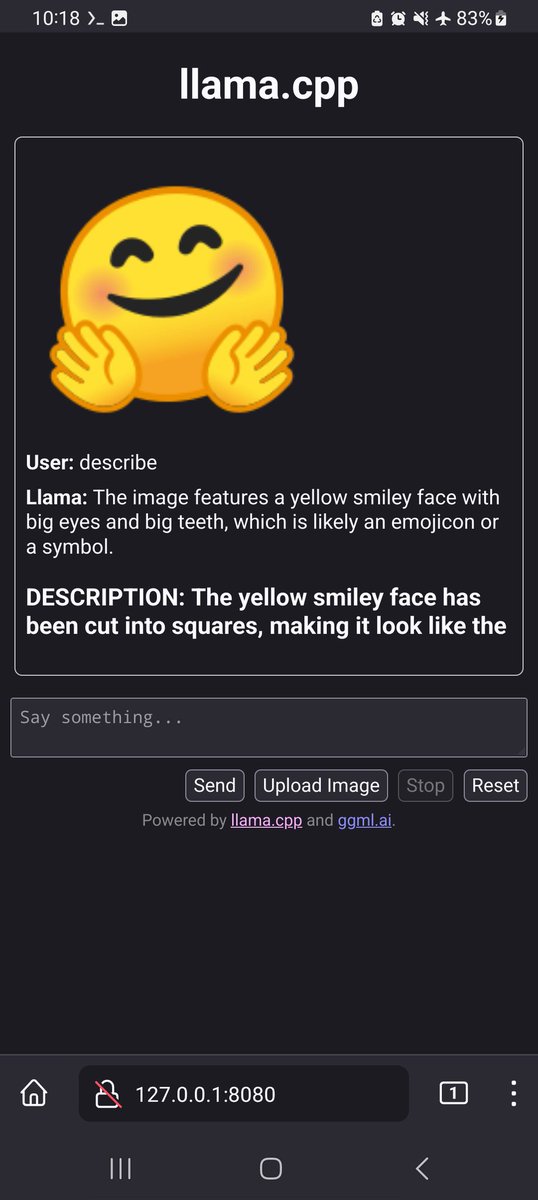

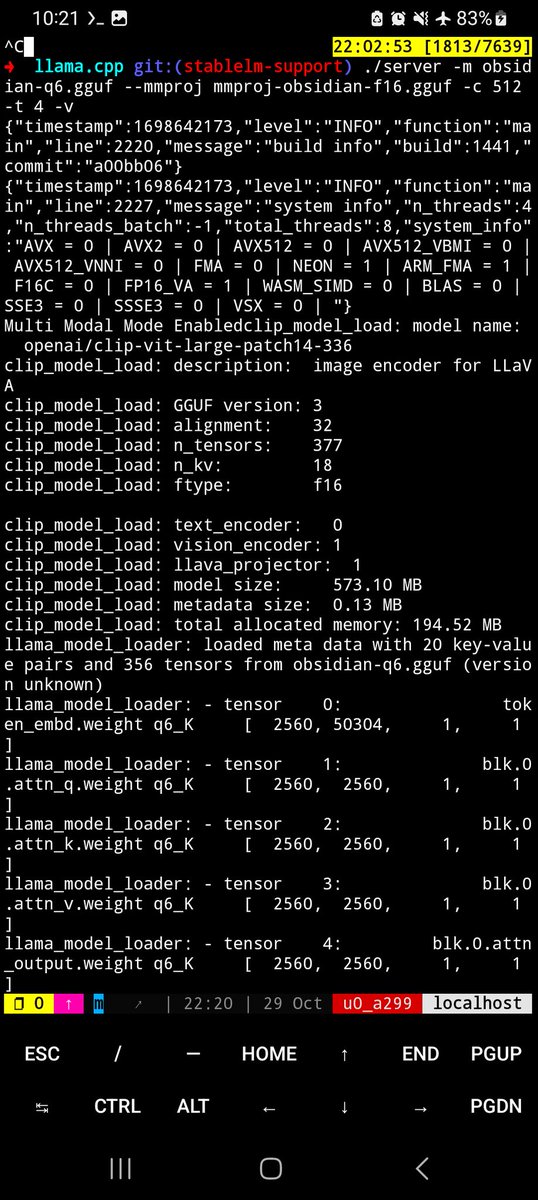

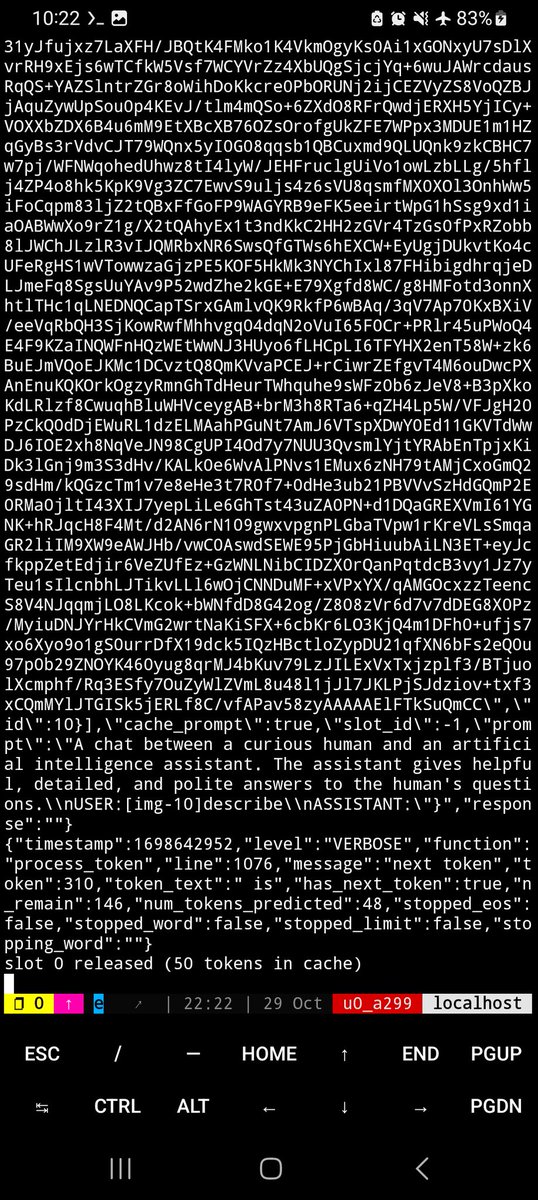

CPU-based offline multimodal inference on an Android phone. Original model from @NousResearch; quantization done by @nisten.


afrideva


I just trained a 124M-parameter LLM from scratch. It took ~26 seconds in @GoogleColab.

Training details:
• 5145 tokens in training set
• 1024 tokens in context window
• 256 tokens per batch
• 10 epochs total

The model went from generating gibberish to full sentences. Extremely cool.
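The post does not name the architecture, so the sketch below rests on two labeled assumptions. First, it assumes the 124M figure refers to a GPT-2 "small"-style decoder (12 layers, 768-dim embeddings, 50257-token vocabulary, tied input/output embeddings), which matches the stated 1024-token context window. Second, it reads "256 tokens per batch" as the number of training tokens consumed per optimizer step, with the leftover partial batch dropped. Under those assumptions it checks the parameter count and estimates how many steps the 10-epoch run took.

```python
# Hypothetical config: assumes a GPT-2 "small"-style model. The post only
# states ~124M parameters and a 1024-token context window.
VOCAB = 50257    # assumed GPT-2 BPE vocabulary size
CONTEXT = 1024   # context window from the post
D_MODEL = 768    # assumed embedding width
N_LAYER = 12     # assumed number of transformer blocks


def gpt2_param_count(vocab: int, context: int, d: int, n_layer: int) -> int:
    """Count parameters of a GPT-2-style model with tied input/output embeddings."""
    token_emb = vocab * d
    pos_emb = context * d
    per_block = (
        2 * d                  # ln_1 weight + bias
        + d * 3 * d + 3 * d    # fused q/k/v projection
        + d * d + d            # attention output projection
        + 2 * d                # ln_2 weight + bias
        + d * 4 * d + 4 * d    # MLP up-projection
        + 4 * d * d + d        # MLP down-projection
    )
    final_ln = 2 * d           # final layer norm; output head reuses token_emb
    return token_emb + pos_emb + n_layer * per_block + final_ln


def steps_per_epoch(train_tokens: int, tokens_per_batch: int) -> int:
    """Full batches per epoch, dropping the leftover partial batch (assumption)."""
    return train_tokens // tokens_per_batch


if __name__ == "__main__":
    params = gpt2_param_count(VOCAB, CONTEXT, D_MODEL, N_LAYER)
    steps = steps_per_epoch(5145, 256)
    print(f"parameters: {params:,}")                 # 124,439,808
    print(f"steps per epoch: {steps}")               # 20
    print(f"total steps (10 epochs): {steps * 10}")  # 200
```

If the final partial batch (25 tokens) is kept rather than dropped, each epoch has 21 steps and the run totals 210 steps.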